Disasters take practice

This weekend's news reports of earthquakes and tsunami warnings, on top of this winter's snowstorms, made me think about DR/BCP (disaster recovery and business continuity planning) and procedures. I realize that auditors require a DR program to be written, but can you really run your organization on that document? I've seen some doozies.

Do you test your DR program annually? To be effective, testing must take place at least once a year. No one really wants to do a DR exercise, and no one is going to beat your door down to volunteer for a practice run, so you may have to get creative to get people involved. Hold a contest to come up with the disaster scenario; different meeting dynamics and brainstorming techniques can help spark creativity. Get some people from HR, PR and legal to share the task. If you try really hard, you can make it just a little bit more fun and build some camaraderie and teamwork that will pay off if you do experience a significant event.

I realize that some smaller businesses just may not have the time to invest in a full-fledged exercise. (Please try.) If you feel you fall into this category and you just cannot carve out the time, you owe it to yourself to make sure every position in your IT operations department documents its daily activities in procedure format.

Some examples are below; I know you can think of a lot more that keep you up at night.

What steps does your backup operator take to perform a restore?

Where are the backups stored, and how often are they audited for accuracy?

How often are your security programs monitored?

What is the procedure for a DoS? Virus infection? Disgruntled employee?

Who on your legal team would you call? Do they know you?

Is your incident response team call sheet up to date?
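One way to make "audited for accuracy" concrete is to hash every file when the backup is taken and compare a periodic test restore against that manifest. The sketch below assumes the backup and the test restore are both plain directory trees; the function names are hypothetical and not tied to any particular backup product.

```python
import hashlib
from pathlib import Path
from typing import Dict, List


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large backup files never need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(source_dir: Path) -> Dict[str, str]:
    """Record a hash for every file under source_dir, keyed by relative path."""
    return {
        str(p.relative_to(source_dir)): sha256_of(p)
        for p in sorted(source_dir.rglob("*"))
        if p.is_file()
    }


def audit_restore(manifest: Dict[str, str], restore_dir: Path) -> List[str]:
    """Compare a test restore against the manifest and list every problem found."""
    problems = []
    for rel_path, expected in manifest.items():
        restored = restore_dir / rel_path
        if not restored.is_file():
            problems.append(f"missing: {rel_path}")
        elif sha256_of(restored) != expected:
            problems.append(f"mismatch: {rel_path}")
    return problems
```

An empty list from `audit_restore` means the restore matched what was backed up; anything else belongs in the audit record along with the date of the test.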

Bad news is just a headline away for someone. Resolve to be prepared.

Mari Nichols, Handler on Duty

Search Engine Poisoning: Chile Earthquake

You probably heard about the major earthquake in Chile last night. So have the malware writers who engage in search engine poisoning. Search Google for "Chile Earthquake" and you will find a number of malware sites, or sites like "Qooglesearch.com", on the first page. As legitimate charities start to use these keywords, the poisoned results may be pushed back a bit and show up under other related keywords.

As usual, let us know if you find any odd sites related to this. So far the only thing I am seeing is the fake AV / malware push via search engine poisoning.
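Names like "Qooglesearch.com" sit one character away from a name people trust. A minimal sketch for flagging such lookalikes uses edit (Levenshtein) distance against a watch list; the watch list, the distance threshold and the function names are illustrative assumptions, not an ISC tool.

```python
from typing import Iterable, Optional


def levenshtein(a: str, b: str) -> int:
    """Classic dynamic-programming edit distance (insert, delete, substitute)."""
    previous = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        current = [i]
        for j, cb in enumerate(b, start=1):
            current.append(min(
                previous[j] + 1,               # deletion
                current[j - 1] + 1,            # insertion
                previous[j - 1] + (ca != cb),  # substitution
            ))
        previous = current
    return previous[-1]


def lookalike_of(domain: str, watch_list: Iterable[str], max_distance: int = 2) -> Optional[str]:
    """Return the watched name that domain imitates, or None.

    Naively compares the second-to-last label, so suffixes like .co.uk are not handled.
    """
    parts = domain.lower().split(".")
    label = parts[-2] if len(parts) >= 2 else parts[0]
    for name in watch_list:
        distance = levenshtein(label, name.lower())
        if 0 < distance <= max_distance:  # 0 would be the genuine name itself
            return name
    return None
```

A small threshold keeps false positives down; a distance of 1 already catches single-letter swaps like "q" for "g".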

------

Johannes B. Ullrich, Ph.D.

SANS Technology Institute



PHP 5.2.13 Security Update

PHP has released PHP 5.2.13, which fixes over 40 bugs, including 3 security issues. The changelog and the packages are available from php.net.
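If you inventory PHP versions across servers, a quick comparison against 5.2.13 can flag 5.2.x hosts that still need the update. This is a minimal sketch assuming you already have version strings (for example from `php -v` output); it only covers the 5.2 branch discussed here, and distribution packages that backport fixes without changing the upstream version number will be flagged even when patched.

```python
from typing import Tuple

FIXED_5_2 = (5, 2, 13)  # first 5.2.x release carrying the fixes above


def parse_version(version: str) -> Tuple[int, ...]:
    """Keep the leading digits of each component: "5.2.12-1ubuntu" -> (5, 2, 12)."""
    parts = []
    for piece in version.split(".")[:3]:
        digits = ""
        for ch in piece:
            if not ch.isdigit():
                break
            digits += ch
        parts.append(int(digits) if digits else 0)
    return tuple(parts)


def needs_5_2_update(version: str) -> bool:
    """True for 5.2.x versions older than 5.2.13; other branches are out of scope here."""
    v = parse_version(version)
    return v[:2] == (5, 2) and v < FIXED_5_2
```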

-----------

Guy Bruneau IPSS Inc. gbruneau at isc dot sans dot org

If you would like to attend the SANS SEC 503 course in French in May 2010 in Nice, France, follow this link.


New version of dnsmap

dnsmap v0.30 has been released. For those of you who are not familiar with it, dnsmap is a DNS reconnaissance tool useful in the reconnaissance phase of penetration tests. dnsmap can reduce the manual effort required for DNS enumeration and discovery, and often reduces or eliminates the need for traditional whois lookups and scanning.

More information is available at the GnuCitizen Blog.

From the blog, here is a list of some of the new features.

- IPv6 support

- delay option (-d) added. This is useful in cases where dnsmap is killing your bandwidth

- ignore IPs option (-i) added. This allows ignoring user-supplied IPs from the results. Useful for domains which cause dnsmap to produce false positives

- changes made to make dnsmap compatible with OpenDNS

- disclosure of internal IP addresses (RFC 1918) is reported

- updated built-in wordlist

- included a standalone three-letter acronym (TLA) subdomains wordlist

- domains susceptible to “same site” scripting are reported

- completion time is now displayed to the user

- mechanism to attempt to bruteforce wildcard-enabled domains

- unique filename containing timestamp is now created when no specific output filename is supplied by user
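To illustrate what several of these features do, here is a minimal wordlist brute-force sketch with a delay between queries, a user-supplied list of IPs to ignore, a simple wildcard check, and RFC 1918 reporting. It is not dnsmap's code, and dnsmap's actual wildcard handling may differ; the function names are hypothetical, and the resolver is a parameter so it can be swapped out for testing.

```python
import ipaddress
import socket
import time
import uuid
from typing import Callable, Dict, Iterable, List

# RFC 1918 private ranges; ipaddress's is_private is broader (loopback, link-local, ...),
# so the three blocks are listed explicitly.
RFC1918 = [ipaddress.ip_network(n) for n in ("10.0.0.0/8", "172.16.0.0/12", "192.168.0.0/16")]

Resolver = Callable[[str], List[str]]


def system_resolve(name: str) -> List[str]:
    """Return the IPv4/IPv6 addresses the system resolver gives for name (empty if none)."""
    try:
        infos = socket.getaddrinfo(name, None)
    except socket.gaierror:
        return []
    return sorted({info[4][0] for info in infos})


def is_rfc1918(addr: str) -> bool:
    ip = ipaddress.ip_address(addr)
    return ip.version == 4 and any(ip in net for net in RFC1918)


def enumerate_subdomains(domain: str, words: Iterable[str], resolve: Resolver = system_resolve,
                         delay: float = 0.0, ignore_ips: Iterable[str] = ()) -> Dict[str, List[str]]:
    """Try word.domain for every word; similar in spirit to dnsmap's -d and -i options."""
    ignored = set(ignore_ips)
    # Wildcard check: a random label should not exist. If it resolves, the domain is
    # wildcard-enabled and those addresses are treated as noise (real hosts sharing
    # that address are hidden too; this is a simplification).
    ignored.update(resolve(f"{uuid.uuid4().hex}.{domain}"))
    found: Dict[str, List[str]] = {}
    for word in words:
        name = f"{word}.{domain}"
        addrs = [a for a in resolve(name) if a not in ignored]
        if addrs:
            found[name] = addrs
        if delay > 0:
            time.sleep(delay)  # throttle queries to spare bandwidth
    return found


def internal_disclosures(found: Dict[str, List[str]]) -> Dict[str, List[str]]:
    """Names that publicly resolve to RFC 1918 addresses."""
    leaks = {name: [a for a in addrs if is_rfc1918(a)] for name, addrs in found.items()}
    return {name: addrs for name, addrs in leaks.items() if addrs}
```

Against a real target you would call something like `enumerate_subdomains("example.com", ["www", "mail", "vpn"], delay=0.5)`, and only for domains you are authorized to test.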

-- Rick Wanner - rwanner at isc dot sans dot org


Microsoft, restraining orders, and how a big botnet (Waledec) ate curb.

*Disclaimer: The title may not end up being 100% accurate, as parts of Waledec may resurface at some point in time.*

Microsoft just broke some major ground in the fight against botnets (Waledec in this case) by taking civil legal action against a botnet's owners to get its domain names pulled. While this may not sound very sexy or amazing on the surface, it is in my view an extremely important step in the fight against these threats. For the first time, an organization that is affected by malicious code has taken it upon itself to protect not only its own reputation, but also the resources and reputations of its customers, by leveraging the civil legal system.

See, the trouble with Waledec is that all the domains it used for C2 were registered in the .com/.net TLDs, and Verisign is somewhat notorious for removing domains only under court order, no matter how blatant the criminal activity. While this stance is semi-understandable from a legal perspective (if you are, IMHO, a lazy lawyer), it has little place in the current threat landscape (or even the domain industry). Over the last 3-5 years, an increasing number of registries and registrars have put proper abuse mechanisms in place (including legal/technical frameworks to deal with liability issues) to handle malicious domains. Some TLD registries go so far as to proactively monitor their domain space for malicious activity, taking down sites within the first few minutes or hours of their existence.

As this industry has moved towards this sort of self-policing (let's not forget that regulation/policy scares the mightiest of CEOs), there has been one 900lb gorilla that has held out. Within the domain industry, Verisign's lack of initiative has meant much slower adoption of these sorts of policies and procedures within several ccTLDs (country code TLDs) that have used Verisign as an example. This has, of course, opened up an opportunity for ICANN to play a role in helping the industry along (http://www.icann.org/en/announcements/announcement-2-12feb10-en.htm). If only Verisign could be made to understand the impact that its acceptance of these processes would have in curbing the rampant flow of criminal activity in its domains. Hopefully its senior leadership will recognize that spending a bit of their legal department's time being creative and proactive may save them a whole lot of face. It is pretty bad when two organizations (MS and ICANN) that have classically been way behind the curve on security-related issues are so far ahead of the one organization that prides itself on "selling security" (Verisign).

Don't get me wrong, Verisign has done a lot of things right in regards to its participation in this sort of activity in the past (the Conficker Working Group comes to mind). It just reserved that ability for exceptional circumstances like Conficker. It is my hope that MS has cleared the path for more organizations to leverage this ruling to achieve the same goals. In a perfect world, Verisign would simply have in place some of the same (or similar) controls for mitigating malicious domains in its domain space.

More details on the technical aspects of these actions will no doubt come forward, as MS was not the only party involved in this effort. As those details come out, the list of kudos will no doubt grow to encompass academics, non-profits, and commercial organizations. As the technically inclined may already know, Waledec has a multi-tiered command and control setup (direct HTTP C2, as well as p2p), which was no doubt addressed in this effort. (See the links below for some reading on the p2p side of Waledec.)

So for what it is worth, kudos to Microsoft for leveraging its legal pit bulls for good! Thanks should also be given to those who worked behind the scenes to make this happen with their technical analysis and countermeasures.

To remove Waledec from a machine, feel free to use Microsoft's free Malicious Software Removal Tool located at the link below.

http://www.microsoft.com/security/malwareremove/default.aspx

You can read more about this here:

http://blogs.technet.com/microsoft_blog/archive/2010/02/25/cracking-down-on-botnets.aspx

Actual court paperwork can be found here. (interesting read)

http://www.microsoft.com/presspass/events/rsa/docs/complaint.pdf

Waledec p2p paper/info

http://honeyblog.org/archives/44-Walowdac-Analysis-of-a-Peer-to-Peer-Botnet.html


Pass The Hash

I've always loved the offensive side of security. Give me permission and a network to break into and I'm a happy guy.

One of my favorite techniques is the "pass the hash" attack.

Why bother spending precious time cracking a password if you can simply provide the target system what it's already expecting, a hash?

Recent tool advances make this a much easier attack to perform than it has been in the past and it is more likely than ever that attackers are using this technique on your systems.
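The reason the hash is enough: in a challenge-response scheme keyed on the stored hash, the server never needs the plaintext, so neither does anyone holding the hash. The toy below shows only that structure. SHA-256 and HMAC-SHA256 are stand-ins chosen for clarity; the real NT hash is MD4 over the UTF-16LE password and the real NTLM response computation differs. All class and function names are hypothetical.

```python
import hashlib
import hmac
import os
from typing import Optional


def toy_password_hash(password: str) -> bytes:
    """Stand-in for the stored password hash (NOT the real NT hash, which is MD4)."""
    return hashlib.sha256(password.encode("utf-16-le")).digest()


def toy_response(password_hash: bytes, challenge: bytes) -> bytes:
    """Stand-in for a challenge-response computed from the hash alone (NOT real NTLM)."""
    return hmac.new(password_hash, challenge, hashlib.sha256).digest()


class ToyServer:
    def __init__(self, password: str) -> None:
        # Only the hash is kept; this is exactly what an attacker dumps from a compromised host.
        self.password_hash = toy_password_hash(password)
        self._challenge: Optional[bytes] = None

    def issue_challenge(self) -> bytes:
        self._challenge = os.urandom(8)
        return self._challenge

    def verify(self, response: bytes) -> bool:
        if self._challenge is None:
            return False
        expected = toy_response(self.password_hash, self._challenge)
        self._challenge = None  # one response per challenge
        return hmac.compare_digest(response, expected)
```

An attacker who copies `password_hash` can answer `issue_challenge()` with `toy_response(stolen_hash, challenge)` and pass `verify()` without ever learning the password, which is why mitigations focus on protecting and limiting the reuse of hashes rather than on password strength alone.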

Bashar Ewaida completed a nice Gold paper on the subject in the SANS Reading Room.

If you're not familiar with this technique, the tools that can be used or how to mitigate the attack, take a look at Bashar's paper.

Christopher Carboni - Handler On Duty


What is your firewall telling you and what is TCP249?

As part of our operational processes, there is typically a line item stating "Review Firewall Logs". This is a requirement in many different standards, and most people will have it down as a task to do. However, we all know that there are way more interesting things to do than looking at log files. Unless you have some nice tools, it is one task that soon drives Junior mad.

In trying to save Junior's sanity and basically because I am one of those people that actually likes looking at logs (I know, I have no life) I was going through some firewall logs. They never disappoint. There are the usual port scans happening for various ports:

217.10.127.105 13845 aaa.bbb.ccc.0 22 TCP
217.10.127.105 13845 aaa.bbb.ccc.6 22 TCP
217.10.127.105 13845 aaa.bbb.ccc.21 22 TCP
217.10.127.105 13845 aaa.bbb.ccc.12 22 TCP
217.10.127.105 13845 aaa.bbb.ccc.20 22 TCP
217.10.127.105 13845 aaa.bbb.ccc.10 22 TCP
217.10.127.105 13845 aaa.bbb.ccc.41 22 TCP
...

The usual hits on ports 135/137/139/445/1433/1434/25 can be found in the log file, and at the moment there are plenty of hits on 3306 for MySQL. More unusual ports to be hit were UDP 7 and TCP 249. UDP 7 was associated with some checks from a university (http://isc.sans.org/diary.html?storyid=4660), but I have yet to confirm this is the case here. TCP 249, however, isn't that common, and if you do have some captures of traffic to TCP 249, I'd be interested in seeing them. There were also a number of high ports being hit; after chasing these down, I found that in this environment they were associated with torrent and Skype traffic.
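If grep alone gets tedious, a few lines of Python can tally hits per port and flag horizontal scans like the port 22 sweep above. This sketch assumes the five whitespace-separated fields shown in the excerpt (source IP, source port, destination, destination port, protocol); real firewall exports will need their own parser, and the function names and the `min_targets` threshold are illustrative.

```python
from collections import Counter, defaultdict
from typing import Dict, Iterable, Optional, Set, Tuple

Record = Tuple[str, int, str, int, str]  # (src, sport, dst, dport, proto)


def parse_line(line: str) -> Optional[Record]:
    """Parse 'src sport dst dport proto'; return None for anything else (e.g. '...')."""
    fields = line.split()
    if len(fields) != 5 or not (fields[1].isdigit() and fields[3].isdigit()):
        return None
    src, sport, dst, dport, proto = fields
    return src, int(sport), dst, int(dport), proto.upper()


def port_counts(lines: Iterable[str]) -> Counter:
    """Hits per (protocol, destination port)."""
    counts: Counter = Counter()
    for line in lines:
        rec = parse_line(line)
        if rec:
            counts[(rec[4], rec[3])] += 1
    return counts


def horizontal_scanners(lines: Iterable[str], min_targets: int = 5) -> Dict[Tuple[str, str, int], int]:
    """Sources that hit the same port on at least min_targets distinct hosts."""
    targets: Dict[Tuple[str, str, int], Set[str]] = defaultdict(set)
    for line in lines:
        rec = parse_line(line)
        if rec:
            src, _sport, dst, dport, proto = rec
            targets[(src, proto, dport)].add(dst)
    return {key: len(dsts) for key, dsts in targets.items() if len(dsts) >= min_targets}
```

`port_counts(...).most_common(20)` gives a quick "top ports" view, and unusual entries near the bottom of the full list (like TCP 249) are often the interesting ones.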

It is also interesting to check the outbound traffic. Having a look at what is trying to leave the network can be enlightening. Torrent traffic seems to be fairly prevalent in the logs I'm looking at; alas, all it highlighted is that we'll have to do a regular dump of the NAT table so we can correlate the information and tag the internal user who is being silly. The logs can show you which machines talk to the internet and for what reason. They teach you what is normal in your network, something not easily achieved by using automated tools. People are still better than machines at identifying "weird". If you have the capacity, you should consider logging not just denied traffic but also allowed traffic on the firewall. Many attacks will try to sneak through, and if you log all traffic you may be able to identify them.
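Correlating a NAT table dump with outbound log entries can be sketched as a lookup from the translated (outside IP, port, protocol) back to the inside address. The dump format below (inside IP, inside port, outside IP, outside port, protocol) is a hypothetical assumption; each firewall exports this differently. Because NAT entries expire and ports get reused, the dump has to be taken close in time to the log entries, which is why a regular dump is needed.

```python
from collections import Counter
from typing import Dict, Iterable, Tuple

NatKey = Tuple[str, int, str]  # (outside_ip, outside_port, protocol)


def load_nat_table(lines: Iterable[str]) -> Dict[NatKey, str]:
    """Parse a hypothetical dump: 'inside_ip inside_port outside_ip outside_port proto'."""
    table: Dict[NatKey, str] = {}
    for line in lines:
        fields = line.split()
        if len(fields) != 5 or not (fields[1].isdigit() and fields[3].isdigit()):
            continue
        inside_ip, _inside_port, outside_ip, outside_port, proto = fields
        table[(outside_ip, int(outside_port), proto.upper())] = inside_ip
    return table


def tag_internal_hosts(fw_lines: Iterable[str], nat_table: Dict[NatKey, str]) -> Counter:
    """Count outbound entries (translated src, sport, dst, dport, proto) per inside host."""
    hits: Counter = Counter()
    for line in fw_lines:
        fields = line.split()
        if len(fields) != 5 or not fields[1].isdigit():
            continue
        src, sport, _dst, _dport, proto = fields
        hits[nat_table.get((src, int(sport), proto.upper()), "unmatched")] += 1
    return hits
```

A large "unmatched" count is itself a hint that the NAT dumps are not frequent enough.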

When Junior starts to shake and make strange noises that indicate all sharp objects need to be removed from the office, take a walk through your logs and get a feel for the "street", as it were. I can guarantee you will learn something. It may be something as exciting as a new attack, or as mundane as finding out some of your processes aren't working or being followed. You could even discover that some of your expensive tools aren't quite telling you the whole truth. Every now and then, take out your command prompt, find the grep man page and go nuts.

Enjoy

Mark H


Not Every Cloud has a Silver Lining

After our post last week (http://isc.sans.org/diary.html?storyid=8251) defining the various "as a Service" terms that people commonly mean when they say "Cloud Computing", there was a spirited discussion around the security aspects of each. I felt it was important to summarize this discussion for our readers; please feel free to add to this topic (or disagree with me) using the comments feature.

So Why are Public Clouds Good?

Public IaaS services are great in several situations:

- All of the "aaS" service models make great sense for any business that needs to reduce IT costs; just be sure to factor all the costs and risks into your business decision

- you have a temporary need for computing power and can farm out some servers as VMs temporarily

- you are not under strict regulatory control that requires audit of the hypervisor in virtual environments

- you are hosting public data

- research, or any other requirement really that needs computing horsepower, but does not house sensitive data

So What Issues Should be Considered Before moving a service to a Public "aaS" Service?

IaaS (Infrastructure as a Service)

The issue that comes up over and over is that if your organization outsources computing resources to an IaaS platform, your data and business applications go with it. Quite often, the protections you might have in place for these valuable resources "at home" - for instance, an IPS (Intrusion Prevention System) or DLP (Data Loss Prevention) solution - do not go with them when they are moved into the cloud.

From an Audit perspective, the disposition of any guests in an outsourced virtual infrastructure is generally not known - it would be common to have virtual machines from several customers running on one physical server for instance. The whole concept of data classification, host classification and network trust zones is very hard to enforce and audit, aside from trusting the management interface of the IaaS provider and logical separation within the Hypervisor.

Also from an Audit perspective, the entire Hypervisor layer is not available to be audited at all. Customers of an IaaS provider have to trust their contract language and the technical security skills of the IaaS provider, and specific security settings generally cannot be known.

On the legal side, your data may be in a different legal jurisdiction than the one your company operates in. This has implications for liability, search and seizure, and privacy. Not only that, but the advanced virtualization functions used by IaaS providers may mean that your VMs migrate to another datacenter entirely in the event of a failure in the IaaS infrastructure. For instance, a disk or communications failure in an Atlanta datacenter might prompt an automated migration of your VM to a datacenter in the EU (with associated changes in the legislation around privacy and search and seizure).

PaaS (Platform as a Service)

The PaaS model targets the core applications within a company: ERP, shop floor control, warehouse management, sales and inventory applications, and things like that. Because the underlying infrastructure for PaaS is very similar to IaaS, all of the same issues apply. A new issue that PaaS brings to the table is "getting your keys back". Once you've outsourced your business application to a third party, getting it back out again might not be as easy as you think. Since the customer writes the actual application in a PaaS model, data formats should not be an issue when migrating back out. But you will need to mount a project that migrates the data and application back to your hardware, and chances are good that hardware will no longer exist. In fact, I've seen clients outsource their entire datacenters, then have to start over from scratch.

SaaS (Software as a Service)

In most SaaS applications, "getting the keys back" is an even larger issue. While the Google Apps SaaS platform does a really great job of "saving as" to common formats, other business-application SaaS services don't always permit easy export of stored data to a common format in an accessible location.

Privacy settings within SaaS infrastructures have been under the microscope in the last year. For instance, many of the original Google Voice adopters found that they had inadvertently set their voicemail to "publicly searchable", a setting that I suspect almost no one would want. Gmail only recently (January 2010) made HTTPS access the default.

Loss of control is another issue that has a good illustration in this space. In the fall of 2009, a bank employee sent a file containing personal and financial information for over 1300 customers to the wrong Gmail account. Sending this kind of information unencrypted over email is probably a clear regulatory violation, but let's not get hung up on that. After realizing the mistake, the bank emailed the mistaken account, asking its owner to delete the first email without reading it. After not receiving an answer, they contacted Google, who (quite rightly) asked for a court order. The bank sued and got the account in question deactivated. What this illustrates is that if you are using any of the "as a Service" models, your data isn't as much yours as it used to be, and getting control of it back may be subject to legal process.

In all models, remember that bandwidth in a local datacenter is often at 1Gbps or 10Gbps. Once moved into a service cloud, access to data might be more convenient, but will certainly be slower.
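The speed difference is easy to put in numbers. The helper below computes transfer time for a given data volume and link speed; the 100 Mbps WAN figure in the usage note is an illustrative assumption, not a measured value, and real throughput will be lower than line rate (the `efficiency` factor).

```python
def transfer_hours(gigabytes: float, link_mbps: float, efficiency: float = 1.0) -> float:
    """Hours to move `gigabytes` (decimal GB) over a `link_mbps` link at the given efficiency."""
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    bits = gigabytes * 8 * 1000 ** 3
    seconds = bits / (link_mbps * 1000 ** 2 * efficiency)
    return seconds / 3600
```

At line rate, 1 TB takes about 2.2 hours at 1 Gbps and roughly 13 minutes at 10 Gbps, but about 22 hours over an assumed 100 Mbps internet link.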

Transport to our computing and data resources is now over the internet rather than over a private network. While the BGP-based routing architecture of the internet lends itself to routing around trouble spots, it's not unusual to have intermittent communications issues, localized outages due to fiber cuts, or even widespread outages. Remember: even 99.9% uptime still means almost 9 hours of downtime per year.
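The downtime figure comes from simple arithmetic: the unavailable fraction times the hours in a year. A small helper makes it easy to compare SLA levels (leap years ignored).

```python
HOURS_PER_YEAR = 365 * 24  # 8760 hours; leap years ignored


def downtime_hours_per_year(availability_percent: float) -> float:
    """Allowed downtime per year for a given availability percentage."""
    if not 0 <= availability_percent <= 100:
        raise ValueError("availability must be a percentage between 0 and 100")
    return HOURS_PER_YEAR * (100 - availability_percent) / 100


if __name__ == "__main__":
    for level in (99.0, 99.9, 99.99):
        print(f"{level}% -> {downtime_hours_per_year(level):.2f} h/year")
```

99.9% works out to 8.76 hours per year; each additional "nine" cuts that by a factor of ten.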

After all our effort on NAC and two-factor authentication, most "as a Service" infrastructures bring us back to simple user IDs and passwords for access to our computing and data resources. Just keep in mind that in this model, a combination like "jsmith / m0nday" could be all that stands between your data and your competitor.

So, to sum things up, IaaS, PaaS and SaaS services all offer great solutions for business, but none of them come without risk. It's important to actually *read* your contract with these issues in mind before signing up, both from business risk and legal perspectives.

=============== Rob VandenBrink, Metafore ===============


New Risks in Penetration Testing

In a recent IPS (Intrusion Prevention System) deployment, I noticed that the newest version of the OS for the appliance I was putting in had a new feature - "Reputation Filtering". How this works from a customer point of view is:

- if an inbound attack is seen, the IPS reports the attacker and the attack back to the reputation service. This affects the reputation of the attacking IP address

- The reports of all users of the reputation service are aggregated, and attackers are "scored"

- Traffic inbound into the network is evaluated against the reputation database, such that traffic from lower reputation addresses is penalized from an IPS detection perspective
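The vendor's actual scoring algorithm is not described here, so the following is only a hypothetical sketch of the three steps: subscribers report attackers, reports are aggregated into a per-address score, and a worse score lowers the IPS detection threshold for traffic from that address. The severity scale, the one-vote-per-reporter rule and the threshold formula are all assumptions.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable


@dataclass(frozen=True)
class Report:
    reporter: str       # subscriber whose IPS saw the attack
    attacker_ip: str
    severity: int       # hypothetical scale: 1 (low) to 5 (high)


def score_attackers(reports: Iterable[Report]) -> Dict[str, int]:
    """Aggregate all subscribers' reports into one score per attacking address.

    Each reporter contributes only its worst report per attacker, so a single
    noisy sensor cannot drive an address's score up on its own.
    """
    worst: Dict[str, Dict[str, int]] = defaultdict(dict)
    for r in reports:
        seen = worst[r.attacker_ip]
        seen[r.reporter] = max(seen.get(r.reporter, 0), r.severity)
    return {ip: sum(per_reporter.values()) for ip, per_reporter in worst.items()}


def detection_threshold(source_ip: str, scores: Dict[str, int],
                        base: float = 10.0, penalty: float = 1.0, floor: float = 1.0) -> float:
    """Lower threshold means the IPS fires on weaker evidence from that source."""
    return max(floor, base - penalty * scores.get(source_ip, 0))
```

The pentesting consequence follows directly: every IPS you test against may report your source address, and its score follows that address into every other network using the same service.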

Since I work on the attack side of things as well as the defence side, this got me thinking about Penetration Testing and Vulnerability Assessments. It means that when pentesting, care should be taken in selecting the public IP address that you mount attacks from. If you attack from home or from a free desk at work, you may find that, because of this new Reputation Filtering feature, you've just blocklisted an IP address that you need every day to do "real work". You might be blocklisting your entire company or, even worse, your spouse (from personal experience: you never want to do this!).

This adds another factor into the process of deciding where exactly you should run a Penetration Test or Vulnerability Assessment from. Other factors might include:

- ensuring that your ISP does not filter suspicious traffic, or in fact any ISP between you and your target

- ensuring that your activity is actually legal on all ISPs between you and your target

- if using GHDB (Google Hacking Database) methods, you can blocklist your public IP with Google (spouses hate this too!)

- if the client uses load balancers, you may find that subsequent tests run against different hosts

All these factors conspire to move your penetration test or vulnerability assessment as close as possible to the target systems. Using the same ISP as your target is often a reasonable solution, but if you can negotiate it, using a free IP address and switch port on your target's external network takes care of many of these issues nicely.

=============== Rob VandenBrink, Metafore ===============


TCP Port 12174 Request For Packets

On a very slow day, I have had a chance to catch up on some of the trends over the past few months, and something interesting caught my eye: a sharp increase in port 12174 traffic over the past 48 hours has appeared on the radar. I know TCP port 12174 has appeared a few times in the past; I am checking to see if this is an old dog, or an old dog with new tricks. If anybody has any packet captures for this port, please submit them via the Contacts page.
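Before submitting, you may want to confirm a capture actually contains traffic on the port. This sketch reads a classic libpcap file (not pcapng) with Ethernet framing using only the standard library and lists IPv4/TCP packets to or from a given port; VLAN-tagged frames and IPv6 are skipped, and the function names are hypothetical.

```python
import struct
from typing import BinaryIO, Iterator, List, Tuple

TARGET_PORT = 12174
Packet = Tuple[str, str, int, int]  # (src_ip, dst_ip, src_port, dst_port)


def iter_tcp_packets(f: BinaryIO) -> Iterator[Packet]:
    """Yield IPv4/TCP endpoints from a classic libpcap file with Ethernet framing."""
    header = f.read(24)
    if len(header) < 24:
        raise ValueError("truncated pcap global header")
    magic = struct.unpack("<I", header[:4])[0]
    if magic == 0xA1B2C3D4:
        endian = "<"
    elif magic == 0xD4C3B2A1:
        endian = ">"
    else:
        raise ValueError("not a classic (microsecond) pcap file; pcapng is not handled")
    if struct.unpack(endian + "I", header[20:24])[0] != 1:
        raise ValueError("only Ethernet link type (1) is handled")
    while True:
        record = f.read(16)
        if len(record) < 16:
            break
        _sec, _usec, incl_len, _orig_len = struct.unpack(endian + "IIII", record)
        frame = f.read(incl_len)
        # Skip anything that is not IPv4 (EtherType 0x0800); VLAN tags are not handled.
        if len(frame) < 34 or frame[12:14] != b"\x08\x00":
            continue
        ip = frame[14:]
        ihl = (ip[0] & 0x0F) * 4
        fragment_offset = struct.unpack("!H", ip[6:8])[0] & 0x1FFF
        if ihl < 20 or ip[9] != 6 or fragment_offset != 0 or len(ip) < ihl + 4:
            continue  # not TCP, or no TCP header in this packet
        src = ".".join(str(b) for b in ip[12:16])
        dst = ".".join(str(b) for b in ip[16:20])
        sport, dport = struct.unpack("!HH", ip[ihl:ihl + 4])
        yield src, dst, sport, dport


def packets_on_port(f: BinaryIO, port: int = TARGET_PORT) -> List[Packet]:
    """All TCP packets whose source or destination port matches."""
    return [p for p in iter_tcp_packets(f) if port in (p[2], p[3])]
```

Open the capture in binary mode, e.g. `with open(path, "rb") as f: hits = packets_on_port(f)`, where `path` is your own capture file.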

Thanx,

Tony Carothers

tony d0t carothers at isc,sans,org


Looking for "more useful" malware information? Help develop the format.

The "Introduction to MAEC White Paper" (Malware Attribute Enumeration and Characterization) has been released.

The paper describes the continuing development of "a standardized language for encoding and communicating high-fidelity information about malware based upon attributes such as behaviors, artifacts, and attack patterns". The paper includes "discussion of our Conficker characterization and problems/issues we face in the development of MAEC that may be of interest".

If you're interested in participating, even occasionally via list participation, it's appreciated. Contact information is at the MAEC Working Group site.

Malware Attribute Enumeration and Characterization (MAEC™)


Cyber Shockwave

At 8 pm EST (0100 UTC) on February 20th and 21st CNN will air a program called "Cyber Shockwave" which was filmed last Tuesday in Washington, D.C. I was invited to be in the studio audience during the taping of the program. I am frankly disappointed with the way it turned out. First, the scenario used as a backdrop is not realistic. The presumption is that a smartphone application is used to crash large portions of the nation's cellular phone system, which then leads to outages in the POTS (plain old telephone system) networks, which leads to loss of air traffic control, disruptions at the New York Stock Exchange, and massive power outages. As most of our readers know, such a cascading effect across multiple networks and systems is not likely. Not saying it's impossible, just not likely. The second issue is the fact that the people playing the role of National Security Council members failed to recognize the role of the private sector until well into the second hour. The government does not own or operate the communications infrastructure in the United States. To leave the private sector out of the conversation is a massive oversight. To be fair, the panel does recognize that the private sector has a role, but it comes after a long deliberation about how helpful the government should be.

My fear is that the average viewer will come away from this program convinced that the scenario is real (after all, why would CNN show something that is not real?) and that only the government can help lead us into a world of peaceful coexistence in cyberspace. As most (hopefully all) of our readers know, cyberspace is very complex and security comes not from just the private sector or just the government but jointly, with each party playing a very important role.

I invite you to watch the program then post your comments or thoughts below using the COMMENT feature.

PS: Watch the two maps, the one of the cell phone outages and the one of the electric grid failures. The cell phone maps show "green" where there is 100% operation, including areas of the country where there is no coverage at all. The electric power map is actually a map of the highway system. Watch the highways go dark later in the simulation. I've never seen highways go dark during a power failure (unless it's at night).

Marcus Sachs

Director, SANS Internet Storm Center


Is "Green IT" Defeating Security?

I was reading my morning newspaper one day this past week (a real treat since my cataract surgeries) and came upon several articles concerning a local municipality that experienced a self-imposed DoS due to a massive malware infection. The CIO explained that "curiously, only those employees who had turned off their computers at night were infected". Now, in security, we understand fully why this happened, and it is not curious at all. This statement brought back memories of the many cost-conscious "green" department heads I have encountered who, with good intentions, asked their employees to turn off their computers at night to save money and the planet. Hey, I'm as green as the next guy, but at some point, penny pinching and IT just don't mix.

Maybe we aren't explaining this situation well enough (more likely, CIO support for security was non-existent), but it seems to me that the IT security department at this municipality needed to explain to the CIO, and advise city employees, that the majority of security updating is done during off hours so as not to interfere with production. Yes, we do have ways to kick off updates after the computer is turned on in the morning, but at the same time, we have allowed production requirements to interfere with those updates by letting users stop scans or generally override any security setting that may interfere with production. That said, our main responsibility must be to keep our domains as up to date as possible to combat the barrage of morphing attacks. And we realize even that isn't enough when that one "green guy" opens an infected PDF file or is redirected to a malware-spewing site, one that directs attacks at the third-party software we can't find the budget or time to patch with any regularity.
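One way to catch the machines that were switched off during the nightly window is to compare each host's last successful update time against the start of the most recent window, then push those hosts a catch-up update when they next boot. The sketch assumes you can export a host-to-last-update mapping from your patch or AV console; the 02:00 window and the host names are hypothetical.

```python
from datetime import datetime, time, timedelta
from typing import Dict, List


def most_recent_window(now: datetime, window_start: time) -> datetime:
    """Start of the latest nightly maintenance window at or before now."""
    start = datetime.combine(now.date(), window_start)
    if start > now:
        start -= timedelta(days=1)
    return start


def missed_last_window(last_update: Dict[str, datetime], now: datetime,
                       window_start: time = time(2, 0)) -> List[str]:
    """Hosts whose last successful update predates the most recent window."""
    cutoff = most_recent_window(now, window_start)
    return sorted(host for host, ts in last_update.items() if ts < cutoff)
```

Run each morning, the resulting list is exactly the set of "green" machines that need attention before their users start browsing.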

The recent ZeusBot revelations (not news to us) and the whole Google/China mess show what can happen when employees are not educated about their role in keeping the enterprise secure. Employees must have the "big picture" to be of any help. Counting on updating our AV program is just not a viable methodology any more. While it is imperative that we keep doing our jobs by keeping definitions as up to date as possible (and preventing overrides of security settings), we are still back to the subject of application patching. All the glorious AV definitions in the world will not prevent an employee from making that search that redirects, or opening an attachment that starts the proverbial ball rolling toward weeks of clean-up and bad press via media hype.

Maybe the publicity helps our cause. At one point I did believe that. Do you think we are still making inroads with the non-security folks through continuous media exposure? Or is it just possible that the public and our CIOs have come to accept these violations as a way of life? I'd like to hear your comments.

Mari Nichols

Handler on Duty


MS10-015 may cause Windows XP to blue screen (but only if you have malware on it)

Last week we received quite a number of reports that the patch for MS10-015 was causing XP machines to display the dreaded BSOD (http://isc.sans.org/diary.html?storyid=8209). The comments on that diary already suggested that the BSOD may have been related to a rootkit on the machine, and it looks like this was correct. If you were infected with the TDL3/TDSS/tidserv (AKA Alureon) rootkit and applied the patch, you would get the BSOD because the patch changed some pointers and the malware then tried to execute an invalid instruction.

Lucky for us, the malware writers have addressed this issue, and it shouldn't happen again for those who are newly infected with this particular piece of malware. A shame really, as it was a convenient way to identify infected machines. If you did get the BSOD on your machine or on machines in your organisation, then you should consider the possibility that the machines are infected.

Marco's page (http://www.prevx.com/blog/143/BSOD-after-MS-TDL-authors-apologize.html ) and the Microsoft page (http://blogs.technet.com/mmpc/archive/2010/02/17/restart-issues-on-an-alureon-infected-machine-after-ms10-015-is-applied.aspx) go into the details.

Mark


Cisco Security Agent Security Updates: cisco-sa-20100217-csa

From the advisory, specific CSA versions and components are vulnerable to SQL injection and directory traversal (allowing unauthorized config changes for instance), as well as a DOS (Denial of Service) condition.

Cisco Security Agent releases 5.1, 5.2 and 6.0 are affected by the SQL injection vulnerability. Only Cisco Security Agent release 6.0 is affected by the directory traversal vulnerability. Only Cisco Security Agent release 5.2 is affected by the DoS vulnerability.

Note: Only the Management Center for Cisco Security Agents is affected by the directory traversal and SQL injection vulnerabilities. The agents installed on user end-points are not affected.

Only Cisco Security Agent release 5.2 for Windows and Linux, either managed or standalone, is affected by the DoS vulnerability.

Standalone agents are installed in the following products:

* Cisco Unified Communications Manager (CallManager)

* Cisco Conference Connection (CCC)

* Emergency Responder

* IPCC Express

* IPCC Enterprise

* IPCC Hosted

* IP Interactive Voice Response (IP IVR)

* IP Queue Manager

* Intelligent Contact Management (ICM)

* Cisco Voice Portal (CVP)

* Cisco Unified Meeting Place

* Cisco Personal Assistant (PA)

* Cisco Unity

* Cisco Unity Connection

* Cisco Unity Bridge

* Cisco Secure ACS Solution Engine

* Cisco Internet Service Node (ISN)

* Cisco Security Manager (CSM)

Note: The Sun Solaris version of the Cisco Security Agent is not affected by these vulnerabilities.
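The affected-release statements above can be encoded as a small lookup for inventory scripts. This sketch captures only what this summary says at the release level (5.1, 5.2, 6.0); it does not know which builds within a release are fixed, so the advisory's version matrix remains the authority. The function and label names are hypothetical.

```python
from typing import Set

SQLI_RELEASES = {"5.1", "5.2", "6.0"}   # Management Center only
TRAVERSAL_RELEASES = {"6.0"}            # Management Center only
DOS_RELEASES = {"5.2"}                  # agents, managed or standalone
DOS_PLATFORMS = {"windows", "linux"}


def csa_exposure(release: str, component: str, platform: str) -> Set[str]:
    """Issues from this advisory summary that apply to one CSA install.

    component: "management_center" or "agent"; platform: e.g. "windows", "linux", "solaris".
    """
    platform = platform.lower()
    found: Set[str] = set()
    if platform == "solaris":
        return found  # the Solaris version is not affected by these vulnerabilities
    if component == "management_center":
        if release in SQLI_RELEASES:
            found.add("sql_injection")
        if release in TRAVERSAL_RELEASES:
            found.add("directory_traversal")
    elif component == "agent":
        if release in DOS_RELEASES and platform in DOS_PLATFORMS:
            found.add("dos")
    else:
        raise ValueError(f"unknown component: {component!r}")
    return found
```

Remember that standalone agents ship inside the products listed above, so a CallManager or Unity server counts as an "agent" install for this check.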

The full advisory, including a matrix of vulnerable and fixed versions, can be found here ==> http://www.cisco.com/warp/public/707/cisco-sa-20100217-csa.shtml

=============== Rob VandenBrink Metafore ===============


Cisco ASA5500 Security Updates - cisco-sa-20100217-asa

Tim reports that Cisco has released a security advisory for Cisco ASA5500 products, outlining some security vulnerabilities and resolutions.

The issues are:

- TCP Connection Exhaustion Denial of Service Vulnerability

- Session Initiation Protocol (SIP) Inspection Denial of Service Vulnerabilities

- Skinny Client Control Protocol (SCCP) Inspection Denial of Service Vulnerability

- WebVPN Datagram Transport Layer Security (DTLS) Denial of Service Vulnerability

- Crafted TCP Segment Denial of Service Vulnerability

- Crafted Internet Key Exchange (IKE) Message Denial of Service Vulnerability

- NT LAN Manager version 1 (NTLMv1) Authentication Bypass Vulnerability

All issues are resolved by upgrading to an appropriate OS version, outlined in a table in the advisory. If that is not possible, workarounds for many of these issues are also provided.

Most of these are DoS (Denial of Service) conditions; however, the authentication bypass issue is much more serious. If your ASA configuration requires NTLMv1 authentication, read this advisory closely and upgrade to the appropriate OS version as soon as possible! A workaround that's not referenced in the Cisco document is switching to RADIUS authentication in place of NTLMv1. If an OS update is not easy to schedule in the near future, this might be a better approach in the short term (or even the long term) than continuing to use NTLMv1.

Find the advisory here ==> http://www.cisco.com/en/US/products/products_security_advisory09186a0080b1910c.shtml

=============== Rob VandenBrink Metafore ===============

Multiple Security Updates for ESX 3.x and ESXi 3.x

A number of ESX 3.x security updates are out today. These are released along with updates affecting several operational (non-security) issues.

ESX 3.5 update for the Python packages, which resolves issues with VM shutdown, ESX350-201002402-SG ==> http://kb.vmware.com/selfservice/microsites/search.do?language=en_US&cmd=displayKC&externalId=1017663

ESX 3.5 update to the libxml packages, addressing remote execution via XML, ESX350-201002407-SG ==> http://kb.vmware.com/selfservice/microsites/search.do?language=en_US&cmd=displayKC&externalId=1017679

Updates for BIND on ESX 3.5, ESX350-201002404-SG ==> http://kb.vmware.com/selfservice/microsites/search.do?language=en_US&cmd=displayKC&externalId=1017679

Updates to resolve a buffer overflow in NTP in ESXi 3.5 (among other things), ESXe350-201002401-I-SG ==> http://kb.vmware.com/selfservice/microsites/search.do?language=en_US&cmd=displayKC&externalId=1017685

Updates for SNMP that affect ESX 3.0.3 - 3.5, ESX350-201002401 ==> http://lists.vmware.com/pipermail/security-announce/2010/000080.html and ==> http://kb.vmware.com/selfservice/microsites/search.do?language=en_US&cmd=displayKC&externalId=1017660

Full details on all of these, along with the operational patches, can be found at ==> https://www.vmware.com/mysupport/portlets/patchupdate/allpatches.html

*** None of these updates affect the current version, vSphere 4.x ***

=============== Rob VandenBrink Metafore ===============

Defining Clouds - "A Cloud by any Other Name Would be a Lot Less Confusing"

So what is cloud computing anyway? Do you find yourself saying, "Am I the only one who is confused about this?"

The short answer is no, you're not the only one confused about this. Most of the people who put the word "Cloud" in their headline are confused as well (except us, of course). Over the last couple of years, the amount of press about "Cloud Computing" has been steadily increasing, as interest in virtualization grows. However, it seems like everyone who writes a "Cloud" article means something different when they use the word, and in many cases it's easy to see some real misunderstanding of key details around the virtualization and service offerings involved.

So, let's dive right in - when someone says "Cloud", they generally mean one of several things:

Colocation Services

Colocation Services (often shortened to "Colo") is the oldest service of the bunch, and essentially offers an empty rack with very fast communications, reliable power and air conditioning. Colos are often serviced by several ISPs (Internet Service Providers). Providing server hardware, firewalls and load balancers is the responsibility of the customer. Colo services generally aren't included when people reference "clouds", but they're included here to provide a more complete picture.

Host as a Service (HaaS) / Infrastructure as a Service (IaaS)

Most commonly called IaaS, this offering uses virtualization to provide computing cycles, memory and storage. IaaS vendors provide the communications, power and environmental controls of a Colo, but also provide the hardware in the form of a "home" for virtual machines (VMs).

The customer provides the VMs, which are uploaded, deployed to the IaaS storage pool and started using a management interface. Because virtualization is used, IaaS clouds can offer enhanced services based on what their virtualization platform has available. For instance, when one VM ramps up its CPU requirements, other VMs on that same server can automatically migrate to a less busy hardware platform, maintaining a consistent profile of available CPU resources for all customers. Similarly, if maintenance is required on a host or storage platform, VMs can be manually migrated away, ensuring constant uptime even during hardware maintenance.

Some IaaS vendors have extended their service to include additional services such as database, queueing, backup and remote storage. This reduces the software license costs for the customers, but of course also increases the monthly costs of the IaaS service.

IaaS services typically charge their clients based on compute usage, data transferred in and out, database requests, storage used, and storage requests and transfers. IaaS vendors include Amazon EC2 (based on Xen), Rackspace and Terremark (based on VMware's vSphere products).
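To make the usage-based billing model concrete, here is a minimal Python sketch of how a monthly IaaS bill adds up across the charge categories listed above. The category names and every per-unit rate in `RATES` are hypothetical placeholders, not any vendor's actual pricing.

```python
from decimal import Decimal
from typing import Mapping, Union

# Hypothetical per-unit rates (USD); real providers publish their own price lists.
RATES = {
    "compute_hours": Decimal("0.10"),          # per instance-hour
    "data_in_gb": Decimal("0.10"),             # per GB transferred in
    "data_out_gb": Decimal("0.15"),            # per GB transferred out
    "db_requests_million": Decimal("1.00"),    # per million database requests
    "storage_gb_month": Decimal("0.10"),       # per GB stored for one month
    "storage_requests_10k": Decimal("0.01"),   # per 10,000 storage requests
}


def monthly_bill(usage: Mapping[str, Union[int, str, Decimal]]) -> Decimal:
    """Sum rate * quantity for each metered category in `usage`."""
    unknown = set(usage) - set(RATES)
    if unknown:
        raise KeyError(f"no rate defined for: {sorted(unknown)}")
    # Decimal avoids binary floating-point rounding in currency arithmetic.
    return sum((RATES[item] * Decimal(qty) for item, qty in usage.items()), Decimal("0"))


bill = monthly_bill({
    "compute_hours": 720,          # one VM running for a 30-day month
    "data_out_gb": 100,
    "storage_gb_month": 50,
    "storage_requests_10k": 100,   # one million storage requests
})
print(bill)  # 93.00
```

Each category is metered independently, which is why a VM that sits idle but pushes a lot of data out can still cost more than expected.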

Computer as a Service (CaaS) and Desktop as a Service (DaaS) are special cases of IaaS. These generally refer to VDI (Virtual Desktop Infrastructure) solutions such as VMware View, which deliver a desktop operating system such as Windows XP, Windows 7 or a desktop Linux OS. These are often used to deliver applications that, for one reason or another, don't belong on the actual physical desktops in the environment. They might be easier to maintain on the server; there might be bandwidth issues, where the VDI traffic overhead might be substantially less than that of the "fat" application; or there might be regulatory requirements, where the data and application can't live on the remote desktop computer in case of theft or compromise.

Platform as a Service (PaaS)

Platform as a Service takes the environment offered by IaaS and adds the operating system, patching, and a back-end interface for applications. The customer uploads their application in a standard format, written to use the PaaS interfaces, and the rest is up to the PaaS vendor. For instance, Microsoft's Azure services platform offers .NET Framework and PHP as back-end interfaces, Google App Engine has back-end interfaces based on Java and Python, and Salesforce.com has proprietary interfaces for business logic and user interface design.

Software as a Service (SaaS)

Software as a Service delivers a fully functional application to the end user, generally with per-user billing.

Google Apps is the latest entry into this field and seems to have gotten the most press lately; they offer a full office suite including the pre-existing mail (Gmail) service. However, lots of other vendors offer SaaS products: Microsoft offers online versions of Exchange, SharePoint and Dynamics CRM, Salesforce.com has its Salesforce CRM product, and IBM offers LotusLive, which is a hosted Domino environment.

Private Clouds

The thing about all of these "Cloud" architectures we've just described is that none of them are really new. They've all existed in the datacenters of many corporations, running on VMware, Xen, Hyper-V or Citrix servers, in some cases for 10 years or more. What the new IaaS, PaaS and SaaS services offer is a mechanism to outsource these private infrastructures, generally over the internet. The new functions that these public cloud infrastructures bring to the table are new management interfaces and, most importantly, a billing interface.

An interesting thing that we're seeing more and more of lately is internal billing for private cloud services, where production departments within a company are billed by their IT group for use of services in the datacenter. This has been a slow-moving trend over the last 10 years or so, but with the new APIs offered by virtualization platforms, the code required for billing is much easier to write. The goal of these efforts is often a "zero cost datacenter", where production departments, rather than IT, budget for IT services. While this may be a goal to shoot for, it's not one that is commonly realized today.

=============== Rob VandenBrink Metafore ===============

Teredo "stray packet" analysis

This investigation started with Rick observing some odd UDP traffic hitting his firewall. In this case, the traffic came from 66.55.158.116 port 3544. The destination port was a "random" high port. If you would like to provide your packet captures, see the end of this article for the right filter.

Port 3544 is assigned to Teredo. However, Teredo uses this port to establish connections, not necessarily for the actual tunnel traffic itself. As a host establishes a Teredo connection, it will connect to a Teredo server on port 3544 and negotiate the details of the connection. During this negotiation, an IPv6 address is established for the host.

The IPv6 address used by Teredo will always start with "2001:0000:". This /32 prefix is reserved for Teredo. It is followed by the IPv4 address of the Teredo server, 16 bits worth of "Flags" defining the type of NAT used by the client's network, the (obfuscated) UDP port used to connect back to the client and finally the client's (obfuscated) public IPv4 address. To illustrate this, here is a more "graphical" representation of a Teredo address:

2001:0000:SSSS:SSSS:FFFF:UUUU:CCCC:CCCC S = Server IP address, F = Flags, U = obfuscated UDP port, C = obfuscated public client IP address

In short: everything needed to connect back to the client is encoded in the IPv6 address (also see our IPv6 tool).
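The layout above can be decoded with a few lines of Python's standard `ipaddress` module. This is a sketch based on RFC 4380, where "obfuscated" means the port and client address are stored bit-inverted (XORed with all ones). The function name is hypothetical, and the sample address is a documentation-style example, not one from Rick's trace.

```python
import ipaddress

TEREDO_PREFIX = bytes([0x20, 0x01, 0x00, 0x00])  # 2001:0000::/32


def decode_teredo(addr: str) -> dict:
    """Split a Teredo IPv6 address into its server, flags, port and client fields."""
    raw = ipaddress.IPv6Address(addr).packed  # 16 bytes, network byte order
    if raw[:4] != TEREDO_PREFIX:
        raise ValueError(f"{addr} is not inside 2001:0000::/32")
    return {
        "server": ipaddress.IPv4Address(raw[4:8]),
        "flags": int.from_bytes(raw[8:10], "big"),
        # Port and client address are stored bit-inverted, so flip them back.
        "port": int.from_bytes(raw[10:12], "big") ^ 0xFFFF,
        "client": ipaddress.IPv4Address(bytes(b ^ 0xFF for b in raw[12:16])),
    }


info = decode_teredo("2001:0:4136:e378:8000:63bf:3fff:fdd2")
print(info["server"], hex(info["flags"]), info["port"], info["client"])
# 65.54.227.120 0x8000 40000 192.0.2.45
```

The standard library also exposes the server/client pair directly through the `IPv6Address.teredo` property; the manual version above just makes every field visible.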

The Teredo packet itself is rather simple. The IPv6 packet is embedded in an IPv4 UDP packet: an IPv4 header, a UDP header, and then the IPv6 header. Wireshark, for example, does a nice job of analyzing Teredo traffic.
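To make the layering concrete, here is a minimal Python sketch that peels the three headers off a raw IPv4 packet using only `struct` and `ipaddress`. It assumes the UDP payload begins directly with the IPv6 header; RFC 4380 also allows optional authentication and origin-indication headers in front of it, which this sketch rejects rather than parses. The function name is hypothetical and the test packet is built synthetically.

```python
import ipaddress
import struct


def parse_teredo_packet(packet: bytes) -> dict:
    """Peel a raw IPv4/UDP/IPv6 Teredo packet one layer at a time."""
    if packet[0] >> 4 != 4:
        raise ValueError("outer header is not IPv4")
    ihl = (packet[0] & 0x0F) * 4              # IPv4 header length in bytes
    if packet[9] != 17:                       # IPv4 protocol 17 = UDP
        raise ValueError("outer payload is not UDP")
    sport, dport, udp_len, _checksum = struct.unpack("!HHHH", packet[ihl:ihl + 8])
    inner = packet[ihl + 8:ihl + udp_len]     # UDP length includes its 8-byte header
    if len(inner) < 40 or inner[0] >> 4 != 6:
        raise ValueError("UDP payload does not start with a bare IPv6 header")
    return {
        "ipv4_src": ipaddress.IPv4Address(packet[12:16]),
        "ipv4_dst": ipaddress.IPv4Address(packet[16:20]),
        "udp_sport": sport,
        "udp_dport": dport,
        "ipv6_next_header": inner[6],
        "ipv6_src": ipaddress.IPv6Address(inner[8:24]),
        "ipv6_dst": ipaddress.IPv6Address(inner[24:40]),
    }


# Synthetic packet: an empty IPv6 packet (next header 59) inside UDP inside IPv4.
ipv6 = struct.pack("!IHBB", 0x60000000, 0, 59, 255)
ipv6 += ipaddress.IPv6Address("2001:db8::1").packed
ipv6 += ipaddress.IPv6Address("2001:0:4136:e378:8000:63bf:3fff:fdd2").packed
udp = struct.pack("!HHHH", 3544, 40000, 8 + len(ipv6), 0)
ipv4 = struct.pack("!BBHHHBBH4s4s", 0x45, 0, 20 + len(udp) + len(ipv6), 0, 0, 64, 17, 0,
                   ipaddress.IPv4Address("65.54.227.120").packed,
                   ipaddress.IPv4Address("192.0.2.45").packed)
fields = parse_teredo_packet(ipv4 + udp + ipv6)
print(fields["udp_sport"], fields["ipv6_dst"])  # 3544 2001:0:4136:e378:8000:63bf:3fff:fdd2
```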

Now let's go back to Rick's packet trace. If all this is true, then the last 4 bytes of the embedded IPv6 destination address, once the obfuscation is reversed, should match the public IPv4 address the packet was sent to. In Rick's case, this wasn't so: the two addresses didn't match at all.
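The consistency check described above fits in a few lines. This sketch leans on the standard library's `IPv6Address.teredo` property, which returns the embedded `(server, client)` pair with the client address already de-obfuscated, or `None` for non-Teredo addresses. The function name is hypothetical, and treating a non-Teredo destination as a mismatch is a design choice of this sketch.

```python
import ipaddress


def teredo_destination_mismatch(ipv6_dst: str, ipv4_dst: str) -> bool:
    """True when a Teredo packet was delivered to an IPv4 address other than
    the client address encoded in its IPv6 destination."""
    embedded = ipaddress.IPv6Address(ipv6_dst).teredo  # (server, client) or None
    if embedded is None:
        return True  # not a Teredo address at all, so it cannot match
    _server, client = embedded
    return client != ipaddress.IPv4Address(ipv4_dst)


print(teredo_destination_mismatch("2001:0:4136:e378:8000:63bf:3fff:fdd2", "192.0.2.45"))    # False
print(teredo_destination_mismatch("2001:0:4136:e378:8000:63bf:3fff:fdd2", "198.51.100.7"))  # True
```

A `True` result for traffic hitting your own address is exactly the kind of "stray" packet described in this diary.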

The only packets Rick saw were "bubble packets". Bubble packets are used by Teredo to keep the firewall open. Typically every 30 seconds, the Teredo client will send an empty IPv6 packet to the server. This will extend the connection timeout in most firewalls. UDP has no connection state the way TCP does. To preserve a semblance of state, firewalls assume that if a host sent a packet out, a reply may be coming back. Teredo takes advantage of this "stateful UDP" feature in modern firewalls.
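To illustrate what a bubble looks like on the wire, here is a minimal Python sketch that checks whether a Teredo UDP payload is a bubble as RFC 4380 defines it: a bare 40-byte IPv6 header with a payload length of zero and next header 59 ("No Next Header"). It skips an optional origin-indication header but does not parse the optional authentication header; the function name is hypothetical.

```python
import struct

NO_NEXT_HEADER = 59  # IPv6 "No Next Header" value


def is_teredo_bubble(udp_payload: bytes) -> bool:
    """Return True if a Teredo UDP payload is a bubble: a bare IPv6 header
    with a zero payload length and next header 59."""
    data = udp_payload
    # An optional 8-byte origin indication (starting 0x00 0x00) may precede the header.
    if data[:2] == b"\x00\x00":
        data = data[8:]
    if len(data) != 40 or data[0] >> 4 != 6:
        return False  # not exactly one IPv6 header (authentication headers not handled)
    payload_length, next_header = struct.unpack("!HB", data[4:7])
    return payload_length == 0 and next_header == NO_NEXT_HEADER


header = struct.pack("!IHBB", 0x60000000, 0, NO_NEXT_HEADER, 255) + bytes(32)
print(is_teredo_bubble(header))                                 # True
print(is_teredo_bubble(b"\x00\x00" + bytes(6) + header))        # True (origin indication)
print(is_teredo_bubble(header[:6] + bytes([17]) + header[7:]))  # False (next header is UDP)
```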

This is why we would like to collect more of these packets, to see if Rick's experience was just a one-off oddity or if others are seeing the same traffic. Rick did not use Teredo, or IPv6 for that matter. We are particularly interested in your packets if you are NOT using IPv6.

You can use tcpdump or WinDump to collect the traffic. You probably have to collect the traffic outside of your firewall. The tcpdump filter is not perfect and may capture some other UDP traffic (e.g. DNS). Before you send it to us, take a quick look at it to make sure you are not sending us any confidential data.

As a reminder, the tcpdump command line to collect the traffic: tcpdump -c100 -s0 -i any -w /tmp/teredo udp port 3544

------

Johannes B. Ullrich, Ph.D. IPv6 Security Essentials Training

SANS Technology Institute

Twitter

Teredo request for packets

We got an e-mail today from Rick about some really odd UDP traffic he was seeing. We took a look and it looked like Teredo keep-alive traffic (IPv6 tunnels), but Rick wasn't running Teredo. It got some of the handlers wondering, so we're going to ask for packets from those of you who are NOT running Teredo (we don't really want to see your traffic; we're looking for any more of these weird, apparently misdirected packets). So, for those who are willing, could you run the following tcpdump command and upload the results to the contact page? We'll post our analysis in a week or two, if we can figure anything out. Thanx in advance.

tcpdump -c100 -s0 -i any -w /tmp/teredo udp port 3544

---------------

Jim Clausing, jclausing --at-- isc [dot] sans (dot) org

SEC 503 coming to central OH beginning 22 Feb, see here

Various Olympics Related Dangerous Google Searches

We have received reports about the (sadly expected by now) search engine poisoning for various Olympics-related terms. For example, the name of the Georgian luge athlete who was killed is used to redirect unsuspecting users to fake anti-virus and other malicious content. The redirect is browser dependent. Firefox is usually redirected to "qooglesearch.com" (note the 'q' as the first letter instead of a 'g'). It is probably advisable to watch for DNS requests for this domain to spot possible infections. Internet Explorer is redirected to a wide range of different domains, which apparently are picked at random.
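Watching for DNS requests to this domain can be as simple as filtering query names. This is a minimal Python sketch that assumes you have already extracted the queried names from your resolver or DNS sensor logs (that extraction step and the sample names are hypothetical). It matches the domain itself and any subdomain, case-insensitively, without flagging look-alikes that merely end in the same letters.

```python
from typing import Iterable, List

# Domain named in this diary; extend with other indicators as they appear.
SUSPICIOUS_DOMAINS = ("qooglesearch.com",)


def flag_queries(query_names: Iterable[str]) -> List[str]:
    """Return the query names that match, or are subdomains of, a suspicious domain."""
    hits = []
    for raw in query_names:
        name = raw.strip().rstrip(".").lower()   # DNS names are case-insensitive
        if any(name == d or name.endswith("." + d) for d in SUSPICIOUS_DOMAINS):
            hits.append(raw.strip())
    return hits


log = ["www.google.com", "qooglesearch.com.", "ads.QoogleSearch.com", "notqooglesearch.com"]
print(flag_queries(log))  # ['qooglesearch.com.', 'ads.QoogleSearch.com']
```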

Video of the attack

------

Johannes B. Ullrich, Ph.D. - IPv6 Training

SANS Technology Institute

Twitter

New ISC Tool: Whitelist Hash Database

NIST publishes a regularly updated set of CDs with hashes for a number of software packages. The "National Software Reference Library" (NSRL) [1] is frequently used in forensics to eliminate unaltered standard files from an investigation. However, I feel that this database also has a lot of use for malware analysis. Anti-malware software usually takes an "enumerate badness" approach, attempting to come up with signatures for all known malware. With the current flood of new malware variants, this approach does not work well anymore.

One problem with the NIST NSRL was that there was no easy way to look up a single hash or file. You could order the CD set or download it, but there was no simple way to look up just one hash, which is particularly useful for malware analysis. Not anymore. We downloaded the database for you, and it is now available to be queried here: http://isc.sans.org/tools/hashsearch.html .

The plan is to add our own hash collections to it. I may also offer a DNS-based lookup if there is interest. In order to provide some malware information, I added a lookup against the Team Cymru malware hash database [2].

How to use this tool

You may search based on filename, SHA-1 hash or MD5 hash. The malware lookup only works for MD5 hashes right now. For each search, you may get more than one result back. For example, if you search for "cmd.exe", you will get hashes back for the various versions of Windows that include cmd.exe. The same applies if you enter a hash and the same binary was used in multiple products.
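To get the values to paste into the lookup form, here is a minimal Python sketch that computes both the MD5 and the SHA-1 of a suspect file in a single pass with the standard `hashlib` module. The function name is hypothetical; `hexdigest()` returns lowercase hex, so if a lookup ever turns out to be case-sensitive, `.upper()` the result.

```python
import hashlib


def lookup_hashes(path: str, chunk_size: int = 65536) -> dict:
    """Compute the MD5 and SHA-1 of a file in one pass, for a hash lookup."""
    md5, sha1 = hashlib.md5(), hashlib.sha1()
    with open(path, "rb") as handle:
        # Read in chunks so large binaries don't need to fit in memory.
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            md5.update(chunk)
            sha1.update(chunk)
    return {"md5": md5.hexdigest(), "sha1": sha1.hexdigest()}


if __name__ == "__main__":
    import sys
    for name in sys.argv[1:]:
        print(name, lookup_hashes(name))
```

Run it as `python hashes.py suspicious.exe` and search for either value; remember the malware lookup currently needs the MD5.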

If you would like to contribute your own hash collection, please let us know, in particular if you have a good Windows 7 hash collection. As the focus of this tool is malware analysis, hashes of executables and libraries are most appreciated. Please contact us via http://isc.sans.org/contact.html to discuss details. Hashes contributed by sources other than NIST will be marked clearly, as they may not live up to the exacting standards of NIST.

[1] http://www.nsrl.nist.gov/

[2] http://www.team-cymru.org/Services/MHR/

------

Johannes B. Ullrich, Ph.D.

SANS Technology Institute

Twitter

Rogue DHCP server fun

Network Traffic Analysis in Reverse

Most of the time, people focus on what is coming inbound toward their networks. This is quite understandable, as the threat is usually considered to be outside our perimeter, trying to come into our networks. However, looking at traffic in this fashion is sometimes very tedious. A lot can get lost in the noise, especially if the analysis is done at the network edge. There is just so much "background noise" on the internet, such as port scans, old malware lingering around, network probes, etc. There is a lot to filter through.

An interesting exercise is to analyze your outbound traffic. Many organizations do not do good egress filtering. If you have never done this, do some trend analysis on your egress traffic only. In all that noise of traffic destined for your network, what you really want to know is: did a system answer? Do you really know where your internal systems are connecting to? On what ports? Why?

I am not saying that you shouldn't watch traffic destined for your network, but you should spend some quality time analyzing the traffic leaving your network. I would expand this to include traffic flows between your internal systems. If you have never done this, you might be surprised at what you find.
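As a starting point for that kind of trend analysis, here is a minimal Python sketch that separates outbound (internal to external) and internal-to-internal flows and counts them by protocol and destination port. It assumes flow records have already been exported from NetFlow, firewall logs or similar as `(source, destination, destination port, protocol)` tuples; the `INTERNAL_NETS` prefixes and the sample flows are placeholders.

```python
import ipaddress
from collections import Counter
from typing import Iterable, Tuple

# Placeholder internal address space; replace with your own prefixes.
INTERNAL_NETS = [ipaddress.ip_network(p) for p in ("10.0.0.0/8", "172.16.0.0/12", "192.168.0.0/16")]

Flow = Tuple[str, str, int, str]  # (source IP, destination IP, destination port, protocol)


def is_internal(addr: str) -> bool:
    ip = ipaddress.ip_address(addr)
    return any(ip in net for net in INTERNAL_NETS)


def summarize(flows: Iterable[Flow]) -> Tuple[Counter, Counter]:
    """Count outbound and internal-to-internal flows by (protocol, destination port).
    Flows that originate outside INTERNAL_NETS are ignored."""
    outbound, east_west = Counter(), Counter()
    for src, dst, dport, proto in flows:
        if not is_internal(src):
            continue                      # inbound traffic: not the focus here
        bucket = east_west if is_internal(dst) else outbound
        bucket[(proto, dport)] += 1
    return outbound, east_west


flows = [
    ("10.1.1.5", "203.0.113.10", 443, "tcp"),
    ("10.1.1.5", "203.0.113.10", 443, "tcp"),
    ("10.1.1.9", "198.51.100.20", 6667, "tcp"),   # unexpected port: worth asking "why?"
    ("10.1.1.9", "10.1.2.2", 445, "tcp"),
    ("203.0.113.50", "10.1.1.5", 22, "tcp"),      # inbound, skipped
]
outbound, east_west = summarize(flows)
print(outbound.most_common())   # [(('tcp', 443), 2), (('tcp', 6667), 1)]
print(east_west.most_common())  # [(('tcp', 445), 1)]
```

Tracking these counters over days or weeks is what turns them into a trend; the rare entries at the bottom of `most_common()` are often the interesting ones.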

Time to update those IP Bogon Filters (again)

Looks like the Internet Assigned Numbers Authority (IANA) has recently assigned two more IP prefixes to the American Registry for Internet Numbers (ARIN):

50.0.0.0/8 and 107.0.0.0/8

For those of you that maintain IP Bogon Filters, you may want to adjust your filters accordingly.
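Here is a minimal Python sketch of that adjustment using the standard `ipaddress` module: newly allocated prefixes are dropped from the bogon list, or carved out of a larger bogon block that contains them. The bogon entries below are an illustrative excerpt only, not a complete or current list, and the function names are hypothetical.

```python
import ipaddress
from typing import List

IPv4Net = ipaddress.IPv4Network


def remove_allocated(bogons: List[IPv4Net], allocated: List[IPv4Net]) -> List[IPv4Net]:
    """Drop or carve newly allocated prefixes out of a bogon list."""
    remaining = list(bogons)
    for alloc in allocated:
        updated = []
        for net in remaining:
            if net.subnet_of(alloc):
                continue                                    # entirely allocated now: drop it
            if alloc.subnet_of(net):
                updated.extend(net.address_exclude(alloc))  # keep the still-unallocated rest
            else:
                updated.append(net)                         # unrelated prefix: keep as-is
        remaining = updated
    return sorted(remaining)


def is_bogon(addr: str, bogons: List[IPv4Net]) -> bool:
    ip = ipaddress.ip_address(addr)
    return any(ip in net for net in bogons)


# Illustrative excerpt only, not a complete or current bogon list.
bogons = [ipaddress.ip_network(p) for p in
          ("0.0.0.0/8", "10.0.0.0/8", "50.0.0.0/8", "107.0.0.0/8", "127.0.0.0/8")]
newly_allocated = [ipaddress.ip_network("50.0.0.0/8"), ipaddress.ip_network("107.0.0.0/8")]

updated = remove_allocated(bogons, newly_allocated)
print([str(n) for n in updated])          # ['0.0.0.0/8', '10.0.0.0/8', '127.0.0.0/8']
print(is_bogon("107.20.1.1", updated))    # False
```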

G. N. White

ISC Handler on Duty

Critical Update for AD RMS

We received an email from one of our readers today with a link to an MSDN blog post. The article contains information about a required update for Active Directory Rights Management Services.

The article states that the update prevents error messages that are related to the application manifest expiry feature of AD RMS or RMS client and server applications.

The certificate for the RMS add-on for Internet Explorer will expire on February 22nd. According to the article, this add-on allows users to view content with restricted permissions in Internet Explorer. Failure to apply the update may cause issues with accessing or protecting web-based content.

If you are using AD RMS you may want to take a closer look at the article.

Deb Hale Long Lines, LLC

The Mysterious Blue Screen

I am going to learn not to sign up for Handler On Duty any day of the Microsoft Update week. It never fails: there are always issues to be dealt with.

Today the issues to be dealt with are internal to my company. We got to work this morning to discover that a number of computers would not boot up. They had the infamous "Blue Screen of Death". The file indicated as the problem is totally unrelated to Microsoft: it is a kernel-level file for an anti-virus program that we have been using internally for quite some time. The AV uses a ClamAV engine and a few other "interfaces" to package a computer security solution.

After attempting to contact the company today and getting voice mail for both the tech support and partner support lines, I figured that this was a bigger problem than what I was seeing. I did finally get a call back from the company, as well as a couple of emails indicating that the problem was a result of the Microsoft updates. This really puzzles me, because most of our machines are set up NOT to download and install the updates, for this very reason. We prefer to wait a few days after the updates are released before we actually install them, to see if there are problems and to give Microsoft an opportunity to fix them before they break computers.

So my question is: "Did Microsoft force an update despite our automatic updates being turned off?" I have verified that the majority of the computers APPEAR not to have had the patches applied.

I have presented this question to Microsoft and have no answer back yet. As soon as I do, I will update.

The good news is that in our case it was pretty easy to get our machines back online. We just had to boot to a repair disc and remove the driver file (.sys) that was causing the blue screen. Once the file was removed, a reboot returned the computer to normal in every case.

Has anyone else noticed problems on machines with auto-update turned off?

UPDATE: I have been in contact with Microsoft, and they have assured me that there were no updates done outside of their normal updates. They said that if Automatic Updates was turned off, then NO updates were done. So the plot thickens. How is it that NO updates were done, either by the software vendor or by Microsoft, and yet the machines blue screened? Just what happened to our Windows XP and Windows Vista machines that rendered them blue? I will update again as soon as more information becomes available from either Microsoft or the vendor.

Deb Hale Long Lines, LLC

MS10-015 may cause Windows XP to blue screen

We have heard about reports that MS10-015 causes some Windows XP machines to blue screen. If you are seeing this issue, please let us know.

(I am filling in for Deborah on this diary as she is ironically busy dealing with lots of blue screens in her organization, which may be related)

http://www.krebsonsecurity.com/2010/02/new-patches-cause-bsod-for-some-windows-xp-users/

and

http://social.answers.microsoft.com/Forums/en-US/vistawu/thread/73cea559-ebbd-4274-96bc-e292b69f2fd1

------

Johannes B. Ullrich, Ph.D.

SANS Technology Institute

Twitter

Datacenters and Directory Traversals

We got a couple of interesting emails late in the shift today so I thought I'd lump them into one diary.

Tommy asked, "What happens when a SANS taught security guy builds a datacenter?" You have to see this to believe it. He used a former class III safety deposit bank vault and put photos of the construction online at http://www.tylervault.com/how.htm. Nice job!

Ron told us that he "wrote an Nmap script this week to detect a VMWare vulnerability, CVE-2009-3733. It's a nasty one because it's trivial to exploit and potentially incredibly damaging (you can download any file from the filesystem)." The details of the vulnerability were released last weekend at ShmooCon. It's a directory traversal issue; remember those? I thought we figured out ten years ago that this was a Bad Thing™. I guess VMware didn't get the message. Ron's Nmap script and a description of the issue are at http://www.skullsecurity.org/blog/?p=441.
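For anyone writing a service that serves files by name, the classic defense is to resolve the requested path and confirm it still sits under the intended directory. This is a minimal Python sketch of that check with `pathlib`, not VMware's code or fix; the function name is hypothetical.

```python
from pathlib import Path


def resolve_inside(base_dir: str, requested: str) -> Path:
    """Resolve `requested` relative to `base_dir`, refusing anything that escapes it."""
    base = Path(base_dir).resolve()
    # resolve() collapses ".." segments and follows symlinks before the check.
    target = (base / requested).resolve()
    if target != base and base not in target.parents:
        raise PermissionError(f"{requested!r} resolves outside {base}")
    return target


if __name__ == "__main__":
    import tempfile
    with tempfile.TemporaryDirectory() as root:
        print(resolve_inside(root, "logs/vmware.log").name)  # vmware.log
        for attempt in ("../../etc/passwd", "/etc/passwd"):
            try:
                resolve_inside(root, attempt)
            except PermissionError as err:
                print("blocked:", err)
```

Checking the resolved path, rather than scanning the raw string for "../", also catches absolute paths and symlink tricks.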

Marcus H. Sachs

Director, SANS Internet Storm Center

Twitpic, EXIF and GPS: I Know Where You Did it Last Summer

Modern cell phones frequently include a camera and a GPS. Even if a GPS is not included, cell phone towers can be used to establish the location of the phone. Image formats include special headers that can be used to store this information, so-called EXIF tags.

To test the prevalence of these tags and analyze the information leaked via EXIF tags, we collected 15,291 images from the popular image hosting site Twitpic.com. Twitpic is frequently used together with Twitter. Software on smartphones will take the picture, upload it to Twitpic and then post a message on Twitter pointing to the image. Twitpic images are usually not protected and can be viewed by anyone who knows the URL. The URL is short and incrementing, allowing for easy harvesting of pictures hosted on Twitpic.

We wrote a little script to harvest 15,291 images. A second script was used to analyze the EXIF information embedded in these images. About 10,000 of the images included basic EXIF information, like image resolution and camera orientation. 5,247 images included the camera model.

Most interestingly, 399 images included the location of the camera at the time the image was taken, and 102 images included the name of the photographer. Correlating the camera model with the photographer field, we found that the field was predominantly set for Canon and Nikon cameras. Only a few camera phones had the parameter set.

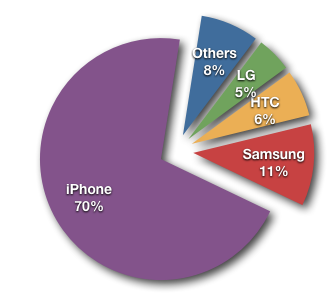

GPS coordinates were only set for phones, with one single exception (a Nikon point-and-shoot camera, which does not appear to come with a built-in GPS; the location may have been added manually or by an external GPS unit). The lion's share of images that included GPS tags came from iPhones.

The iPhone includes the most EXIF information among the images we found. The largest EXIF data set we found is here. It includes not only the phone's location, but also accelerometer data showing whether the phone was moved at the time the picture was taken, and the readout from the built-in compass showing in which direction the phone was pointed at the time.
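EXIF stores a location as the GPSLatitude and GPSLongitude tags, each holding degrees, minutes and seconds as rational numbers, plus GPSLatitudeRef/GPSLongitudeRef letters (N/S, E/W). Plotting points on a map, as in the figures here, needs decimal degrees. This is a minimal Python sketch of that conversion; it is not taken from the scripts linked below, and the function name and sample coordinates are hypothetical.

```python
from fractions import Fraction
from typing import Union

Number = Union[int, float, Fraction]


def dms_to_decimal(degrees: Number, minutes: Number, seconds: Number, ref: str) -> float:
    """Convert EXIF-style degrees/minutes/seconds plus an N/S/E/W reference to decimal degrees."""
    if ref.upper() not in ("N", "S", "E", "W"):
        raise ValueError(f"unexpected hemisphere reference: {ref!r}")
    value = float(degrees) + float(minutes) / 60 + float(seconds) / 3600
    # Southern latitudes and western longitudes are negative in decimal notation.
    return -value if ref.upper() in ("S", "W") else value


# EXIF stores each component as a rational number, e.g. 2340/100 seconds.
lat = dms_to_decimal(38, 53, Fraction(2340, 100), "N")
lon = dms_to_decimal(77, 2, Fraction(1080, 100), "W")
print(round(lat, 6), round(lon, 6))  # 38.889833 -77.036333
```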

Figure 1: Pictures with GPS coordinates broken down by Phone manufacturer.

Figure 2: Geographic Distribution of Images

Now the obvious question: anything interesting in these pictures? The image all the way up north shows an empty grocery store (kind of like in the DC area these days). The picture at the Afghan-Pakistan border shows a pizza... Osama got away again, I guess.

The scripts used for this can be found here: http://johannes.homepc.org/twitscripts.tgz (two scripts; they also need "exiftool" to pull out the data).

------

Johannes B. Ullrich, Ph.D.

SANS Technology Institute

Twitter

Vulnerability in TLS/SSL Could Allow Spoofing

Microsoft released a bulletin yesterday about a potential problem in TLS/SSL that could allow spoofing. From their bulletin:

Microsoft is investigating public reports of a vulnerability in the Transport Layer Security (TLS) and Secure Sockets Layer (SSL) protocols. At this time, Microsoft is not aware of any attacks attempting to exploit the reported vulnerability.

As an issue affecting an Internet standard, we recognize that this issue affects multiple vendors. We are working on a coordinated response with our partners in the Internet Consortium for Advancement of Security on the Internet (ICASI). The TLS and SSL protocols are implemented in several Microsoft products, both client and server, and this advisory will be updated as our investigation continues.

As part of this security advisory, Microsoft is making available a workaround which enables system administrators to disable TLS and SSL renegotiation functionality. However, as renegotiation is required functionality for some applications, this workaround is not intended for wide implementation and should be tested extensively prior to implementation.

Upon completion of this investigation, Microsoft will take the appropriate action to protect our customers, which may include providing a solution through our monthly security update release process, depending on customer needs.

More details are in their bulletin and we'll let you know if we hear anything more. We have not received any reports of in-the-wild exploitation of this potential vulnerability.
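Microsoft's workaround is a Windows-side setting. For comparison only, here is a minimal Python sketch of the analogous knob for a service built on Python's `ssl` module: `ssl.OP_NO_RENEGOTIATION` disables renegotiation for TLS 1.2 and earlier. It needs Python 3.7+ built against OpenSSL 1.1.0h or later, the function name is hypothetical, and the same caution applies: test first, because some applications depend on renegotiation.

```python
import ssl


def tls_server_context_without_renegotiation() -> ssl.SSLContext:
    """Server-side TLS context that refuses renegotiation (TLS 1.2 and earlier)."""
    flag = getattr(ssl, "OP_NO_RENEGOTIATION", None)
    if flag is None:
        # Needs Python 3.7+ built against OpenSSL 1.1.0h or later.
        raise RuntimeError("this Python/OpenSSL build cannot disable renegotiation")
    context = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    context.options |= flag
    return context


ctx = tls_server_context_without_renegotiation()
print(bool(ctx.options & ssl.OP_NO_RENEGOTIATION))  # True
```

Before serving traffic, load a certificate with `ctx.load_cert_chain(...)` as usual.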

Thanks, Cheryl, for bringing this to our attention!

Marcus H. Sachs

Director, SANS Internet Storm Center

February 2010 Black Tuesday Overview

Overview of the February 2010 Microsoft patches and their status.

| Bulletin | Description | Affected | Contra Indications | Known Exploits | Microsoft rating | ISC rating(*) clients | ISC rating(*) servers |
|---|---|---|---|---|---|---|---|
| MS10-003 | Vulnerability in Microsoft Office (MSO) Could Allow Remote Code Execution (Windows and OS X) (Replaces MS09-062) | Office CVE-2010-0243 | KB 978214 | no known exploits | Severity: Important, Exploitability: 1 | Critical | Important |
| MS10-004 | Vulnerabilities in Microsoft Office PowerPoint Could Allow Remote Code Execution (Windows and OS X) | PowerPoint CVE-2010-0029 CVE-2010-0030 CVE-2010-0031 CVE-2010-0032 CVE-2010-0033 CVE-2010-0034 | KB 975416 | no known exploits | Severity: Critical, Exploitability: 2,1,1,1,1,1 | Critical | Important |
| MS10-005 | Vulnerability in Microsoft Paint Could Allow Remote Code Execution | Microsoft Paint CVE-2010-0028 | KB 978706 | no known exploits | Severity: Moderate, Exploitability: 2 | Critical | Moderate |
| MS10-006 | Vulnerabilities in SMB Client Could Allow Remote Code Execution (Replaces MS06-030 MS08-068) | SMB CVE-2010-0016 CVE-2010-0017 | KB 978251 | no known exploits | Severity: Critical, Exploitability: 2,1 | Critical | Critical |
| MS10-007 | Vulnerability in Windows Shell Handler Could Allow Remote Code Execution | ShellExecute API CVE-2010-0027 | KB 975713 | no known exploits | Severity: Critical, Exploitability: 1 | Critical | Important |
| MS10-008 | Cumulative Security Update of ActiveX Kill Bits (Replaces MS09-055) | ActiveX CVE-2010-0252 | KB 978262 | no known exploits | Severity: Critical, Exploitability: ? | Critical | Important |
| MS10-009 | Vulnerabilities in Windows TCP/IP Could Allow Remote Code Execution | IPv6 CVE-2010-0239 CVE-2010-0240 CVE-2010-0241 CVE-2010-0242 | KB 974145 | no known exploits | Severity: Critical, Exploitability: 2,2,2,3 | Critical | Critical |
| MS10-010 | Hyper-V Instruction Set Validation Vulnerability | Hyper-V CVE-2010-0026 | KB 977894 | no known exploits | Severity: Important, Exploitability: 3 | Important | Important |
| MS10-011 | Vulnerability in Windows Client/Server Run-time Subsystem Could Allow Elevation of Privilege | CSRSS CVE-2010-0023 | KB 978037 | no known exploits | Severity: Important, Exploitability: 1 | Important | Important |
| MS10-012 | Vulnerabilities in SMB Server Could Allow Remote Code Execution (Replaces MS09-001) | SMB Server CVE-2010-0020 CVE-2010-0021 CVE-2010-0022 CVE-2010-0231 | KB 971468 | no known exploits | Severity: Important, Exploitability: 2,2,3,1 | Important | Critical |
| MS10-013 | Vulnerability in Microsoft DirectShow Could Allow Remote Code Execution (Replaces MS09-038 MS09-028) | DirectShow CVE-2010-0250 | KB 977935 | no known exploits | Severity: Critical, Exploitability: 1 | Critical | Important |
| MS10-014 | Vulnerability in Kerberos Could Allow Denial of Service | Kerberos CVE-2010-0035 | KB 977290 | no known exploits | Severity: Important, Exploitability: 3 | Important | Important |
| MS10-015 | Vulnerabilities in Windows Kernel Could Allow Elevation of Privilege | Windows Kernel CVE-2010-0232 CVE-2010-0233 | KB 977165 | exploit available | Severity: Important, Exploitability: 1,2 | Important | Important |

We appreciate updates

US based customers can call Microsoft for free patch related support on 1-866-PCSAFETY

- We use 4 levels:
  - PATCH NOW: Typically used where we see immediate danger of exploitation. Typical environments will want to deploy these patches ASAP. Workarounds are typically not accepted by users or are not possible. This rating is often used when typical deployments make it vulnerable and exploits are being used or easy to obtain or make.
  - Critical: Anything that needs little to become "interesting" for the dark side. Best approach is to test and deploy ASAP. Workarounds can give more time to test.
  - Important: Things where more testing and other measures can help.
  - Less Urgent: Typically we expect the impact if left unpatched to be not that big a deal in the short term. Do not forget them however.

- The difference between the client and server rating is based on how you use the affected machine. We take into account the typical client and server deployment in the usage of the machine and the common measures people typically have in place already. Measures we presume are simple best practices for servers, such as not using Outlook, MSIE, Word, etc. to do traditional office or leisure work.

- The rating is not a risk analysis as such. It is a rating of importance of the vulnerability and the perceived or even predicted threat for affected systems. The rating does not account for the number of affected systems there are. It is for an affected system in a typical worst-case role.

- Only the organization itself is in a position to do a full risk analysis involving the presence (or lack of) affected systems, the actually implemented measures, the impact on their operation and the value of the assets involved.

- All patches released by a vendor are important enough to have a close look if you use the affected systems. There is little incentive for vendors to publicize patches that do not have some form of risk to them.

------

Johannes B. Ullrich, Ph.D.

SANS Technology Institute


IPv6 Fundamentals: IPv6 Security Training


When is a 0day not a 0day? Samba symlink bad default config

When is a 0day not a 0day? When the exploit turns out to be just a poor default configuration. Here, the default lets a user follow symlinks out of a share and read any file on the server that their account has permission to read, /etc/passwd for example. The solution, from the Samba Symlink Attack posting here: set "wide links = no" in the [global] section of your smb.conf and restart smbd. Thanks Elazar!
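Checking a fleet of servers for this by hand gets old quickly. As a minimal sketch (wide_links_disabled_globally is a hypothetical helper, not part of Samba), the C function below reports whether the [global] section of some smb.conf text sets wide links to a false value. It relies on smb.conf ignoring case and internal whitespace in parameter names, and it assumes one parameter per line, no backslash continuations, no include= files, and that the last setting wins. It also ignores per-share sections, so a share that sets wide links = yes would still override the global default and needs a separate look.

```c
#include <ctype.h>
#include <stdbool.h>
#include <stddef.h>
#include <string.h>

/* Lowercase the first n chars of s and drop all whitespace. smb.conf
   ignores case and internal whitespace in section and parameter names,
   so "Wide Links" and "widelinks" both become "widelinks". */
static void squash(const char *s, size_t n, char *out, size_t outlen)
{
    size_t j = 0;
    for (size_t i = 0; i < n && j + 1 < outlen; i++) {
        unsigned char c = (unsigned char)s[i];
        if (!isspace(c))
            out[j++] = (char)tolower(c);
    }
    out[j] = '\0';
}

/* Hypothetical audit helper: true only if the [global] section of the
   smb.conf text in conf sets "wide links" to no/false/0.
   Assumptions: one parameter per line, no backslash continuations,
   no include= files, last setting wins, per-share overrides ignored. */
bool wide_links_disabled_globally(const char *conf)
{
    bool in_global = false;
    bool disabled = false;
    const char *line = conf;

    while (*line != '\0') {
        const char *nl = strchr(line, '\n');
        size_t len = nl ? (size_t)(nl - line) : strlen(line);
        char buf[256];
        squash(line, len, buf, sizeof buf);

        if (buf[0] == '[') {
            /* New section header: are we in [global] now? */
            in_global = strncmp(buf, "[global]", 8) == 0;
        } else if (in_global && strncmp(buf, "widelinks=", 10) == 0) {
            const char *v = buf + 10;
            disabled = strcmp(v, "no") == 0 || strcmp(v, "false") == 0 ||
                       strcmp(v, "0") == 0;
        }
        /* Comment lines (# or ;) and blank lines match neither branch. */
        line = nl ? nl + 1 : line + len;
    }
    return disabled;
}
```

Where it is available, Samba's own testparm utility, which parses smb.conf the way the daemons do, is the more authoritative check; this sketch is only meant for quick sweeps.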

Cheers,

Adrien de Beaupré

Intru-shun.ca Inc.


When is a 0day not a 0day? Fake OpenSSH exploit, again.

When is a 0day in OpenSSH not a 0day? When it's local exploit code. Not the kind that exploits a vulnerability in the system you are logged into, to escalate privileges for example, but the kind that takes advantage of potential vulnerabilities in the gray matter between your ears to make a mess of your local system. A reader wrote in to advise us of a potential 0day in the current version of OpenSSH, 5.3/5.3p1, released Oct 1, 2009. He provided a link to a blog post containing what appears to be exploit code. Unfortunately, the first thing I did, before I looked at the code, was fire off an email to the OpenSSH list. They responded quite quickly that "It's pretty clear that the code just exploits your local machine...". Whoops. A follow-up email said "Looks like a rehash of the fake "exploit" from last July." So the good news is that there does not appear to be an OpenSSH 0day making the rounds. The bad news is that if you ran the code, you are rebuilding your system. Worse still, if you emailed all your friends pointing to the 'exploit' code, you now look rather foolish.

Lesson one, for me: always check things out.

Do the research and analysis before crying wolf. Fortunately, no harm was done. This has to be balanced against the requirement for timeliness of information flow along a contact tree. In this case I erred on the side of alerting quickly.

At a quick glance, the C code does appear to run an exploit, and the hex at the beginning could be shellcode. Part of it looks like this:

char jmpcode[] =
    "\x72\x6D\x20\x2D\x72\x66\x20\x7e\x20\x2F\x2A\x20\x32\x3e\x20\x2f"
    "\x64\x65\x76\x2f\x6e\x75\x6c\x6c\x20\x26";

Which starts to look familiar when run through an online Hex to ASCII decoder:

?????r?m? ?-?r?f? ?~? ?/?*? ?2?>? ?/??????d?e?v?/?n?u?l?l? ?&?

Strip out the junk and clean it up, and it looks like this:

"rm -rf ~ /* 2> /dev/null &"

That can't be good. On a lot of Linux or Mac OS X systems, if it is run as root, your hard drive will be pretty busy as it tries to delete everything; if it is not run as root, it "just" deletes your home directory (plus anything else under / your account can write to). Other chunks of the 'code' are a Perl script to join IRC.

Lesson two: just because it looks like shellcode, a buffer overflow, or assembly language doesn't mean it is.

Lesson three is also fairly obvious: make certain you know what the code does before you run it.
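For an obfuscated payload like jmpcode, one way to do that without an online service is to treat the escapes strictly as data and decode them locally. The C sketch below assumes the usual \xHH notation; decode_hex_escapes and has_red_flag are hypothetical helpers, and the red-flag patterns are illustrative only, so a clean result proves nothing.

```c
#include <ctype.h>
#include <stdbool.h>
#include <stddef.h>
#include <string.h>

/* Value of one hex digit, or -1 if c is not one. */
static int hexval(unsigned char c)
{
    if (isdigit(c))
        return c - '0';
    if (isxdigit(c))
        return tolower(c) - 'a' + 10;
    return -1;
}

/* Decode C-style "\xHH" escapes in src into out as plain bytes; any other
   text is copied through unchanged. Returns the number of bytes written
   (excluding the terminating NUL). The result is only data: nothing is
   compiled or executed. */
size_t decode_hex_escapes(const char *src, char *out, size_t outlen)
{
    size_t n = 0;
    if (outlen == 0)
        return 0;
    while (*src != '\0' && n + 1 < outlen) {
        int hi, lo;
        if (src[0] == '\\' && src[1] == 'x' &&
            (hi = hexval((unsigned char)src[2])) >= 0 &&
            (lo = hexval((unsigned char)src[3])) >= 0) {
            out[n++] = (char)(hi * 16 + lo);
            src += 4;                 /* consumed backslash, x, two digits */
        } else {
            out[n++] = *src++;        /* not an escape: copy as-is */
        }
    }
    out[n] = '\0';
    return n;
}

/* Crude red-flag check on decoded text. The pattern list is illustrative
   only: a hit means "do not run this", a miss proves nothing. */
bool has_red_flag(const char *decoded)
{
    static const char *const patterns[] = { "rm -rf", "mkfs", "/etc/shadow" };
    for (size_t i = 0; i < sizeof patterns / sizeof patterns[0]; i++)
        if (strstr(decoded, patterns[i]) != NULL)
            return true;
    return false;
}
```

Fed the text of the two jmpcode string halves (backslashes included), decode_hex_escapes produces "rm -rf ~ /* 2> /dev/null &" and has_red_flag returns true, without anything ever being compiled or run. Anything less obvious still belongs in a throwaway VM.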

Back to the blog post for a second: assuming the poster didn't know what it was and thought it really was a 0day in OpenSSH, perhaps they could have done a bit of checking before posting it online. Then again, it could also have been posted as a prank or practical joke.

Lesson four: just because something is posted online does not mean anyone has actually checked the facts or performed QA on the code.

The last point: inspect the code first, then run it only in a throwaway VM or sandbox.

Thanks to Sander for the original alert, and to Niels and others at OpenSSH for pointing out that this is an old 'sploit and for identifying the underlying shell script.

Cheers,

Adrien de Beaupré

EWA-Canada.com


Mandiant Mtrends Report

Once again a lazy weekend to catch up on some reading. One of the items that came across my email in the last week is the Mandiant Mtrends report.

Mtrends is a fairly concise report on Mandiant's view of the Advanced Persistent Threat (APT). If you are not familiar with the term, APT refers to organized groups of professional hackers who have been targeting corporations and governments around the world. Mandiant has a unique perspective on this issue as one of the few incident handling companies that have been on the front lines of the fight against the APT.

It does require registration to get your copy, but it is a good read.

I have my own views on this report, but for those of you who take the time to read it, I would be very interested in your view of this threat and of Mandiant's report. In your view, is this a realistic appraisal of the situation, or just more FUD (Fear, Uncertainty, and Doubt) added to the pile? Please provide your feedback by commenting on this diary or through our contact page.

-- Rick Wanner - rwanner at isc dot sans dot org


LANDesk Management Gateway Vulnerability

LANDesk has released a security fix for a vulnerability in the LANDesk Management Gateway which, under certain conditions, allows an attacker to perform command injection. This could lead to arbitrary commands being executed as root. The original advisory is posted here.

Affected versions:

LANDesk Management Gateway Appliance 4.0-1.48 and 4.2-1.8

-----------

Guy Bruneau IPSS Inc. gbruneau at isc dot sans dot org


Oracle WebLogic Server Security Alert

Oracle issued a Security Alert that addresses a vulnerability in the Node Manager component of Oracle WebLogic Server (CVE-2010-0073).

According to Oracle, "This vulnerability may be remotely exploitable without authentication. A knowledgeable and malicious remote user can exploit this vulnerability which can result in impacting the availability, integrity and confidentiality of the targeted system." Oracle strongly recommends testing and applying this fix as soon as possible. Additional information is available here.

Affected products:

Oracle WebLogic Server 11gR1 releases (10.3.1 and 10.3.2)

Oracle WebLogic Server 10gR3 release (10.3.0)

Oracle WebLogic Server 10.0 through MP2

Oracle WebLogic Server 9.0, 9.1, 9.2 through MP3

Oracle WebLogic Server 8.1 through SP6

Oracle WebLogic Server 7.0 through SP7

-----------

Guy Bruneau IPSS Inc. gbruneau at isc dot sans dot org


Memory Analysis - time to move beyond XP

One of my interests for the last couple of years has been memory analysis, especially for use in malware analysis. I've mentioned the Volatility framework in previous diaries, and I use it for nearly all of my memory analysis of Windows XP systems, but I've recently begun thinking about what tools I need to do similar analysis on Mac OS X machines. So I was thrilled when I saw that Matthieu Suiche (of windd fame) was giving a talk at BlackHat-DC on Mac OS X memory analysis. The slides are now available and can be found here, and the whitepaper here. A pretty nice read.

---------------

Jim Clausing, jclausing --at-- isc [dot] sans (dot) org

SEC 503: Intrusion Detection In-Depth coming to central OH beginning 22 Feb, http://www.sans.org/mentor/details.php?nid=20864


WordPress iframe injection?

One of the things we seem to harp on here at the SANS Internet Storm Center is monitoring your logs. One of our faithful readers, Neal, sent us an e-mail this afternoon about some strange entries he found in his Apache logs (see below) and some rumblings of a number of WordPress blogs being compromised. He was in contact with one of the affected bloggers, and they figured out that the compromise injected some obfuscated JavaScript that created a hidden iframe. We haven't heard exactly which vulnerability was exploited, but if the log entries are actually related, there may be a permission problem or perhaps some sort of SQL injection issue with Joomla or the TinyMCE editor (at least, that is what the log entries show someone looking for). If any of our readers have info on what the vulnerability is, please drop us a line and we will update this diary. (A Google search didn't show anything recent for TinyMCE; there was a Joomla vulnerability reported in January, but the exploits I've seen didn't touch license.txt.) The particular log entry that caught Neal's attention was:

GET /joomla/plugins/editors/tinymce/jscripts/tiny_mce/license.txt

So you may want to be on the lookout for those in your own logs.
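To sweep existing logs for that request, a few lines of C are enough. This is a sketch assuming Apache common or combined log format, where the request is the first quoted field ("GET /path HTTP/x.y"); is_tinymce_probe and count_tinymce_probes are hypothetical names. It matches the tail of the path, so probes are caught whether or not they start under /joomla.

```c
#include <stdbool.h>
#include <stdio.h>
#include <string.h>

/* Tail of the path from Neal's log entry. Matching the tail catches the
   probe whether or not it is rooted under /joomla. */
static const char probe_path[] =
    "/plugins/editors/tinymce/jscripts/tiny_mce/license.txt";

/* True if one Apache access-log line is a GET for the probed path.
   Only the quoted request field is searched, so a Referer that
   happens to contain the path does not count. */
bool is_tinymce_probe(const char *line)
{
    const char *req = strstr(line, "\"GET ");
    if (req == NULL)
        return false;
    req += 5;                              /* skip the quote, GET and space */
    const char *end = strchr(req, '"');    /* closing quote of the request */
    const char *hit = strstr(req, probe_path);
    return hit != NULL && (end == NULL || hit < end);
}

/* Count probe lines in an open log file. Lines longer than the buffer are
   read in pieces, so a probe buried in an extremely long line may be
   missed. */
long count_tinymce_probes(FILE *log)
{
    char line[4096];
    long hits = 0;
    while (fgets(line, sizeof line, log) != NULL)
        if (is_tinymce_probe(line))
            hits++;
    return hits;
}
```

To use it, open the access log with fopen(path, "r") and pass the FILE * to count_tinymce_probes. A plain grep for license.txt gets you much the same answer; the only difference here is that the match is confined to the request field, so a Referer containing the path is not counted.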

---------------

Jim Clausing, jclausing --at-- isc [dot] sans (dot) org



Dealing with User 2.0

Computing has been around for a while, and security has grown with it over the last few decades. Increasingly, however, I'm coming across User 2.0, and I am betting that you are as well. They bring their own particular security challenges that we need to start solving in order for our organisations to grow and compete in the User 2.0 world.

Some of us who are a little bit worn around the edges will remember User 0.1. The world was good. Users had nice green screens in front of them, they could type only those bits that the application needed, and securing the environment was a cinch. Well, relatively: the mainframe required you to manage users and grant access to resources using RACF, ACF2 or even Top Secret. It was, however, for most of us, not a very connected world, and User 0.1 happily lived in this green glowing environment. They even still knew how to write using a pen and paper!

Then something horrible happened: these newfangled things called "personal computers" started to make an appearance. Even worse, people realised that if students and the military could have computers talking to each other, then why couldn't they? This is where it started to get trickier for us security folks. Many of us grew up in mainframe or Unix environments, and with a few exceptions these were tightly controlled. User 0.5 was born and demanded connectivity from their new PC to the old world of Unix and mainframes.

User 1.0 came along when businesses started to connect to the internet and conduct business on it. Many User 1.0s were upgraded from User 0.1 or 0.5, so they had an almost automatic acceptance of the restrictions and limitations that we as security folks placed on them: a standard desktop environment with standard applications that cannot be changed, corporate computers issued to staff, firewalls, content filtering, etc., etc., etc.

Security groups also changed their approach over time. Where many initially started as the "thou shalt" people with User 0.1, with User 0.5 they added "nay" to their vocabulary. There were strict controls in place, and the usual answer to many requests involving security was "NAY". Thankfully this phase didn't last long, and with understandable exceptions, most security groups changed their approach and started working with the business rather than against it (Darwin, eat your heart out). So today we see most security groups working with the business. With User 1.0, security groups have learned some new words: "Yes we can, but only if you use this and this and this." But that is OK; User 1.0 isn't giving security groups that hard a time. They are willing to use the applications they have been given, and they will learn the tools when they move from company to company. Business objectives are being met and security groups are helping to achieve this. However, much of this still depends on having standard applications used by all, with few exceptions, and on relatively tight control over the environment. Yes, we have to let things through our firewalls and filters that a few short years ago we would have denied, but they can be managed.

The User 1.0 era, however, is drawing to an end. User 1.0 is slowly being upgraded, although not every User 1.0 will be fully upgradable to User 2.0 or beyond, and a new user has arrived: User 2.0. User 2.0, or Gen Y as some people like to call them, are the digital generation, and many businesses, including their security teams, are struggling to deal with them. User 2.0 grew up digitally: vinyl is something that is on the floor, rotary phones are something you see in old movies, and a walkman is someone who takes the dog for its run.